In Part One, we introduced some climate model basics, including uses of climate models (not all of which are about “projecting” the future).

And we took a look at them in their best light – on the catwalk, as it were.

Well, really, we took a look at the ensemble of climate models. We didn’t actually see a climate model at all..

Ensembles

The overall evaluation in Part One was the presentation of a “multi-model mean” or an ensemble. An ensemble can be the average of many models, or the average of one model run many times, or both combined.
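As a minimal sketch of what "ensemble" means in practice, the snippet below averages a few temperature values. The model names and numbers are invented purely for illustration; they are not output from any real GCM.

```python
from statistics import mean

# Hypothetical annual-mean temperatures (°C) at one grid cell.
# Each model has been run several times; all values are invented.
runs = {
    "model_a": [14.1, 14.3, 14.2],
    "model_b": [13.6, 13.8, 13.7],
    "model_c": [14.9, 14.7, 14.8],
}

# Ensemble of one model run many times: average that model's runs.
per_model_mean = {name: mean(values) for name, values in runs.items()}

# Multi-model mean: average the per-model means, so that a model
# with more runs would not get more weight than the others.
multi_model_mean = mean(list(per_model_mean.values()))

print(per_model_mean)
print(round(multi_model_mean, 2))
```

Averaging the per-model means first is one possible design choice (an assumption here); pooling every run from every model into a single average would be another.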

We will return to more discussion about the curious nature of ensembles in a later post. Just as a starter, two observations from the IPCC.

IPCC AR4 in Chapter 8, Climate Models and their Evaluation, comments:

There is some evidence that the multi-model mean field is often in better agreement with observations than any of the fields simulated by the individual models (see Section 8.3.1.1.2), which supports continued reliance on a diversity of modelling approaches in projecting future climate change and provides some further interest in evaluating the multi-model mean results.

and a little later:

Why the multi-model mean field turns out to be closer to the observed than the fields in any of the individual models is the subject of ongoing research; a superficial explanation is that at each location and for each month, the model estimates tend to scatter around the correct value (more or less symmetrically), with no single model consistently closest to the observations. This, however, does not explain why the results should scatter in this way.

One interpretation of this would be:

We like ensembles because they give more accurate results, but we don’t really understand why..
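The "superficial explanation" in the IPCC quote can be illustrated with a toy experiment. This is only a sketch: the number of models, the number of locations and the Gaussian scatter are all assumptions made for the example, and the code assumes the symmetric scatter rather than explaining it.

```python
import random
from statistics import mean

random.seed(0)

N_LOCATIONS = 500   # hypothetical grid cells
N_MODELS = 20       # hypothetical number of models
TRUTH = 15.0        # same "observed" value everywhere, for simplicity


def rmse(simulated, observed):
    """Root-mean-square error between two equal-length sequences."""
    return mean((s - o) ** 2 for s, o in zip(simulated, observed)) ** 0.5


observed = [TRUTH] * N_LOCATIONS

# Assumption: each model's error at each location is an independent
# draw from a zero-mean Gaussian (the "symmetric scatter" in the quote).
models = [
    [TRUTH + random.gauss(0.0, 2.0) for _ in range(N_LOCATIONS)]
    for _ in range(N_MODELS)
]

# Multi-model mean at each location.
ensemble = [mean(column) for column in zip(*models)]

individual_rmse = [rmse(m, observed) for m in models]
ensemble_rmse = rmse(ensemble, observed)

print(f"best single model RMSE: {min(individual_rmse):.2f}")
print(f"ensemble mean RMSE:     {ensemble_rmse:.2f}")
```

Under these assumptions the ensemble beats every individual model, because independent errors partly cancel when averaged. An error shared by all the models would not average away, which is why the scatter itself still needs explaining.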

A subject to come back to. Now it’s time for a real model..

Step Forward Climate Model “Cici” – CCSM3

CCSM3, “Cici”, is the model from NCAR (National Center for Atmospheric Research) in the USA. Out of all the GCMs discussed in the IPCC AR4, Cici has the “best curves” – the highest resolution grid. Well, she comes from the prestigious NCAR..

The model’s vital statistics – first the atmosphere:

- top of atmosphere = 2.2 hPa (=2.2mbar), this is pretty much the top of the stratosphere, around 50km

- grid size = 1.4° x 1.4° (T85)

- number of layers vertically = 26 (L26)

second, the oceans:

- grid size = 0.3°–1° x 1°

- number of vertical layers = 40 (L40)
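To get a feel for what the atmospheric numbers mean, here is a rough back-of-envelope calculation. It assumes a regular latitude-longitude grid with uniform 1.4° spacing, which is only an approximation to the model's actual grid, and it ignores the ocean grid because its spacing varies.

```python
# Rough size of the atmospheric grid implied by the statistics above.
# Assumption: uniform 1.4 degree spacing in both directions.
GRID_SPACING_DEG = 1.4
N_LEVELS = 26

n_lon = round(360 / GRID_SPACING_DEG)   # points around a latitude circle
n_lat = round(180 / GRID_SPACING_DEG)   # points from pole to pole
n_columns = n_lon * n_lat               # vertical columns of air
n_cells = n_columns * N_LEVELS          # total 3D grid cells

# East-west width of one cell at the equator
# (Earth's equatorial circumference is about 40,075 km).
cell_width_km = 40075 / n_lon

print(n_lon, n_lat, n_columns, n_cells, round(cell_width_km))
```

So even the highest resolution model in AR4 has atmospheric grid cells that are well over 100 km across at the equator, which is why smaller-scale processes such as individual clouds cannot be resolved directly.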

The vital statistics give a quick indication of the level of resolution in the model. And there are also model components for sea ice and land. The model doesn’t need the infamous “flux adjustment”, which is the balancing term for energy, momentum and water between the atmosphere and oceans, required in most models to keep the two parts of the model working correctly.

The CCSM3 model is described in the paper: The Community Climate System Model Version 3 (CCSM3) by W.D. Collins et al, Journal of Climate (2006). The source code and information about the model are accessible at http://www.ccsm.ucar.edu/models/.

And for those who love equations, especially lots of vector calculus, take a look at the 220 page technical document on CAM3, the atmospheric component.

It will be surprising for many to learn that just about everything on this model is out in the open.

CCSM3 Off the Catwalk – Hindcast Results

As with the multi-model mean results in Part One, we will look through a similar set of results for CCSM3.

Annual temperature

Cici looks pretty good.

Details – The HadISST (Rayner et al., 2003) climatology of SST for 1980-1999 and the CRU (Jones et al., 1999) climatology of surface air temperature over land for 1961–1990 are shown here. The model results are for the same period of the CMIP3 20th Century simulations. In the presence of sea ice, the SST is assumed to be at the approximate freezing point of sea water (–1.8 °C).

However, it’s hard to tell looking at two sets of absolute values, so of course we turn to the difference between model and reality.

Annual Temperature – Model Error

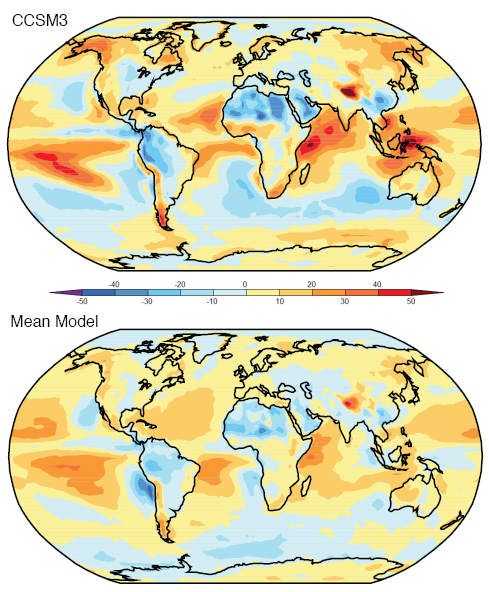

Model simulations of annual average temperature less observed values for Cici and for the “ensemble” or multi-model mean:

In terms of absolute error around the globe, Cici and the ensemble are very close (using the Anglotzen statistical method).
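For readers who would rather put a number on "very close", here is a sketch of one common way to summarise a map of model error: a mean absolute error weighted by the cosine of latitude, so that the small grid cells near the poles don't count as much as the large ones near the equator. This is not the method used for the figures in this post, and the tiny grid below is invented.

```python
import math


def area_weighted_mean_abs_error(model, observed, lats_deg):
    """Mean absolute error on a lat-lon grid, weighted by cos(latitude).

    model, observed: lists of rows, one row per latitude.
    lats_deg: latitude of each row, in degrees.
    """
    total, weight_sum = 0.0, 0.0
    for row_m, row_o, lat in zip(model, observed, lats_deg):
        w = math.cos(math.radians(lat))  # cells shrink towards the poles
        for m, o in zip(row_m, row_o):
            total += w * abs(m - o)
            weight_sum += w
    return total / weight_sum


# Tiny invented example: 3 latitudes x 2 longitudes, values in °C.
lats = [-60.0, 0.0, 60.0]
obs = [[2.0, 3.0], [26.0, 27.0], [1.0, 0.0]]
sim = [[4.0, 5.0], [26.0, 27.0], [3.0, 2.0]]

score = area_weighted_mean_abs_error(sim, obs, lats)
print(round(score, 3))
```

In this example all of the error sits at high latitudes, so the weighted score comes out lower than a simple unweighted average of the same errors would.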

We could note that even though the values are “close”, there are areas where Cici – and the ensemble – don’t do so well. In Cici’s case southern Greenland and the Labrador Sea, which might be very important for predicting the future of the thermohaline circulation. And both are particularly bad for Antarctica, a general problem for models.

To give an idea of the variation of models, here are all of the models reviewed by the IPCC in AR4 (2007):

The top right is Cici (red circle). It’s clear that Cici is a supermodel..

Standard Deviation of Temperature

The standard deviation of temperature – “over the climatological monthly mean annual cycle” – simulated less observed for Cici and the ensemble. We could describe it as: how good is the model at working out how much temperature actually varies over the year in each location?
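As a sketch of what this statistic measures at a single location, take twelve climatological monthly means and compute their standard deviation, once for the observations and once for the model. The monthly values below are invented, and the use of the population standard deviation is an assumption of this example.

```python
from statistics import pstdev

# Invented climatological monthly mean temperatures (°C), Jan..Dec,
# for one mid-latitude land location.
observed_monthly = [-8, -6, 0, 7, 14, 19, 22, 20, 14, 7, 0, -6]
model_monthly = [-5, -4, 1, 7, 13, 17, 19, 18, 13, 7, 1, -3]

obs_sd = pstdev(observed_monthly)
model_sd = pstdev(model_monthly)

# "Simulated less observed": a negative value means the model's
# seasonal swing is too small at this location.
sd_error = model_sd - obs_sd
print(round(obs_sd, 2), round(model_sd, 2), round(sd_error, 2))
```

Note that a model could have the right annual average temperature at a location and still get this statistic wrong, which is why it is shown separately.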

First, however, to make sense of the “error” of model less actual, we need to know what actual values look like:

As we would expect, the oceans show a lot less temperature variation than the land and around the tropics and sub-tropics the variation is close to zero.

Now let’s take a look at the model less actual, or “model error”:

We can see that Cici has some problems in modeling temperature variation, especially under-estimating the actual variation around northern Russia and Canada and over-estimating the variation in the Middle East and Brazil. The ensemble appears to be in slightly better shape here.

Of course, these areas are where the largest temperature variation takes place.

Diurnal Range of Land Temperature

As before, first the actual values:

And now the model less actual, or “model error”:

We can see a lot of areas where the model error is quite large, usually corresponding to larger measured values. In the case of Greenland, for example, the annual average diurnal temperature range is over 20°C, while the model under-estimates this by more than 10°C. Given the legend the error might be as big as the actual value..

We can also see that on average Cici under-estimates the diurnal temperature range, and the ensemble is closer to neutral but still appears to under-estimate.

Here’s another comparison which demonstrates the problem of all the models vs observation:

The black line is the observed value. We can see that all of the models except for one are definitely under-estimating, and none of the models are particularly close to the observed values.

Now we can get to see more fundamental values.

Reflected Solar Radiation

This value is essential for calculating the basic radiation budget for the earth.
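For context, here is the global-average arithmetic behind "the basic radiation budget". The solar constant and albedo are round textbook values, assumed for this sketch rather than taken from the figures in this post.

```python
# Global-mean radiation budget, using round textbook values.
SOLAR_CONSTANT = 1361.0   # W/m^2 at top of atmosphere, facing the sun
ALBEDO = 0.3              # approximate planetary albedo (assumed)

incoming = SOLAR_CONSTANT / 4      # averaged over the whole sphere
reflected = ALBEDO * incoming      # reflected solar (shortwave)
absorbed = incoming - reflected    # what the climate system absorbs

# In equilibrium, outgoing longwave radiation (OLR) balances `absorbed`.
print(round(incoming), round(reflected), round(absorbed))
```

An error of a few tens of W/m2 in reflected solar radiation at a given location is therefore a large fraction of the typical value, which is worth keeping in mind when looking at the maps.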

First the actual values as measured by ERBE (1985-1989):

And now the model error – model less actual:

The ensemble is definitely better than Cici. Cici has some large errors, for example, North Africa, Pacific Ocean and the Western Indian Ocean where the model error seems to be up to half of the actual value.

If we look at the values averaged by latitude the results appear a little better:

But the deviations give us a better view:

Note Cici in the solid blue line. The ensemble is proving to be the pick of the bunch..

So the model’s ability to simulate reflected solar radiation is much better by latitude than by location. But most or all of the models have significant discrepancies even when averaged over each latitude.
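One plausible contributor to the better-looking latitude averages is simple cancellation: errors of opposite sign at different longitudes offset each other when averaged around a latitude circle. A sketch with invented error values:

```python
from statistics import mean

# Invented model errors (model minus observed, W/m^2) at six
# longitudes along one latitude circle.
errors_along_latitude = [25.0, -20.0, 15.0, -30.0, 10.0, 6.0]

# Error of the zonal (latitude-circle) average.
zonal_mean_error = mean(errors_along_latitude)

# Typical size of the error at individual locations.
mean_abs_local_error = mean(abs(e) for e in errors_along_latitude)

print(zonal_mean_error, round(mean_abs_local_error, 1))
```

Here the zonal mean error is small even though the typical local error is large. Whether this is what happens in the real model results would need checking against the data.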

Outgoing Longwave Radiation

The other side of the radiation budget, first the actual ERBE measurement (1985-1989):

And now the model error – model less actual:

As with reflected SW radiation, the ensemble performs better than Cici. So while measured values are in the range of 200-300 W/m2, Cici has some areas where the (absolute) error is in excess of 30W/m2.

Looking at the OLR values averaged by latitude, the results appear a little better:

And the deviations, or model error:

Rainfall

Measured from CMAP, 1980-1999:

Units are in cm of rainfall per year. And now the model error – model less actual:

Once again the ensemble outshines Cici. There are some substantial errors in the areas where rainfall is high.

As with some of the previous model results, if we look at the model vs observed by latitude the picture is somewhat better:

Humidity

Lastly, we will take a look at specific humidity. First the “measured”, as recalculated by ERA-40:

Observed annual mean specific humidity in g/kg, averaged zonally, 1980-1999. Note that the vertical axis is pressure on the left in mbar and km in height on the right.

And now the model error – but this time in % = (model – actual)/actual x 100:
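A small sketch of the percentage formula, with invented humidity values, shows why this measure behaves differently where the air is very dry:

```python
def percent_error(model, actual):
    """Relative error in percent: (model - actual) / actual x 100."""
    if actual == 0:
        raise ValueError("relative error is undefined when actual is zero")
    return (model - actual) / actual * 100.0


# Invented specific humidity values in g/kg.
near_surface = percent_error(model=15.5, actual=15.0)  # moist lower troposphere
upper_level = percent_error(model=0.15, actual=0.10)   # dry upper troposphere

print(round(near_surface, 1), round(upper_level, 1))
```

In this made-up example the absolute error near the surface is ten times bigger than the one higher up, yet the percentage error is far smaller. Large percentage errors at altitude can correspond to quite small amounts of water vapor.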

Once again the ensemble appears to outperform Cici. And both, but especially Cici, have problems in the top half of the troposphere (around 500-200mbar) with 20-50% error in some regions in Cici’s case.

Conclusion

This has been a quick survey of model results for different parameters across the globe, but averaged annually, compared with observations.

In Part One, we saw the ensemble in its best light. But when we take a look at a real model, the supermodel Cici, we can see that she has a lot of areas for improvement.

There’s lots more to investigate about models, all to come in future parts of this series.

As always, comments and questions are welcome, but remember the etiquette.


Totally interesting and extremely easy to understand. A corner of my brain is now a lot tidier as a result, thanks.

Specific humidity is expressed as mass percent on the ‘actual’ plot?

You ask the question why the ensemble of models works better than individual models.

I have an extremely limited experience in physics models of atmospheric processes. However I used to develop Gaussian Plume and Puff models for air quality purposes and many of my models were used for my regional EPA assessments.

As part of the modeling process the very first step was to ensure that the model results looked at least reasonable compared to measurement – hindcasting if you like. The way the process worked was to take existing physics models or develop ab-initio models, write some code, and use data inputs – in my case usually time-series spatially distributed temperature, wind speed/direction, and solar radiation. Then run the model and see how well it compared to observation – e.g. SO2 or Radon measurements in the study area.

If the model vs observation was way out of whack then the model was modified with more ‘physics’ or regional ‘adjustments’ were made. In the end my boss – (later head of my regional EPA) would say whether the model was acceptable – based on fairly subjective assessment.

Now my models were probably 1% of the complexity of the GCMs perhaps even 0.1% but I suspect the same principles apply. That is each model’s physics and data are tweaked to give a result basically in the ballpark for present state and some prior state.

The reason that the composite of GCM models is better than individual models is that each has a slightly different take on the physics, but all have the same common goal to match existing observations. If one gets the physics wrong in a particular detail, then another may get it right and overall the result will be reasonably accurate. *For the existing observation data set*

Now the problem is that each and every model has gone through a refinement to match observation. In all cases real physical principles are applied. The problem is that the physics adjustments are always the ‘easiest’ adjustments to make – or to be precise the least expensive in terms of data and coding. That they make a goodish fit is expected. That the ensemble is better than the parts is accepted (there is more physics involved). That they make an accurate model that has future validity is not so certain. The model development process is to minimise differences between model and current observation, not to guarantee future accuracy.

The development of more accurate models will require much greater observational ability and much better physics modeling and coding. The relatively small data set of observations with relatively few parameters and very sparse observing points does not allow better models to be objectively made. In particular a ‘better’ model can’t be shown to be better than some other model – due to the lack of observations. This is simple statistics resulting from a short period of observations on a very limited set of observational parameters. There is – in my opinion – no possible way that computer models can be provably improved using the very limited set of observed data.

When you are dealing with processes that cover decades, centuries and millennia you need accurate data to measure against to at least make sure your model matches the present – ignoring the future. I realize this is not directly relevant, but in my other field – Oceanography – I had to use the Rayleigh criteria to discern signal from noise. I suggest that on the time scales we are looking at, the models are too simplistic, the available data is too short in duration, and the parameters measured to validate the models are insufficient.

Dave McK:

Somehow my own comment didn’t make it onto the blog, perhaps the spam filter caught it..

I did update the article. The actual plot is g/kg – specific humidity. The model results are % error.

Jerry:

I think your assessment of why an ensemble is “better” is close to the mark. I have this theory in my head that I can’t properly express but you have encapsulated my woolly idea pretty well.

The IPCC summary clearly indicates that they can’t come up with a good reason why the ensemble should be preferred on technical grounds. Just it gives results that are, on average, closer to reality.

But, like a parameterization that works but has no physical basis, should we accept it?

Much to question with “ensembles”..

On your later point about validating the models, again the IPCC summary (of the views of the climate science community) is clearly struggling.

Possibly more on that in later posts.

Do models include the cooling effect of the ozone hole over the Antarctic? Given the altitude they cover, they should, but the error in that region seems so “robust”…

Do you intend to cover the tropospheric hotspot issue in a future post?

The large polar artifacts may be due to the absence of actual measurements and infilling from stations quite distant- and obviously from warmer latitudes, since 0 is the north pole.

http://wattsupwiththat.files.wordpress.com/2010/03/ghcn2_stations_global.jpg

How does this reconcile with the view that errors tend to propagate and grow, and that multiple layers of processing will increase, not decrease noise?

Alexandre:

I’m not sure whether the “general run” of a GCM like Cici will produce the Antarctic results, but as you might expect many people have used GCMs to focus on this.

The main approach is to use a chemistry climate model (CCM) in conjunction with a GCM. The big challenge is simulating the ozone changes – a 3d problem with lots of non-linearities.

With ozone changes correct – or “prescribed” from observational results – it would be surprising if less ozone didn’t result in cooling in the models, as the absorption of solar radiation by ozone is quite a simple process. Still this last point is an assumption. More digging required.

One paper that doesn’t require “access” and gives a flavor of the problem is The simulation of the Antarctic ozone hole by chemistry-climate models, H. Struthers et al, 2009. There’s also what looks like a more interesting paper (requires access): Goddard Earth Observing System chemistry‐climate model simulations of stratospheric ozone‐temperature coupling between 1950 and 2005, S. Pawson, J. Geophys. Res (2007).

And the tropospheric hotspot issue is definitely a subject for posting on..

I read the abstracts and conclusion (of the free paper). Thank you.

As I understood it, those are complicated dynamics that are not yet well enough reproduced in the specific models, let alone the “general” ones.

Your blog (and of course your personal dedication) has been a very helpful resource to me to learn about climate and AGW. Thanks again.

http://ocean.dmi.dk/arctic/meant80n.uk.php

Arctic temps by day of the year

Alexandre

Do models include the cooling effect of the ozone hole over the Antarctic?

It would appear the question of whether or not the ozone hole will make things warmer or cooler is unanswered.

http://www.guardian.co.uk/environment/2009/dec/01/ozone-antarctica

“As blanket of ozone over southern pole seals up, temperatures on continent could soar by 3C, increasing sea level rise by 1.4m”

I hope we haven’t tossed out the CFC making equipment.

I did not see anything conflicting in the Guardian article:

More ozone in the stratosphere makes the south pole warmer.

The ozone *hole*, on the other hand, makes it cooler.

I think that the two figures that intend to show the reflected solar radiation as a function of latitude are actually showing the outgoing long-wave radiation. The maximum at the equator of about 250 W/m2 fits the values shown in the following figure, whereas the spatial map of reflected solar radiation displayed just before indicates values of at most 150 W/m2.

eduardo

You are right, I think I have mixed up my graphs.. give me a few minutes..

… fixed up. I added the SW reflected radiation (model vs observed and model error) and moved the OLR (model vs observed and model error) to the right place with new captions.

Thanks for pointing it out.

The outgoing radiation by latitude plots overlaid on the humidity by latitude plot just make a very coherent picture to me. The reflected radiation and the precipitation plots, similarly.

It’s shaping up very nicely.

Time reading your articles is always a positive experience.

hunter:

Can you rephrase your question, I don’t really understand what you are asking.

Dave McK:

Anything’s possible, I gave up trying to understand the minutiae of temperature measurements a while ago..

But the legend gives us more than 5°C error with Cici vs observation – I expect the issue is with the model.

check it out.

It would be really interesting to compare the plots by-day vs by-night.

If I’m actually making sense of it, something like this follows:

The LW plot would resemble the precipitation plot at night.

I have a feeling also that the precipitation one may have a distinct east/west (day/night) difference that’s blended in the plot shown.

For example, the central peak may represent phenomena to the east of noon as the equatorial column ceases to be fed energy and can’t rise as it did when it was powered, so is no longer spread out to the lower latitudes the same as before.

scienceofdoom,

Here is another stab at it:

Flaws and imprecision tend to propagate.

They tend to hide in self-referential systems.

IOW, a bit of noise going in can easily grow into a lot of noise going out.

How does the ensemble approach reduce this?

I am skeptical about modelling, though I have no doubt that AGW happens.

There is another discipline, very well funded and with the very best in CIT, which makes mathematical models all the time and COMPARES THE RESULTS WITH THE ACTUAL OUTCOME REGULARLY.

It works on very much the same data as climate modelers use. It is known as meteorology, and it STILL can’t tell me what the weather will be like next Sunday.

The British Met. Office has stopped producing seasonal forecasts, perhaps because they fear that their abysmally inaccurate forecasts for the last 12 months might lead people to question how they could possibly predict climate change.

(I suspect the journalists got it all wrong, but my favourite headline on that subject last summer – from the Daily Mail, not our most intellectual paper, mind you – read something like this:

“Met Office says there is a 50% chance that summer will be warmer than average”

Who needs a computer ?! )

Apropos of this, weather is chaotic and thus unpredictable over relatively short time spans. Climate is weather over a long period. It would be reasonable to assume that climate was also chaotic and thus unpredictable over longer time spans. The historic temperature data seem to bear this out.

James McC:

One of the big questions is whether climate, as the average of weather, averages out all these chaotic fluctuations.

Science of doom

Isn’t the issue that although climate is the average and variability of weather, the climate models are not focused on that? They are in fact modeling non-weather parameters and trying to forecast what weather averages and variability will result.

So the process – as I understand it – is to generate a model, make sure the averages and variability of some weather parameters match present day – and even a bit of prior. Then run the models forward in time using non-weather parameters that are synthesised. At various future dates averages and variability of weather parameters are emitted as a ‘byproduct’ and it is these that are then used to announce hotter/colder/wetter/dryer/stormier/calmer.

As I pointed out before, there is no way to know on present time scales which models are better than others. They are all using similar input data processed by a whole host of physics programs such as computational fluid dynamics. They then all use their own little ‘physics’ estimates for the stuff they can’t measure or forecast with any accuracy – such as atmospheric lapse rates, cloud cover, aerosol content, albedo, vegetation cover, emissions, land use, solar inputs, atmospheric chemistry, vertical mixing, sea/air interaction, ocean chemistry, mineral/air interaction, ETC.

All models match some measurable parameters today. Which model is accurate? The only true way to know is the actual experiment of doing a forecast and waiting 100 years.

Do lots of different models make the process any better? Perhaps, but there is always the peer thing. If your model is significantly different from the mean then it simply won’t be assessed as relevant. There is an incredibly strong pressure to confirm existing model results.

In some way the development of models is chaotic. The earlier models will have the greatest influence on the development of the model consensus. It is then entirely random as to what the consensus will be depending on who gets in first. And in climate modeling there is no annual review of whose model worked best – as in weather forecast models. The review period is at best relevant on century scales.

scienceofdoom,

You ask the excellent question, “One of the big questions is whether climate, as the average of weather, averages out all these chaotic fluctuations.”

I think it is reasonable to say that climate *is* weather. Climate exists as weather. I do not think climate, per se, does anything. A way to think of this is that actuaries measure the stats of particular risks and form models of those events occurring. But the tables they form can never be confused with the events.

heh- even climate is local and variable, hence the term ‘microclime’

if all the microclimes reversed, you might get the same average.

I think one can speak of an average ‘global heat content’ as some kind of general proxy related to weather over time, though.

Dave McK,

Climate by definition is variable, since what it is made of- weather- is the same. Microclime makes me think of one of my favorite places to visit, the San Francisco Bay area, composed of many micro climates.

But heat content must not be the only factor.

Land use, aerosols, gas mixes, are vital as well, to name a few.

Heh- I was actually thinking of a neighbor’s complaint when I put up a house on Cascade in Mill Valley, so I know Zackly.

Jerry

All good questions and comments, many of which will hopefully get looked at in more detail.

For hunter and others, on my comment –

What I’m thinking about is more around this idea:

If weather is a series of random events around “the average” (which we can call “climate”) then maybe the unpredictability of weather, which everyone subscribes to, averages out to something non-chaotic.

I’m not saying it does. I’m just putting it forward as a possibility that should be considered. Weather is chaotic. Is climate chaotic? Perhaps yes, perhaps no. But just because weather is chaotic I don’t think we can assume that climate is chaotic without more information.
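To make the possibility concrete, here is a sketch using the classic Lorenz (1963) equations, a standard toy example of chaos. It is emphatically not a climate model, and the step size, run length and starting points are arbitrary choices for this example. It only shows that a system can be unpredictable in its details while its long-term averages stay stable.

```python
import math


def lorenz_step(state, dt, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Advance the Lorenz (1963) system one step with 4th-order Runge-Kutta."""
    def deriv(s):
        x, y, z = s
        return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = deriv(state)
    k2 = deriv(shifted(state, k1, dt / 2))
    k3 = deriv(shifted(state, k2, dt / 2))
    k4 = deriv(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def run(initial, n_steps=20000, dt=0.01):
    """Return the full trajectory starting from `initial`."""
    states = [initial]
    for _ in range(n_steps):
        states.append(lorenz_step(states[-1], dt))
    return states


run_a = run((1.0, 1.0, 20.0))
run_b = run((1.0, 1.0, 20.0 + 1e-8))  # tiny change in the starting point

# "Weather": the two trajectories end up far apart.
max_separation = max(math.dist(a, b) for a, b in zip(run_a, run_b))

# "Climate": the long-term average of z is nearly the same in both runs.
mean_z_a = sum(s[2] for s in run_a) / len(run_a)
mean_z_b = sum(s[2] for s in run_b) / len(run_b)

print(round(max_separation, 1), round(mean_z_a, 1), round(mean_z_b, 1))
```

This doesn't settle anything about the real climate. It just demonstrates that "the details are chaotic" and "the averages are well-behaved" are not mutually exclusive.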

Equally, the many concerned citizens claiming that climate isn’t chaotic don’t seem to put forward any evidence either.

Scienceofdoom,

While I’m thinking about it, do you plan to look at the climate derivation processes used by models? That is how they determine climate parameters at particular locations in the future based on their actual modeling of physical parameters such as radiation processes?

I assume there are standard models relating the present values of model parameters to actual climate values at different locations. How accurate are these models? How have they been derived? Will they be accurate in the future?

“The model doesn’t need the infamous ‘flux adjustment’”: would you please be kind enough to post on this one day?

Climate has some very predictable parts- winter, spring, summer and fall are named for a reason.

But what we are worried about is catastrophic changes within those limits.

The only question worth asking with public money and international treaties and imposed changes on industry and lives is that.

If we are facing something- even if human generated forcings are responsible- that is pretty much within ‘normal’, then AGW community demands need to be tabled.

Jerry

I definitely plan to explain some more about the models, how the parameters are derived, how models are validated, and many more interesting aspects of GCMs.

dearieme:

On the “flux adjustment”..

The atmosphere and the oceans interact continuously. In climate models they are really two separate components. For example, in early GCM history an atmospheric model might be run which would simply include a big “slab” for the ocean at a certain temperature, or at a range of temperatures depending on time of year and latitude.

Once the ocean models were further developed and atmospheric and ocean models coupled together, the terminology became AOGCM (atmosphere-ocean GCM).

Many AOGCMs still have a problem. There isn’t an accurate transfer of:

-momentum

-heat

-water

between the two. So this “balancing term”, or “fudge factor” as some might call it, is added to avoid one of the components “drifting off” with too much/too little temperature (and/or momentum and water).
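A toy illustration of the idea, for heat only. Everything in it is hypothetical: the size of the spurious flux, the depth of the ocean layer and the lack of any feedback are assumptions made to keep the example short, and it has nothing to do with how any real AOGCM is coded.

```python
def simulate_ocean_temperature(n_years, flux_bias, flux_adjustment=0.0):
    """Toy ocean "slab" whose temperature responds to a net surface flux.

    flux_bias: spurious heat flux (W/m^2) from the imperfect coupling.
    flux_adjustment: constant correction added to cancel the bias.
    Hypothetical illustration only, not CCSM3 or any real AOGCM.
    """
    seconds_per_year = 3.15e7
    heat_capacity = 4.2e8   # J/m^2/K, roughly a 100 m deep layer of water
    temperature = 15.0      # °C, arbitrary starting point
    for _ in range(n_years):
        net_flux = flux_bias + flux_adjustment
        temperature += net_flux * seconds_per_year / heat_capacity
    return temperature


drifting = simulate_ocean_temperature(50, flux_bias=2.0)
adjusted = simulate_ocean_temperature(50, flux_bias=2.0, flux_adjustment=-2.0)

print(round(drifting, 2), round(adjusted, 2))
```

In this toy, a small persistent mismatch in the heat exchange makes the ocean drift steadily away from its starting temperature, and the "adjustment" is simply the term that cancels the mismatch. It keeps the model stable without fixing the underlying reason for the mismatch.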

Cici, our supermodel, needs no cosmetics in this area..

[…] The paper with the heavy lifting is Radiative forcing by well-mixed greenhouse gases: Estimates from climate models in the IPCC AR4 by W.D. Collins (2006). There’s a lot in this paper and aspects of it will show up in the long awaited Part Eight of the CO2 series and also in Models, On – and Off, the Catwalk. […]

Overall, a good discussion on hindcasting. A few questions:

1. I note that, accidentally or on purpose, you have not mentioned clouds. The models all get the clouds badly wrong, their performance is all over the board, with differing models varying wildly. Now, given that

a) the clouds are critical to how the climate works, and

b) the modelled cloud results are so wrong, and

c) the results are so different between models, and yet despite that

d) the other results are generally fairly good and agree with each other,

what is your explanation of how they all get something like the “right” results using the wrong clouds?

2. None of these models have been subjected to Validation & Verification or SQA (software quality assurance) … why? Every mission-critical piece of software, the programs that run our submarines and elevators and subways and space shots, all of them are subjected to V&V and SQA. Yet people think we should bet billions on unverified, unvalidated models. Why?

3. None of these models have shown that they converge to the solution of the Navier-Stokes equations, nor that they conserve mass and momentum … how can they be trusted since they don’t follow well known physical laws?

4. In the brokerage ads in the US, they always have to put in a disclaimer on the order of “Past successes are no guarantee of future performance”. This applies double with respect to climate models that are tuned to the past. Being tuned to the past, the fact that they can hindcast the past means NOTHING. All of those pretty pictures above mean nothing about whether they can project future climate changes. Zero. Zip. Nada. Despite that, they are routinely used to project climate a hundred years from now. Perhaps you might comment on that?

5. The Constructal Law says that all flow systems far from equilibrium (like the climate) will work to maximize some internal variable. For the climate, this is the sum of work done plus turbulent loss. No climate model that I know of incorporates this well known constraint. Comments?

6. The reason weather models only are good for a few days is that the weather is chaotic. The climate is just as chaotic. So why do people believe that the climate models can project the climate of the next century? They were unable to forecast the current fifteen year hiatus in warming … people say they are not accurate in the short term but are good at the long term, but at what point do they get believable? Twenty five years? Fifty years? Give us a number.

7. The models give very similar results for climate sensitivity, yet they use very different assumptions for the aerosol forcings. How dey do dat? (Hint … tuning …) See Kiehl for details.

So far, all you have done is show hindcasts of models that are tuned to produce those very hindcasts … sorry, color me unimpressed. I can (and have) produced models that could hindcast the stock market with amazing accuracy equivalent to your pictures above … but the fact I’m not rich should tell you exactly what that’s worth. Chaotic systems are extremely difficult to forecast, and prior successes are no guarantee of future performance.

The pictures are pretty, but are you going to post in the rest of the series about some of the real well-known problems with the models? Read Dan Hughes’ excellent blog cited above for many more of these problems …

w.

Willis said

I agree with your statement but there is a big caveat.

Both weather and climate are bounded – not by a hard limit “it can’t get hotter than x” but by a probability density function that resembles a top hat with rounded edges and a tail to infinity – or nearest real-life equivalent.

The models for weather and climate can never be exact in detail but – subject to their almost certainly flawed physics models – do have the same bounded characteristic.

The big argument is whether the models are sufficiently physically accurate – my opinion is no – but then again I have no standing in this matter.

I should perhaps clarify my previous comment.

With weather the issue is short-term. Will it rain or not? How hot will it be? There on the one hand is a mass of historical statistics and on the other Computational Fluid Dynamics. The models take the observed state and project to the future (days) what will happen. In general terms they get it right. In summer you wouldn’t expect snow (unless you live in Calgary 🙂 ). This is a bounded result.

With climate models they are modeling long term physical effects. Agreed they are using historical data to calibrate their models and agreed in statistical terms that sucks. (I also worked as an economics modeler). The difference is they are using some physics to determine their model. The result is a valid forecast using their model – at least in terms of average values and variances. The problem with climate models is whether they are a true model of physical processes. As you pointed out there are a lot of processes that may be poorly modeled – clouds, but more importantly aerosols.

The fact the systems are chaotic is accepted. The real issue is the accuracy of the models and the resultant bounding probability density functions.

Willis Eschenbach:

Thanks for commenting.

Some of the model results are reasonable, some of them are poor. I’m surprised by how poor models are for OLR values geographically. I’m surprised at how poor they are for reflected SW radiation – e.g., “Cici has some large errors, for example, North Africa, Pacific Ocean and the Western Indian Ocean where the model error seems to be up to half of the actual value.” Perhaps some of this is clouds.

“Accidentally or on purpose”?? The approach we take on this blog is to assume good motives, not bad ones. That especially goes for the writer.

By the way, the link you gave didn’t work for me.

Why didn’t I dive into clouds?

I think it’s a big subject. You can’t give a good summary of a massive subject like climate models to a diverse audience in one soundbite. The CO2 series is seven parts (so far). Who knows how many parts climate models will be.

I don’t know. I’m still on part two, there’s lots to come.

I still think you are jumping ahead. On momentum and mass I understand that Cici does conserve mass and momentum (from each element in the grid). Much digging remains to be done of course. Perhaps Cici doesn’t. This is the kind of stuff we will be looking at – if you have knowledge of flaws in Cici go ahead and add some comments.

And perhaps, as in most fluid dynamics, the Navier-Stokes equations will always have to be parameterized.

For once I’m ahead – in Part One, I said “A last comment before we see them on the catwalk – the catwalk “retrospective” – is that models matching the past is a necessary but not sufficient condition for them to match the future. However, it is – or it would be – depending on what we find.. a great starting point.”

I’ve never heard of this law. They didn’t teach it when I was at university. I looked it up on google scholar and I see lots of recent papers by A. Bejan. One paper not by Bejan said: “The constructal law is a self-standing principle, which is distinct from the Second Law of Thermodynamics. The place of the constructal law among other fundamental principles, such as the Second Law, the principle of least action and the principles of symmetry and invariance is also presented. The review ends with the epistemological and philosophical implications of the constructal law.”

I don’t even know what any of that means.

You can see my earlier comment (on this post): If weather is a series of random events around “the average” (which we can call “climate”) then maybe the unpredictability of weather, which everyone subscribes to, averages out to something non-chaotic.

I’m not saying it does. I’m just putting it forward as a possibility that should be considered. Weather is chaotic. Is climate chaotic? Perhaps yes, perhaps no. But just because weather is chaotic I don’t think we can assume that climate is chaotic without more information.
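To make the "averages out" possibility concrete, here is a minimal sketch. It assumes exactly what is in question, namely that "weather" is independent random noise around a fixed average. The numbers (an average of 15.0, noise of 5.0, windows of 30 and 120 samples) are made up for illustration and come from no model or dataset.

```python
import random
import statistics

def spread_of_window_means(window, n_trials=2000, seed=0):
    """Standard deviation of the mean of `window` noisy samples, over many trials."""
    rng = random.Random(seed)
    means = []
    for _ in range(n_trials):
        # Toy "weather": a fixed average of 15.0 plus independent Gaussian noise.
        samples = [15.0 + rng.gauss(0.0, 5.0) for _ in range(window)]
        means.append(sum(samples) / window)
    return statistics.pstdev(means)

spread_30 = spread_of_window_means(30)
spread_120 = spread_of_window_means(120)
print(spread_30, spread_120)  # the longer window should scatter roughly half as much
```

Under that assumption the scatter of the average shrinks roughly as one over the square root of the window length. If the real system has long-term persistence, this toy says nothing about it.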

Well, it’s a point of view for discussion. I’m trying to see what evidence there is for different points of view..

A subject for a later part in this series. In fact, they give very different results for the value of climate sensitivity, but all calculate a linear sensitivity and so it is assumed by much of the “climate science community” that therefore there can be confidence in the idea of linear sensitivity.. Perhaps this assumption is wrong?

On your last comments, perhaps you have jumped to some conclusion that I haven’t made.

I will try and present the models in a fair light, including their flaws. Hatchet jobs and angel halos should be put to one side.

This kind of assessment takes time.

Willis Eschenbach:

True, it was the lack of just such comments that surprised me.

My apologies. You went on and on about how well the models did with various phenomena. Given that clouds are known to be a huge problem for models, the fact that you didn’t say a single word about them seemed extremely curious. And as you point out below, the omission was not accidental, it was on purpose. My bad, won’t happen again.

Apologies, try this one:

An Overview of the Results of the Atmospheric Model Intercomparison Project (AMIP I)

Yes, but with exactly such a diverse audience, to me you have a responsibility to mention at the outset the big problems. Then you can go on to show how well they do on the other stuff. Otherwise people will read one and two and go “man, the models are really great” and stop there.

Reasonable enough.

Well, depends on what you mean by “conserve”. I’m not that familiar with Cici, but I know that the GISS model E works like this: at the end of each iteration, whatever remains in the way of energy is swept up and spread out evenly around the globe as turbulent heat.

Now you could truthfully say that the energy is being conserved, I mean, none is gained or lost … but in fact, it’s being fudged, not conserved. And since turbulent loss is a key part of natural systems, this is a huge hole in the GISS model.
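As a toy illustration of the kind of fix-up described here (this is not taken from the GISS code, and whether Model E really works this way is the commenter's claim, not something verified), a residual can be spread evenly over equal-area cells like this. The function name and all values are hypothetical.

```python
def spread_residual(cell_energy, expected_total):
    """Add an equal share of the missing (or surplus) energy to every cell."""
    residual = expected_total - sum(cell_energy)
    share = residual / len(cell_energy)
    return [energy + share for energy in cell_energy]

cells = [10.0, 12.0, 9.5, 11.0]   # hypothetical cell energies after a lossy step
expected = 43.0                    # hypothetical total the step should have kept
fixed = spread_residual(cells, expected)
print(sum(fixed), fixed)
```

The global total comes out right by construction, but the correction lands everywhere equally rather than where the loss occurred, which is the distinction between "conserved" and "fudged" being argued over.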

The problem is not the parameterization. It is whether the particular parameterization scheme being used can be shown to converge in the modelled context. This is done for such things as Boeing’s computer models of airplanes, but (AFAIK) has never been done for the GCMs.

Yes, I caught that. But as you said, your “diverse audience” might not. We’re getting into areas of exposition and emphasis rather than science, but it is important. The fact that models can hindcast is an expected result, not a good or meaningful thing.

In addition, there are a couple of very big problems with the hindcasts you show:

1. How many didn’t match the historical data, and ended up on the cutting room floor, never to be seen?

2. How far in advance of the realization shown did the model start? If it started at the start of the year in question, that is a much less meaningful result than if they started five years before.

So this is the “I couldn’t understand it so I can ignore it” defense? Bejan is one of the 100 most cited scientists on the planet. His recent (1995) discovery of the Constructal Law is one of the most important advances in thermodynamics in centuries. It has been applied very successfully in a host of disciplines from biology to engineering. And it is extremely applicable to climate. I strongly suggest that you take the time to learn about it.

My fault, I didn’t cite that one. No less an authority than Mandelbrot shows mathematically that climate is just as chaotic as weather. If it were not, there would be a breakpoint in the fractal dimension at the timescale where it changes from chaotic to non-chaotic … but there isn’t.

Excellent, I look forward to the rest of the series. I think the idea of a linear sensitivity is nonsense, I think sensitivity is a function of (among other things) temperature.

Glad to hear it, because to date it seems like 97% angel halos. And since many people won’t read all of it, that concerns me.

Overall, you have embarked on a most ambitious project. It would be very valuable if you gave a road map at the start, so folks would know that you were showing the best side first and the warts later …

On re-reading my comments, I see that I have been unnecessarily harsh. My apologies, sometimes I let my disdain for the Tinkertoy™ models overwhelm me. I wrote my first computer program in 1963, on a computer that took up a small room. I know models and modeling intimately, and I am aware of their limitations. One thing is for sure, they can’t forecast anything out 100 years, that’s a sick joke.

Keep up the good work, and please take my comments in the supportive way in which they are intended.

Interesting article. Calling the CCSM3 “Cici” is rather silly – no-one in the know calls it that, never has.

It’s now an obsolete model with the release of CCSM4, and with the upcoming release of the “CESM1.0” (final name as-yet undecided) in June.

Eschenbach’s comments along the lines of GCMs never being correctly V&V’ed are off; both of you need to look into the work of Steve Easterbrook to correct the misperceptions and outright falsehoods in this area.

Derecho64

A citation would be useful. Thanks.

See Steve’s blog at

http://www.easterbrook.ca/steve

Particularly his posts on climate model software there.

Also see his paper

S. M. Easterbrook and T. C. Johns (2009), Engineering the Software for Understanding Climate Change, Computing in Science and Engineering.

linked at

Easterbrook-Johns-2008.pdf

Willis, I recommend you read some of the relevant literature regarding climate models before putting them in the wastebin so quickly. Being an old programmer doesn’t mean you understand the program in question *a priori*. I recommend you access the source code for either CCSM3 or CCSM4 (both publicly available) and familiarize yourself with them. They aren’t as Tinkertoy as you dismiss them as being.

One point – before starting a run with historical forcings (i.e., ~1850 to ~2010), most climate models are “spun up” for centuries to millennia with fixed pre-industrial forcings. That allows them to mostly equilibrate and knowledge of the internal variability to be gained. They are not started from rest at 1850, or 1980, or 2000.

But, knowing the literature on how climate models are used would tell you that right away.
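The idea of spin-up can be sketched with a zero-dimensional energy balance model, which is nothing like CCSM3 or CCSM4 in complexity. Everything below is an assumption chosen for illustration: the albedo, the effective emissivity, and a heat capacity roughly matching a 50 m ocean mixed layer.

```python
SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR = 1361.0            # assumed solar constant, W m^-2
ALBEDO = 0.3              # assumed planetary albedo
EMISSIVITY = 0.612        # assumed effective emissivity (toy value)
HEAT_CAPACITY = 2.1e8     # assumed, roughly a 50 m ocean mixed layer, J m^-2 K^-1
SECONDS_PER_DAY = 86400.0

def spin_up(t_start, n_years):
    """Step a toy global temperature forward daily under fixed forcing."""
    temperature = t_start
    absorbed = SOLAR * (1.0 - ALBEDO) / 4.0
    for _ in range(int(n_years * 365)):
        emitted = EMISSIVITY * SIGMA * temperature ** 4
        temperature += SECONDS_PER_DAY * (absorbed - emitted) / HEAT_CAPACITY
    return temperature

# Analytic equilibrium, where absorbed and emitted energy balance.
equilibrium = (SOLAR * (1.0 - ALBEDO) / (4.0 * EMISSIVITY * SIGMA)) ** 0.25
cold_start = spin_up(250.0, 100)
warm_start = spin_up(320.0, 100)
print(equilibrium, cold_start, warm_start)
```

In this toy, a cold start and a warm start end up at the same temperature after a century of fixed forcing, so the arbitrary starting value no longer matters by the time a historical run would begin. A real coupled model has a deep ocean and far longer adjustment times, hence the centuries to millennia mentioned above.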

Derecho64

Derecho, I recommend that you actually find out what the person you are speaking to has done before being foolishly patronizing … I have studied the models enough to make my head spin. I recommend that you access the source code for looking before you leap and familiarize yourself with it.

Regarding Steve’s blog that you cited, I started with the first item, his reference to Jon Pipitone’s work. It is an interesting MSc thesis … but Pipitone makes no claim that there has been any serious V&V or SQA done on the models. Which is what I had said. Nor does he claim that his work consists of V&V on the models. He says:

This is a worthwhile study … but it is not V&V by any stretch of the imagination. And it doesn’t report the results of any V&V.

As one of many examples, he is looking to find out which “quality concepts” capture the quality that is “relevant to climate scientists” … call me crazy, but I want the “quality concepts” relevant to the truth, not the ones that are relevant to Gavin Schmidt.

Next, I had already read Easterbrook and Johns. It is not a V&V study either (although it is an interesting study, and I got a lot of good laughs out of their naiveté). They describe it as “an ethnographic study of the culture and practices of climate scientists at the Met Office Hadley Centre”. They claim that the scientists have their own methods of V&V … but since V&V is a rather mature field of study, and the scientists are using untested “V&V” methods rather than the methods that have been proven to work, I can’t say I was impressed that E&J agreed so readily with the scientists.

They say the scientists are doing “V&V” in three ways. First, by looking at the output and seeing whether it agrees with their preconceived ideas of what the output looks like. Second, by “turning off” new sections of code for “bit comparison testing”. Third, by comparing the output of their model with other models.
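For readers unfamiliar with the second item, here is a minimal sketch of what a bit comparison test could look like. It assumes a toy model step with a new scheme behind a switch. The function names and numbers are hypothetical and are not taken from any Met Office code.

```python
import struct

def baseline_step(state):
    """Reference version of a toy model step (periodic neighbour averaging)."""
    n = len(state)
    return [0.5 * (state[i] + state[(i + 1) % n]) for i in range(n)]

def modified_step(state, use_new_scheme):
    """The same step with a hypothetical new scheme behind a switch."""
    n = len(state)
    out = [0.5 * (state[i] + state[(i + 1) % n]) for i in range(n)]
    if use_new_scheme:
        out = [x + 1e-12 * x for x in out]  # stand-in for new physics
    return out

def run(step_fn, n_steps):
    """Run the toy model and return the final state as raw IEEE-754 bytes."""
    state = [280.0, 285.5, 290.25, 275.125]
    for _ in range(n_steps):
        state = step_fn(state)
    return struct.pack(f"{len(state)}d", *state)

reference = run(baseline_step, 50)
switched_off = run(lambda s: modified_step(s, False), 50)
switched_on = run(lambda s: modified_step(s, True), 50)

bit_identical_when_off = reference == switched_off
differs_when_on = reference != switched_on
print(bit_identical_when_off, differs_when_on)
```

A test like this shows only that adding the new code did not disturb the old behaviour when switched off. It says nothing about whether either version is physically right.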

If you agree with Easterbrook and Johns that those steps comprise V&V, I fear I don’t know what to say. But looking at the output and seeing whether it fits your preconceptions hasn’t found its way into proper V&V yet, and likely never will.

For another example, the authors say (emphasis mine):

So if the model is going off course regularly because it is not conserving mass (not conserving mass???), you just push it back on course, rather than fix the underlying defects … and you agree with Easterbrook and Johns when they call this “V&V”?

And since the Hadley scientists haven’t done any proper V&V testing, how do they know that this process catches “most errors prior to release”? If you are just making “periodic corrections” you haven’t fixed the error at all. And worse than that, you don’t know what caused the error, or how far it may run, or what other effects it may be having … but no worries, because E&J assure us that they have caught “most errors” … oh, except for the error leading to mass not being conserved …

The idea that what the Hadley scientists are doing constitutes V&V is a sick joke. They are just pushing the model back onto the path that they “know” is the right one, and considering the error fixed, whether or not mass is conserved.

And you want to lecture me on V&V?

Next, if you think that the ideals that the Hadley guys sold to Easterbrook and Johns are what actually happens in the field, you desperately need to take a look at the “Harry Read Me” file for an example of real-world programming regarding a much simpler problem …

Finally, you still have not pointed us to a single V&V study done on a climate model. Not one. Your claim was that:

Instead, you have given us Easterbrook’s attempt to claim that what the Hadley scientists are doing constitutes V&V, but that doesn’t even pass the laugh test. V&V is a rigorous process that doesn’t allow for incorrect programs to be just nudged back into line. The cites you have given have nothing to do with V&V.

Derecho64

Well, since I know that, and have known that for years, and have analyzed their performance while they are being spun up, and have debated with AGW supporters about their performance while they are being spun up … what on earth makes you get all snooty about your fatuous claim that I don’t know that? Where did I claim that they were “started from rest”?

I know to whom I’m talking.

What has your V&V analysis of CCSM3 shown? It’s been public for nearly six years now – plenty of time to rip into it and show those climate scientists where they’ve gone wrong.

I’d be interested in reading what a real engineer has done to analyze and report on the quality of the software in climate models, rather than handwaving and digs at specific individuals.

Besides, scientifically correct trumps some narrow definition of software correctness. I’d rather have software get the right answer than the wrong answer. Certainly you can pick at the code, but unless you have a good and thorough understanding of the problem realm, you’re really not capable of good understanding of the code and analyzing it.

If you can keep the personal commentary and *ad hominem* attacks out of it, that would be nice.

Derecho64

You keep going off on these tangents. I never said that I have done a V&V analysis of CCSM3, that’s your fantasy. Stick to facts.

Derecho64

“Digs at specific individuals”? You referred me to a couple of citations. I found them lacking. Says nothing about the individuals.

Nor did I do any “handwaving”. I specified the problems that I found and the objections I had to their work, which you have ignored completely … who is handwaving here?

For a look at what a real engineer says about the model software, see Dan Hughes’ blog here, or here, or here.

Derecho64

You have referred me, with a glowing recommendation, to the E&J paper which describes a model that doesn’t correctly calculate the conservation of mass, and thinks it is “V&V” to just nudge it back on line. So I’m not sure what you mean by “scientifically correct” …

Now I, like you, would rather have the software give the right answer … like, say, be able to forecast that we’d have a fifteen year hiatus in the warming. Unfortunately, not one of the many models saw that coming. So what “right answer” are you talking about?

Please don’t tell me that you are impressed that a model tuned to the past can hindcast the past …

I’m not impressed by Hughes, or Motl, or the standard contrarian canards. I’m also not impressed by digs at Schmidt or material lifted from the hacked CRU files.

There’s probably a nice set of papers that could come out of your ideas about the software of climate models, but refusing to get in the game and instead engaging in nothing more than Monday-morning quarterbacking, based on incomplete knowledge, just isn’t important.

Derecho64

Impressed by Motl? What on earth does whether you are impressed by Motl have to do with his math? Do you truly think I cited Lubos to impress you? Either Motl’s math is good or it is not, regardless of the level of your impressment.

Since you have raised no objections to Motl’s math, we can provisionally assume that he is correct (until he is shown to be wrong) and move on from there.

And since you think the issue is whether you are impressed by Dan Hughes’ work, and thus you have not raised a single objection to Dan’s work either, we can provisionally assume that Dan is correct (until he is shown to be wrong) and move on from there as well.

Thanks for sharing. If you think Lubos’s math is wrong, please show us where …

Derecho64, regarding E&J’s ideas about V&V, here’s how it works out in the real world. First, here’s how Easterbrook and Johns describe the process:

Next, here’s how it works out in the real world. Gavin Schmidt comments on a change in the GISS Model E climate model here. This is his complete verbatim comment; the ellipses are his.

This is how they handle what E&J call “bit comparison testing” … note that there is an error, wonder about it, and then ignore it.

Is that V&V? Not on my planet … but then they seem to live in Modelworld …

“V&V”

The IPCC does not use the word validation. It uses evaluation instead. There is a reason why climate model runs are not called forecasts but projections and why ensembles of projections are created. The confidence modellers have increases when different models make similar projections and when the outcome is confirmed by comparing the results to the actual climate.

A validation similar to that done for Boeing’s computer models of airplanes isn’t possible. Perhaps the world’s climate is more complicated than an airplane?

Willis quoted

That bit comparison technique is reminiscent of 50’s and 60’s development of numerical software – such as transcendental calculations. The reason it was done there is because you had to guard against microcode changes as well as physical differences between computers.

These days it’s possible to check a CPU against a known test-suite and guarantee that it’s working correctly – however I bet it’s not 100% sampled.

Quite how the bit comparison technique works with models that include pseudo-random components escapes me.
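One way it could work, sketched here as an assumption rather than a description of any actual climate model, is to fix the seed of the pseudo-random generator so the "random" component repeats exactly from run to run. The function below is a hypothetical toy.

```python
import random

def noisy_run(seed, n_steps=100):
    """Toy model with a pseudo-random component driven by a seeded generator."""
    rng = random.Random(seed)
    state = 0.0
    for _ in range(n_steps):
        state = 0.9 * state + rng.gauss(0.0, 1.0)
    return state

same_seed_matches = noisy_run(42) == noisy_run(42)
different_seed_differs = noisy_run(42) != noisy_run(43)
print(same_seed_matches, different_seed_differs)
```

With the seed held fixed, two runs of the same code agree exactly, so a bit-for-bit comparison is still meaningful. Change the seed and it is not.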

I’d suggest that it is used in climate science for exactly the same reason that Fortran is used – because that’s the way it’s always been done. I’d also suggest that it’s high time climate scientists started using proper software tools and V&V checks that weren’t looking for – literally – bugs in the multiply-accumulator sub-rack.

In my view, proper V&V checks should be based on unit testing of code functionality as well as applying unit physics checks.
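As a rough sketch of what a "unit physics check" might mean, here is a conservation test on a toy one-dimensional diffusion step. The scheme, the grid values and the tolerance are all illustrative assumptions and are not drawn from any GCM.

```python
import math

def diffuse(mass, k=0.1):
    """One explicit diffusion step on a periodic 1-D grid, written in flux form."""
    n = len(mass)
    # flux[i] is the mass moved from cell i to cell i+1 during this step.
    flux = [k * (mass[i] - mass[(i + 1) % n]) for i in range(n)]
    return [mass[i] - flux[i] + flux[i - 1] for i in range(n)]

def leaky_diffuse(mass):
    """Deliberately broken variant that loses 0.1% of the mass each step."""
    return [0.999 * x for x in diffuse(mass)]

def check_mass_conserved(step_fn, mass, n_steps=100, rel_tol=1e-10):
    """Unit physics check: total mass must be unchanged after many steps."""
    total_before = math.fsum(mass)
    for _ in range(n_steps):
        mass = step_fn(mass)
    return abs(math.fsum(mass) - total_before) <= rel_tol * abs(total_before)

grid = [1.0, 2.0, 4.0, 8.0, 4.0, 2.0, 1.0, 0.0]
print(check_mass_conserved(diffuse, grid))        # flux-form step passes
print(check_mass_conserved(leaky_diffuse, grid))  # leaky step fails
```

The point of such a check is that it tests the physics of one component in isolation, without needing to know what the "right" model output looks like.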

A question about ensembles.

A model is a theory a la Popper. Using this fact I can see that one would want to use the same model with different sets of inputs with slightly different values to make a prediction. In this case, the slightly different inputs are necessary to simulate the uncertainty in the measurements that produced the input. The measured climate will then be expected to be close to the computed climates.

What I do not understand is why it is possible to mix the results of different models. It would be the same as saying that you get better results if you mix the results of Newtonian and Einsteinian theories of gravity.

In fact, the different models are competing theories a la Popper, and the thing to do would be to try and find out which one is the best.
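The IPCC's "superficial explanation" quoted in the post can at least be illustrated with a toy. It assumes each model's error is independent and scatters symmetrically around the true value, which is precisely the assumption nobody has justified. The ten "models" below are just the truth plus random noise, with made-up magnitudes.

```python
import random

def rmse(estimates, truth):
    """Root-mean-square error of one field against the true field."""
    return (sum((e - t) ** 2 for e, t in zip(estimates, truth)) / len(truth)) ** 0.5

rng = random.Random(1)
n_locations, n_models = 500, 10
truth = [rng.uniform(200.0, 300.0) for _ in range(n_locations)]

# Each hypothetical model is the truth plus its own independent zero-mean error.
models = [[t + rng.gauss(0.0, 10.0) for t in truth] for _ in range(n_models)]

# Multi-model mean: average the models location by location.
ensemble_mean = [sum(column) / n_models for column in zip(*models)]

individual_rmse = [rmse(m, truth) for m in models]
ensemble_rmse = rmse(ensemble_mean, truth)
print(min(individual_rmse), ensemble_rmse)
```

Under that assumption the mean beats every individual model simply because independent errors partly cancel. If the models share a common bias, the averaging does nothing to remove it, and the Newton versus Einstein objection stands.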

Can anyone tell me whether any of the AOGCMs successfully (i.e. realistically) model the annual albedo fluctuation?

As every engineer knows, all models are wrong but some are useful for a purpose. As someone who’s spent a career modeling non-linear systems of 1/100 the complexity of a GCM, color me skeptical that they are useful for the purpose of projections a hundred years hence. If, as is claimed, they can’t make accurate projections on decadal time scales but can on centennial scales, it will be the first such model ever discovered whose uncertainty decreases with time. Integrative models (and one presumes GCMs fall into this category) always diverge due to natural variation. Noise + integration = Random Walk.
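The "Noise + integration = Random Walk" statement can be checked for the pure case with a few lines. This sketch integrates unit-variance white noise and nothing else. Whether a GCM behaves like an unconstrained integrator is the commenter's presumption and is not demonstrated here.

```python
import random
import statistics

def random_walk_spread(n_steps, n_walks=2000, seed=0):
    """Standard deviation across many integrated-noise walks after n_steps."""
    rng = random.Random(seed)
    finals = []
    for _ in range(n_walks):
        position = 0.0
        for _ in range(n_steps):
            position += rng.gauss(0.0, 1.0)  # integrate unit-variance white noise
        finals.append(position)
    return statistics.pstdev(finals)

spread_100 = random_walk_spread(100)
spread_400 = random_walk_spread(400)
print(spread_100, spread_400)  # spread grows roughly as the square root of the step count
```

For a pure integrator the uncertainty keeps growing with time. A system with restoring forces, such as radiation to space increasing with temperature, need not behave this way, which is where the disagreement lies.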

2nd part for Willis and Derecho64 et al

1) Ensemble averaging is a very bad idea. It is akin to a “solicitor’s trick”. While there are some rare occasions where it may have a place, it is entirely inappropriate in such controversial settings, since it gives license for the authors to arrive at any answer they wish, and then with pretence of scientific legitimacy. If I had a PDE/model for a submarine, and another for an air-plane, I could average those to pretend I have a sail-boat, but what would that actually mean?

2) In any case, GCM’s, in the context of insisting on massive upheaval for the planet’s population, are … I am sorry to say, rubbish … and Willis is absolutely correct in that the IPCC models do not have anything that qualifies as (proper) verification etc. … see below.

Not only are they wrong, but they must necessarily be wrong due to fundamental properties of cosmos (not to mention the omission of many very important factors; planetary, geological, solar, deep ocean, etc etc).

… as a side issue, relying on the notion that bigger/more complex code must be better is something of a non sequitur.

Just two of the fatal problems with IPCC GCM’s are:

a) Volcanoes are very important. If you look just at Fig 8.1 AR4 (or Fig 9.8 AR5), the volcanoes in the last 100 years account for 1 – 2 C of cooling, and that is when the net warming was 0.7C/100 years.

None of the models can account for volcanoes, at least not without cheating. I trust we all agree that no one can predict volcanoes … so how did all those IPCC models get all the volcanoes exactly right in their back-test (1900 – 2000)? They did it by cheating, or perhaps they secretly invented a time machine.

If you remove the “volcano cheating” from the back-test, their models would have on the order of 2C in error, just for the past 100 years. That is a massive error … particularly if you are insisting that a 2C rise is already enough to spell doom & gloom.

… and especially if they are to insist for the entire population of the planet to undertake a massive upheaval on that type of (flaky) prognostication.

Put differently, there is no way they can account for volcanoes in the future, so there is little credibility for their forecasts out to 2100 (they couldn’t even get the last 15 years right, and now they are whinging that it was due, in part, to volcanoes … they certainly do have balls).

b) Even worse, none of their modelling has accounted for non-linear dynamics (chaos, etc.). As such, we don’t even know if the Sensitivity to Initial Conditions (SIC) and to Parameters (SP) has fundamental pathologies, as it is entirely likely that the nature of the space-time continuum makes any such type of forecasting fundamentally impossible (no matter how many math-geeks or supercomputers you throw at it).

Of course, the models also do not at all or nearly account for other massive forces (solar, planetary, geological, deep ocean cycles, etc), and make quite a number of questionable assumptions even within what they aim to cover (e.g. controversies over RF, etc etc).

… just to save Willis et al some time, my area of expertise is mathematical modelling, PDE’s, non-linear dynamics etc … and I’ve been doing this for decades … of course I am not infallible, and I would be happy to update my position if anyone provides real math/science/equations/data etc to show differently.

… and yes, I have gone through maths/docs for the “cici’s”, and have literally walked through both of the dloadable GISS models, they are not what some seem to assume them to be (very expensive/fancy toys, yes, … reason for screwing over the population of the planet, NO) ….and I have written a few hundred k-line models/solvers on my own.

PS. I composed a short note on the volcano issue including the charts, and short very pedestrian explanation of SIC and SP (lots of pictures, only one simple equation) … dloadable here (http://www.thebajors.com/climategames.htm).

May I ask you if you could give me the source for the excellent DTR figure you have used above (Annual average of diurnal temperature range over land). I have been trawling the IPCC website and reports for it, but have had no luck.

Many thanks

David,

Sorry, I should have given a better reference.

This is from Chapter 8 Supplementary Materials: Climate Models and Their Evaluation from AR4.

The figure is on page 17: SM.8-17.

Most of the graphs reproduced are from the Supplementary Material.

@ SOD: Above, you reference the “Anglotzen” method for determining error in models. I’m not familiar with the term and can’t find it online (sorry).

Is that just a tongue-in-cheek term for visual estimation, or is that a real thing?

Jeff,

Sorry – for some reason your comment got stuck in moderation, don’t know why.

It’s over 6 years since I wrote this. I vaguely remember it being tongue in cheek, derived from something German. Let’s put it like this – it definitely wasn’t a serious statistical method.