In the last article – Opinions and Perspectives – 3 – How much CO2 will there be? And Activists in Disguise – one commenter suggested that RCP8.5 was actually “business as usual” and put forward some comments. So I’m posting this follow-up to identify some key points in that scenario, before going on to the next topic.

I’m trying to keep this series of articles brief and as non-technical as possible, but this one has to be a little more detailed.

It was excellent to find someone prepared to defend RCP8.5 as “business as usual”. We need more champions of this idea so it can be discussed. What key climate scientists should do is publish a paper titled “Why RCP8.5 is actually business as usual” or “How RCP8.5 has a serious likelihood of becoming reality”, so that it can be discussed out in the open.

Science dies in the darkness.

The brief for the astrologers and soothsayers – no I’m kidding, future forecasters – in preparing a set of scenarios, later the four RCPs, was to produce internally consistent storylines that covered the range of CO2 concentrations (and other GHGs) covered in climate science papers.

From Emissions Scenarios, IPCC (2000):

..A set of scenarios was developed to represent the range of driving forces and emissions in the scenario literature so as to reflect current understanding and knowledge about underlying uncertainties. They exclude only outlying “surprise” or “disaster” scenarios in the literature. Any scenario necessarily includes subjective elements and is open to various interpretations. Preferences for the scenarios presented here vary among users. No judgment is offered in this Report as to the preference for any of the scenarios and they are not assigned probabilities of occurrence, neither must they be interpreted as policy recommendations..

[Emphasis added]

RCP8.5 was included among the four RCPs because some simulations in the literature had covered a quadrupling of CO2 in the atmosphere (from pre-industrial levels). From van Vuuren et al (2011):

By design, the RCPs, as a set, cover the range of radiative forcing levels examined in the open literature and contain relevant information for climate model runs..

[Emphasis added]

Here are a few extracts from Riahi’s 2007 paper, which was the foundation for RCP8.5 in the 2011 paper (scenario A2r is similar to RCP8.5):

The task ahead of anticipating the possible developments over a time frame as ‘ridiculously’ long as a century is wrought with difficulties. Particularly, readers of this Journal will have sympathy for the difficulties in trying to capture social and technological changes over such a long time frame. One wonders how Arrhenius’ scenario of the world in 1996 would have looked, perhaps filled with just more of the same of his time—geopolitically, socially, and technologically. Would he have considered that 100 years later:

- backward and colonially exploited China would be in the process of surpassing the UK’s economic output, eventually even that of all of Europe or the USA?

- the existence of a highly productive economy within a social welfare state in his home country Sweden would elevate the rural and urban poor to unimaginable levels of personal affluence, consumption, and free time?

- the complete obsolescence of the dominant technology cluster of the day – coal-fired steam engines?

How he would have factored in the possibility of the emergence of new technologies, especially in view of Lord Kelvin’s sobering ‘conclusion’ of 1895 that “heavier-than-air flying machines are impossible”?

Also note that an “extremely high emissions scenario” (as I prefer to describe it), could also be constructed in other ways:

For reasons of scenario parsimony, our set of three scenarios does not include a scenario that combines high emissions (and hence high climate change) with low vulnerability (e.g., as reflected in high per capita incomes).

[Emphases added].

Here is the brief summary of the development of the A2 world – basically the RCP8.5 world:

The A2 storyline describes a very heterogeneous world. Fertility patterns across regions converge only slowly, which results in continuously increasing global population. The resulting ‘high population growth’ scenario adopted here is with 12 billion by 2100, lower than the original ‘high population’ SRES scenario A2 (15 billion). This reflects the most recent consensus of demographic projections toward lower future population levels as a result of a more rapid recent decline in the fertility levels of developing countries.

As in the A2 scenario, fertility patterns in our A2r scenario initially diverge as a result of an assumed delay in the demographic transition from high to low fertility levels in many developing countries. This delay could result both from a reorientation to traditional family values in the light of disappointed modernization expectations in this world of ‘fragmented regions’ and from economic pressures caused by low income per capita, in which large family size provides the only way of economic sustenance on the farm as well as in the city.

Only after an initial period of delay (to 2030) are fertility levels assumed to converge slowly, but they show persistent patterns of heterogeneity from high (some developing regions, such as Africa) to low (such as in Europe).

Economic development is primarily regionally oriented and per capita economic growth and technological change are more fragmented and slower than in other [scenarios]. Per capita GDP growth in our A2r scenario mirrors the theme of a ‘delayed fertility transition’ in terms that potentials for economic catch-up only become available once the demographic transition is re-assumed and a ‘demographic window of opportunity’ (favorable dependency ratios) opens (i.e., post-2030).

As a result, in this scenario, ‘the poor stay poor’ (at least initially) and per capita income growth is the lowest among the scenarios explored and converges only extremely slowly, both internationally and regionally. The combination of high population with limited per capita income growth yields large internal and international migratory pressures for the poor who seek economic opportunities. Given the regionally fragmented characteristic of the A2 world, it is assumed that international migration is tightly controlled through cultural, legal, and economic barriers. Therefore, migratory pressures are primarily expressed through internal migration into cities. Consequently, this scenario assumes the highest levels of urbanization rates and largest income disparities, both within cities (e.g., between affluent districts and destitute ‘favelas’) and between urban and rural areas.

Given the persistent heterogeneity in income levels and the large pressures to supply enough materials, energy, and food for a rapidly growing population, supply structures and prices of both commodities and services remain different across and within regions. This reflects differences in resource endowments, productivities, and regulatory priorities (e.g., for energy and food security).

The more limited rates of technological change that result from the slower rates of both productivity and economic growth (reducing R&D as well as capital turnover rates) translates into lower improvements in resource efficiency across all sectors. This leads to high energy, food, and natural resources demands, and a corresponding expansion of agricultural lands and deforestation.

The fragmented geopolitical nature of the scenario also results in a significant bottleneck for technology spillover effects and the international diffusion of advanced technologies. Energy supply is increasingly focused on low grade, regionally available resources (i.e., primarily coal), with post-fossil technologies (e.g., nuclear) only introduced in regions poorly endowed with resources.

[Emphases added]

Events that would prevent RCP8.5 occurring

- If sub-Saharan Africa stays mired in high levels of poverty – then it won’t be burning vast amounts of coal

- If sub-Saharan Africa moves out of high levels of poverty but goes through the “demographic transition” (women having fewer babies), something that has happened in every country so far, with cultures as different as Iran, Thailand and Taiwan – then the population won’t reach anything like 12bn and so it won’t be burning vast amounts of coal

- If sub-Saharan Africa moves out of high levels of poverty and doesn’t go through the “demographic transition” but adopts the latest technology from more advanced countries, which is something that always happens, especially with the internet, but also happened well before it – then the energy efficiency of their economies will be much better than assumed

- If the above but for some reason sub-Saharan Africa doesn’t adopt more efficient technologies but the rest of the world makes lots of natural gas available at attractive prices – then not much coal will be burnt

It is a very unlikely world where RCP8.5 becomes a reality.
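One way to see why each of these events matters is the Kaya identity, a standard decomposition of CO2 emissions into population, income per person, energy used per unit of income, and CO2 emitted per unit of energy. The sketch below is only an illustration: the identity is not used in the papers quoted above, the function name is hypothetical, and every multiplier is an assumed round number, not a value from Riahi et al. The one figure taken from the text is the 12bn population; the 9bn alternative is simply the middle of the 8–10bn range quoted below.

```python
def kaya_emissions(population, gdp_per_capita, energy_per_gdp, co2_per_energy):
    """Kaya identity: emissions = P * (GDP/P) * (Energy/GDP) * (CO2/Energy)."""
    return population * gdp_per_capita * energy_per_gdp * co2_per_energy


# Hypothetical, unitless reference world: every factor is normalised to 1.0.
reference = dict(population=1.0, gdp_per_capita=1.0,
                 energy_per_gdp=1.0, co2_per_energy=1.0)

# Hypothetical variants, each relaxing a single storyline assumption.
variants = {
    # 9bn people instead of 12bn
    "lower_population": dict(reference, population=9 / 12),
    # assumed 30% less energy per unit of GDP (illustrative only)
    "better_efficiency": dict(reference, energy_per_gdp=0.7),
    # assumed 40% less CO2 per unit of energy from gas replacing coal (illustrative only)
    "gas_not_coal": dict(reference, co2_per_energy=0.6),
}

base = kaya_emissions(**reference)
relative = {name: kaya_emissions(**factors) / base
            for name, factors in variants.items()}

for name, ratio in relative.items():
    print(f"{name}: {ratio:.2f} x reference emissions")
```

Because the factors multiply, relaxing any one of them scales total emissions directly. In reality the factors are not independent (a lower population would probably change income per person, for example), so this is a way of framing the argument, not a forecast.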

As a small backdrop, here are the population predictions from Wolfgang Lutz & Samir KC (2010). Lutz is a prolific figure in this field:

The total size of the world population is likely to increase from its current 7 billion to 8–10 billion by 2050. This uncertainty is because of unknown future fertility and mortality trends in different parts of the world. But the young age structure of the population and the fact that in much of Africa and Western Asia, fertility is still very high makes an increase by at least one more billion almost certain. Virtually, all the increase will happen in the developing world. For the second half of the century, population stabilization and the onset of a decline are likely.

[Emphasis added].

For people who don’t know much about development over the 20th century I very highly recommend The Great Escape, by Angus Deaton, Nobel Prize winner in Economics. He also covers the demographic transition.

Why This is Important

Some of the results of climate models for this “extremely high emissions scenario” (my term for it) contain very scary outcomes (I’ll be writing about climate models in this series).

The difference in outcomes (as predicted by models) between RCP8.5 and RCP6 (a more likely “business as usual” scenario) should be highlighted. If policymakers and the public are concerned that we might reach 12bn population in 2100 with sub-Saharan Africa lighting up coal-fired power stations by the thousands then there are some straightforward steps to take.

- Keep the internet going so that technology for energy efficiency is available to sub-Saharan Africa, and provide teams of experts for technology transfer

- Encourage women’s education in that region (as Angus Deaton points out in his book this appears to be the key for the demographic transition)

- Encourage large scale gas extraction so that cheap gas is available instead of coal

I realise this last point goes against everything that climate activists believe in. But it seems like the rational response to the concern over RCP8.5 vs RCP6.

Surely this is why these scenarios were developed – so we can make some attempt to assess the outcomes and costs of different possible futures?

References

Scenarios of long-term socio-economic and environmental development under climate stabilization, Keywan Riahi et al, Technological Forecasting & Social Change 74 (2007)

RCP 8.5—A scenario of comparatively high greenhouse gas emissions, Keywan Riahi et al, Climatic Change (2011)

A special issue on the RCPs, van Vuuren et al (2011)


Opinions and Perspectives – 9 – Pattern Effects

Posted in Commentary on March 31, 2019

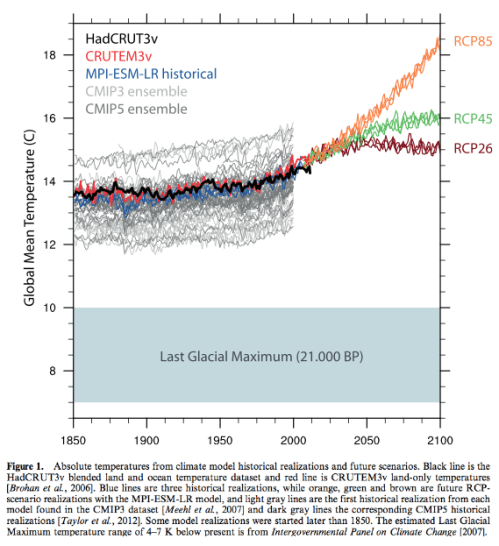

The IPCC 5th Assessment Report (AR5) from 2013 shows the range of results that climate models produce for global warming. These are under a set of conditions which, for simplicity, is a doubling of CO2 in the atmosphere from pre-industrial levels: the 2xCO2 result, also known as ECS or equilibrium climate sensitivity.

The range is about 2-4°C. That is, different models produce different results.

Other lines of research have tried to assess the past from observations. Over the last 200 years we have some knowledge of changes in CO2 and other “greenhouse” gases, along with changes in aerosols (these usually cool the climate). We also have some knowledge of how the surface temperature has changed and how the oceans have warmed. From this data we can calculate ECS.

This comes out at around 1.5-2°C.
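As a rough sketch of how such an observational estimate is usually made, the widely used energy-budget approach scales the observed warming by the ratio of the forcing from doubled CO2 to the net forcing that has actually been realised (forcing change minus ocean heat uptake). The function name and all input numbers below are illustrative assumptions, not values from this article or from any particular study; 3.7 W/m² is the commonly quoted forcing for doubled CO2.

```python
F_2XCO2 = 3.7  # W/m^2, commonly quoted forcing for doubled CO2 (assumed here)


def energy_budget_ecs(delta_t, delta_forcing, delta_heat_uptake, f_2x=F_2XCO2):
    """Energy-budget estimate: ECS = F_2x * dT / (dF - dN).

    delta_t           -- change in global mean surface temperature (C)
    delta_forcing     -- change in radiative forcing (W/m^2)
    delta_heat_uptake -- change in planetary heat uptake, mostly ocean (W/m^2)
    """
    net = delta_forcing - delta_heat_uptake
    if net <= 0:
        raise ValueError("forcing change must exceed heat uptake change")
    return f_2x * delta_t / net


# Illustrative round numbers only, chosen to show the arithmetic.
ecs = energy_budget_ecs(delta_t=0.8, delta_forcing=2.3, delta_heat_uptake=0.6)
print(f"ECS ~ {ecs:.1f} C per doubling of CO2")
```

The estimate is sensitive to the denominator: because aerosol forcing is poorly known, a modest change in the assumed forcing moves the answer a lot. The calculation also assumes that the feedbacks operating over the historical period will stay the same in the future, which is exactly the assumption that pattern effects call into question.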

Some people think there is a conflict, others think that it’s just the low end of the model results. But either way, the result of observations sounds much better than the result of models.

The reason for preferring observations over models seems obvious – even though there is some uncertainty, the results are based on what actually happened rather than models with real physics but also fudge factors.

The reason for preferring models over observations is less obvious but no less convincing – the climate is non-linear and the current state of the climate affects future warming. The climate in 1800 and 1900 was different from today.

“Pattern effects”, as they have come to be known, probably matter a lot.

And that leads me to a question or point or idea that has bothered me ever since I first started studying climate.

Surely the patterns of warming and cooling, the patterns of rainfall, of storms matter hugely for calculating the future climate with more CO2. Yet climate models vary greatly from each other even on large regional scales.

Articles in this Series

Opinions and Perspectives – 1 – The Consensus

Opinions and Perspectives – 2 – There is More than One Proposition in Climate Science

Opinions and Perspectives – 3 – How much CO2 will there be? And Activists in Disguise

Opinions and Perspectives – 3.5 – Follow up to “How much CO2 will there be?”

Opinions and Perspectives – 4 – Climate Models and Contrarian Myths

Opinions and Perspectives – 5 – Climate Models and Consensus Myths

Opinions and Perspectives – 6 – Climate Models, Consensus Myths and Fudge Factors

Opinions and Perspectives – 7 – Global Temperature Change from Doubling CO2

Opinions and Perspectives – 8 – Pattern Effects Primer
