General Circulation Models or Global Climate Models – aka GCMs – often have a bad reputation outside of the climate science community. Some of it isn’t deserved. We could say that models are misunderstood.

Before we look at models on the catwalk, let’s just consider a few basics.

Introduction

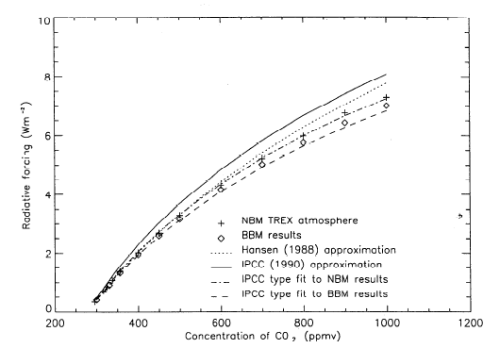

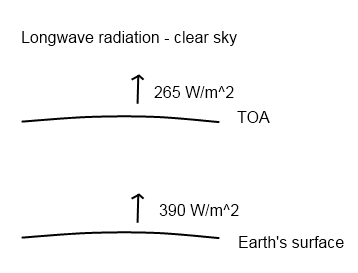

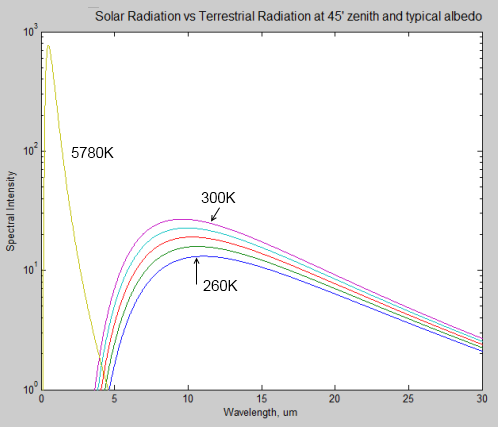

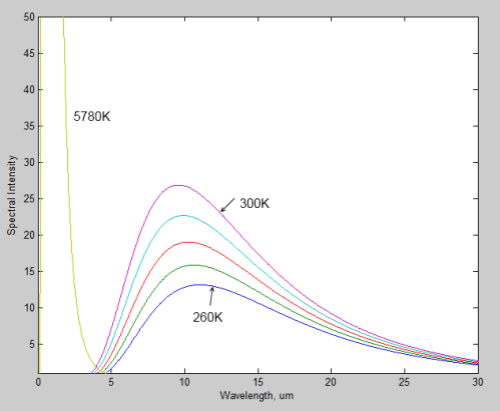

In an earlier series, CO2 – An Insignificant Trace Gas?, we delved into simpler numerical models. These were 1d models, needed to solve the radiative transfer equations through a vertical column of the atmosphere. There was no other way to solve the equations, and that’s the case with most practical engineering and physics problems.

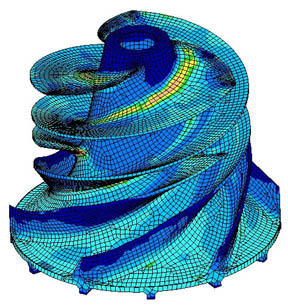

Here’s a model from another world:

Here’s a visualization of “finite element analysis” of stresses in an impeller. See the “wire frame” look, as if the impeller has been created from lots of tiny pieces?

In this totally different application, the difficulty in calculating the mechanical stresses in the unit is that the “boundary conditions” (the strange shape) make it impossible to solve the equations by the usual methods of rearranging and substitution. Instead, the strange shape is divided into lots of little cubes. The equations for the stresses in each little cube are easy to write down, so you end up with thousands of “simultaneous” equations. Each cube sits next to another cube, so the stress on each common boundary must be the same. The computer program uses some clever maths and lots of iterations to eventually find the solution to those thousands of equations that satisfies the “boundary conditions”.

Finite element analysis is used successfully in lots of areas of practical problem solving, many orders of magnitude simpler, of course, than GCMs.
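To make the idea of “lots of little pieces plus lots of iterations” concrete, here is a minimal sketch in Python. It is not finite element analysis of stresses. It is a much simpler stand-in: a 1d rod split into cells, with the two ends held at fixed values (the “boundary conditions”), where each cell must end up as the average of its two neighbours. The function name, the number of cells and the end values are all made up for illustration.

```python
def relax_rod(n_cells=20, left=100.0, right=0.0, tol=1e-9, max_sweeps=100_000):
    """Solve a chain of simultaneous equations by repeated sweeps.

    Each interior cell obeys: value = average of its two neighbours.
    The ends are pinned to `left` and `right` (the boundary conditions).
    This is Gauss-Seidel iteration on a 1d toy problem, used here only
    as an illustration of the iterate-until-consistent approach.
    """
    values = [0.0] * n_cells
    for sweep in range(1, max_sweeps + 1):
        biggest_change = 0.0
        for i in range(n_cells):
            lower = left if i == 0 else values[i - 1]
            upper = right if i == n_cells - 1 else values[i + 1]
            new_value = 0.5 * (lower + upper)
            biggest_change = max(biggest_change, abs(new_value - values[i]))
            values[i] = new_value
        if biggest_change < tol:
            return values, sweep
    raise RuntimeError("iteration did not converge")


if __name__ == "__main__":
    values, sweeps = relax_rod()
    print(f"converged after {sweeps} sweeps")
    print([round(v, 2) for v in values])
```

For this toy problem the exact answer is a straight line between the two end values, so it is easy to check that the iteration has found the right solution. Real problems have no such shortcut, which is the whole point of doing it numerically.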

Uses of Models

One use of models is to predict, no, project, future climate scenarios. That’s the one that most people are familiar with, along with supplying the explanation for recent temperature increases.

But models have more practical uses. They are the only way to provide quantitative analysis of certain situations we want to consider. And they are the only way to test our understanding of the causes of past climate change.

Analysis

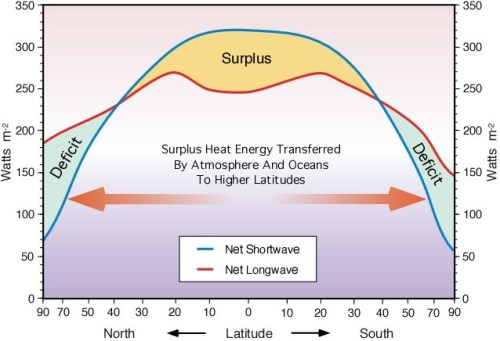

On this blog one commenter asked about how much equivalent radiative forcing would be present if all the Arctic sea ice was gone. That is, with no sea ice, there is less reflection of solar radiation. So more absorption of energy – how do we calculate the amount?

You can start with a very basic idea and just look at the total area of Arctic sea ice as a proportion of the globe, and look at the local change in albedo from around 0.5-0.8 down to 0.03-0.09, multiply by the current percentage area in sea ice to find a number in terms of the change in total albedo of the earth. You can turn that into the change in radiation.
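As a rough sketch of that first, very basic calculation, here it is in Python. The albedo values are midpoints of the ranges quoted above. The sea ice area, the area of the earth and the solar constant are round numbers assumed for illustration, not values from any dataset, and the function name is made up.

```python
EARTH_AREA_KM2 = 510e6      # approximate total surface area of the earth
SEA_ICE_AREA_KM2 = 10e6     # ASSUMED illustrative Arctic sea ice area
SOLAR_CONSTANT = 1361.0     # W/m^2, assumed round value
ICE_ALBEDO = 0.65           # midpoint of the 0.5-0.8 range in the text
OCEAN_ALBEDO = 0.06         # midpoint of the 0.03-0.09 range in the text


def naive_albedo_forcing(ice_area_km2=SEA_ICE_AREA_KM2,
                         ice_albedo=ICE_ALBEDO,
                         ocean_albedo=OCEAN_ALBEDO):
    """Return (change in global albedo, change in absorbed W/m^2).

    Treats the ice-covered area as if it received the global average
    sunlight, which is exactly the simplification the text goes on
    to question.
    """
    area_fraction = ice_area_km2 / EARTH_AREA_KM2
    delta_albedo = area_fraction * (ice_albedo - ocean_albedo)
    global_mean_insolation = SOLAR_CONSTANT / 4.0  # averaged over the sphere
    return delta_albedo, delta_albedo * global_mean_insolation


if __name__ == "__main__":
    d_albedo, d_flux = naive_albedo_forcing()
    print(f"change in global albedo: {d_albedo:.4f}")
    print(f"change in absorbed radiation: {d_flux:.2f} W/m^2")
```

With these inputs the result is a few W/m² averaged over the globe, but the number is only as good as the assumptions that went into it.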

But then you think a little deeper and want to take into account the fact that solar radiation arrives at a much lower angle in the Arctic, so the first number you got probably overstated the effect. So now, even without any kind of GCM, you can simply use the equation for the reduction in insolation due to the angle, θ, between the sun’s rays and the local vertical:

I = S cos θ

where S is the solar radiation on a surface directly facing the sun. Because θ changes with time of day and time of year for any given latitude, you have to plug a straightforward equation into a maths program and do a numerical integration. Or write something up in Visual Basic or whatever your programming language of choice is. Even Excel might be able to handle it.
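The numerical integration can be sketched in a few lines of Python. This is a minimal version under stated assumptions: a solar constant of 1361 W/m², a simple cosine approximation for the sun’s declination through the year, a circular orbit (no change in earth-sun distance) and no atmosphere at all. The function names are made up for illustration.

```python
import math

SOLAR_CONSTANT = 1361.0             # W/m^2, assumed
OBLIQUITY = math.radians(23.44)     # tilt of the earth's axis


def declination(day_of_year):
    """Approximate solar declination (radians); simple cosine fit, assumed."""
    return -OBLIQUITY * math.cos(2.0 * math.pi * (day_of_year + 10) / 365.0)


def cos_zenith(lat, decl, hour_angle):
    """Standard spherical-geometry expression for cos(theta)."""
    return (math.sin(lat) * math.sin(decl)
            + math.cos(lat) * math.cos(decl) * math.cos(hour_angle))


def daily_mean_insolation(lat_deg, day_of_year, steps=288):
    """Average of S*cos(theta) over 24 hours, counting night as zero."""
    lat = math.radians(lat_deg)
    decl = declination(day_of_year)
    total = 0.0
    for i in range(steps):
        hour_angle = -math.pi + 2.0 * math.pi * (i + 0.5) / steps
        total += max(0.0, cos_zenith(lat, decl, hour_angle))
    return SOLAR_CONSTANT * total / steps


def annual_mean_insolation(lat_deg):
    """Average of the daily means over a 365-day year."""
    return sum(daily_mean_insolation(lat_deg, d) for d in range(365)) / 365.0


if __name__ == "__main__":
    for lat in (0, 45, 80):
        print(f"{lat:2d}N: {annual_mean_insolation(lat):6.1f} W/m^2")
```

Comparing the result for a high latitude with the global average used in the first rough estimate gives an idea of how much that estimate overstated the effect.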

This approach also gives the opportunity to introduce the dependence of the ocean’s albedo on the angle of sunlight (the albedo of ocean with the sun directly overhead is 0.03 and with the sun almost on the horizon is 0.09).
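A sketch of how the angle dependence might be folded in, again in Python. The two end-point albedos are the ones quoted above. The straight-line interpolation between them in cos θ is purely an assumption for illustration; the real dependence of ocean reflectivity on angle is not linear. The function names are made up.

```python
ALBEDO_OVERHEAD = 0.03   # sun directly overhead (from the text)
ALBEDO_HORIZON = 0.09    # sun almost on the horizon (from the text)


def ocean_albedo(cos_theta):
    """ASSUMED linear interpolation in cos(theta) between the two end points."""
    c = min(1.0, max(0.0, cos_theta))
    return ALBEDO_HORIZON + (ALBEDO_OVERHEAD - ALBEDO_HORIZON) * c


def insolation_weighted_albedo(cos_thetas):
    """Mean albedo over samples of cos(theta), weighted by incoming sunlight.

    Since I = S cos(theta), each sample is weighted by cos(theta) itself.
    Samples with the sun below the horizon contribute nothing.
    Returns None if the sun never rises in the samples.
    """
    lit = [c for c in cos_thetas if c > 0.0]
    incoming = sum(lit)
    if incoming == 0.0:
        return None
    reflected = sum(c * ocean_albedo(c) for c in lit)
    return reflected / incoming


if __name__ == "__main__":
    low_sun = [0.05, 0.10, 0.20, 0.30, 0.20, 0.10, 0.05]
    print(f"effective albedo for a low sun: "
          f"{insolation_weighted_albedo(low_sun):.3f}")
```

The weighting matters because the moments when the albedo is highest are also the moments when the least sunlight is arriving.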

This will give you a better result. But now you start thinking about the fact that the sun’s rays are travelling in a longer path through the atmosphere because of the low angle in the sky.. how to incorporate that? Is it insignificant or highly significant? Perhaps including or not including this effect would change the “radiative forcing” by a factor of two? (I have no idea).
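One way to get a first feel for the path length question is the flat, “plane-parallel” approximation, where the path through the atmosphere relative to the vertical is 1/sin(elevation of the sun). The sketch below combines that with simple exponential attenuation. The vertical optical depth is a hypothetical input, not a measured value, and the flat approximation overstates the path when the sun is very close to the horizon, where the curvature of the earth matters.

```python
import math


def relative_path_length(elevation_deg):
    """Path through a flat-layer atmosphere relative to the vertical path."""
    if elevation_deg <= 0.0:
        raise ValueError("sun must be above the horizon")
    return 1.0 / math.sin(math.radians(elevation_deg))


def direct_beam_transmission(elevation_deg, vertical_optical_depth):
    """Fraction of the direct beam surviving the slant path.

    `vertical_optical_depth` is a HYPOTHETICAL parameter standing in for
    all absorption and scattering along a vertical path.
    """
    slant_depth = vertical_optical_depth * relative_path_length(elevation_deg)
    return math.exp(-slant_depth)


if __name__ == "__main__":
    for elevation in (90, 30, 10, 5):
        print(f"sun at {elevation:2d} deg: path x{relative_path_length(elevation):5.2f}, "
              f"transmission {direct_beam_transmission(elevation, 0.2):.2f}")
```

Trying a range of optical depths shows whether the effect is likely to be a small correction or a large one, which is the question posed above.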

So if you wanted to quantify the positive feedback effect of melting ice your “model” starts requiring a lot more specifics. Atmospheric absorption by O2 and O3 depending on the angle of the sun. And the model should include the spatial profile of O3 in the stratosphere (i.e., is there less at the poles, or more).

It’s only by doing these calculations that the effect of sea ice albedo can be reliably quantified. So your GCM is suddenly very useful – essential in fact.

Without it, you would simply be doing the same calculations very laboriously, slowly and less accurately on pieces of paper. A bit like how an accounts department used to work before modern PCs and spreadsheets. Now one person in finance can do the job of 10 or 20 people from a few decades ago. Without an accountant someone can just change an exchange rate, or an input cost on a well-created spreadsheet and find out the change in cash-flow, P&L and so on. Armies of people would have been needed before to work out the answers.

And of course, the beauty of the GCM is that you can play around with other factors and find out what effect they have. The albedo of the ocean also changes with waves. So you can try some limits between albedo with no waves and all waves and see the change. If it’s significant then you need a parameter that tells you how calm or stormy the ocean is throughout the year. And if you don’t have that data, you have some idea of the “error”.

Everyone wants their own GCM now..

Of course, in that thought experiment about sea ice albedo we haven’t calculated a “final” answer. Other effects will come into play (clouds).. But as you can see with this little example, different phenomena can be progressively investigated and reasonably quantified.

Past Climate

Do we understand the causes of past climate change or not? Do the Milankovitch cycles actually explain the end of the last ice age, or the start of it?

This is another area where models are invaluable. Without a GCM, you are just guessing. Perhaps with a GCM you are guessing as well, but just don’t know it.. A topic for another day.

Common Misconception

The idea floats around that models have “positive feedback” plugged into them. Positive feedback for those few who don’t understand it.. increases in temperature from CO2 will induce more changes (like melting Arctic sea ice) that increase temperature further.

Unless it’s done very secretly, this isn’t the case. The positive feedbacks are the result of the model’s output.

The models have a mixed bag of:

- fundamental equations – like conservation of energy, conservation of momentum

- parameterizations – for equations that are only empirically known, or can’t be easily solved in the “grid” that makes up the 3d “mesh” of the GCM

More on these important points in the next post.

“Necessary but Not Sufficient”

A last comment before we see them on the catwalk, the catwalk “retrospective”: models matching the past is a necessary but not sufficient condition for them to match the future. However, it is, or would be, depending on what we find, a great starting point.

Models On the Catwalk

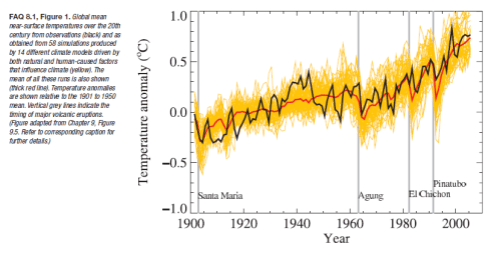

Most people have seen this graph. It comes from the IPCC AR4 (2007).

The IPCC comment:

Models can also simulate many observed aspects of climate change over the instrumental record. One example is that the global temperature trend over the past century (shown in Figure 1) can be modeled with high skill when both human and natural factors that influence climate are included.

In summary, confidence in models comes from their physical basis, and their skill in representing observed climate and past climate changes. Models have proven to be extremely important tools for simulating and understanding climate, and there is considerable confidence that they are able to provide credible quantitative estimates of future climate change, particularly at larger scales. Models continue to have significant limitations, such as in their representation of clouds, which lead to uncertainties in the magnitude and timing, as well as regional details, of predicted climate change. Nevertheless, over several decades of model development, they have consistently provided a robust and unambiguous picture of significant climate warming in response to increasing greenhouse gases.

Now of course, this is a hindcast. Looking backwards. One way to think about a hindcast is that it’s easy to tweak the results to match the past. That’s partly true and, of course, that’s how the model gets improved, until it can match the past.

The other way to think about the hindcast is that it’s a good way to test the model and find out how accurate it is.

The model gets to “past predict” many different scenarios. So if someone could tweak a model so that it accurately ran temperature patterns, rainfall patterns, ocean currents, etc – if it can be tweaked so that everything in the past is accurate – how can that be a bad thing? Also the model “tweaker” can change a parameter but it doesn’t give the flexibility that many would think. Let’s suppose you want to run the model to calculate average temperatures from 1980-1999 (see below) so you put your start conditions into the model, which are values for 1980 for temperature and all other “process variables” and crank up the model.

It’s not like being able to fix up a painting with a spot of paint in the right place. It’s more like tuning an engine and hoping you win the Dakar rally. After you blew the engine halfway through you get to do a rebuild and guess what to change next. Well, analogies are just illustrations..

Obviously, these results would need to be achieved by equations and parameterizations that matched the real world. If “tweaking” requires non-physical laws then that would create questions. Well, more on this also in later posts.

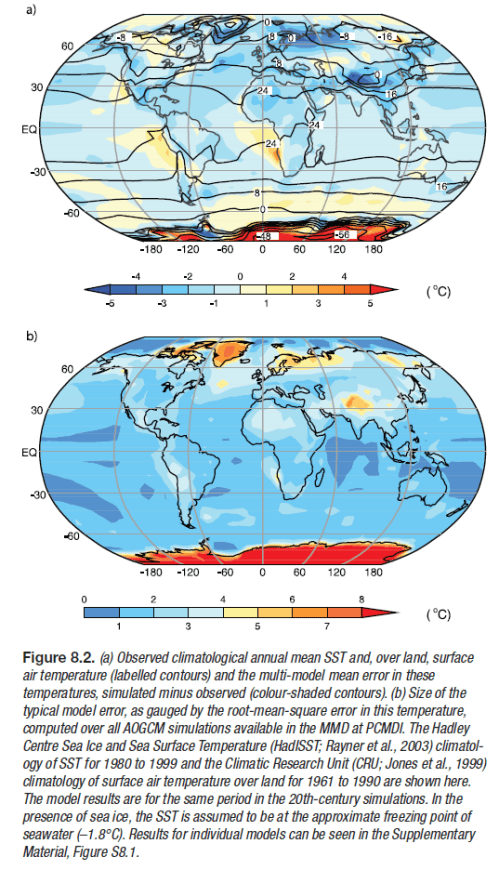

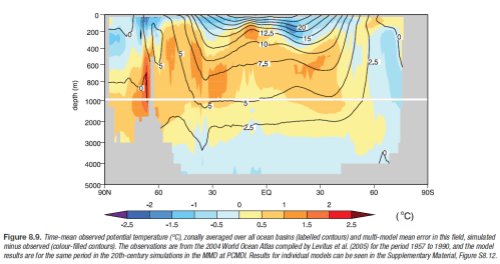

More model shots.. The top graphic is the one of interest. This is actual temperature (average 1980-1999) in contours with the shading denoting the model error (actual minus model values). Light blue and light orange (or is it white?) are good..

The model error is not so bad. Not perfect though. (Note that for some reason, not explained, the land temperature average is over a different time period than the sea surface temperatures.)

Temperature range:

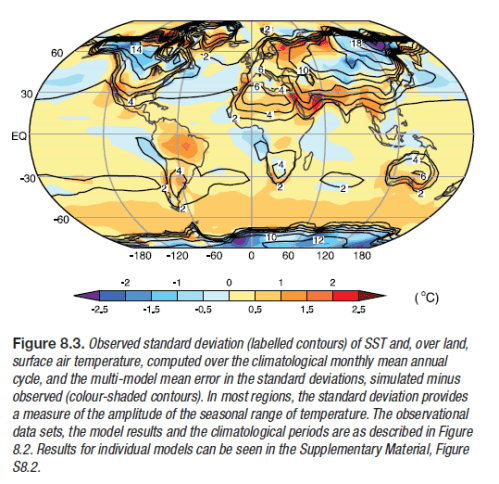

The standard deviation in temperature gives a measure of the range of temperatures experienced. The colors on the globe indicate the difference between the observed and simulated standard deviation of temperatures.

Simplifying, the light blue and light orange areas are where the models are best at working out the monthly temperature range. The darker colors are where the models are worse. Looks pretty good.

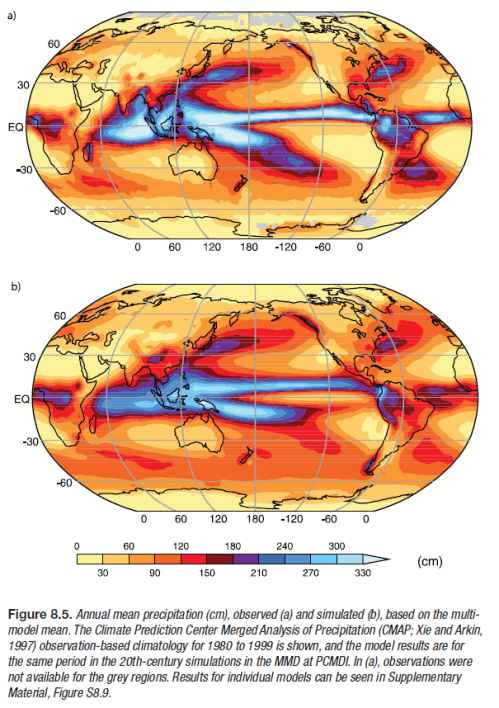

Rainfall:

This one is awesome. Remember that rainfall is calculated by physical processes. Temperature, available water sources, clouds, temperature changes, winds, convection..

Ocean temperature:

Ocean potential temperature, what’s that? Think of it as the real temperature with unstable up and down movements factored out, or read about potential temperature.. Note that the contours are the measurements (averaged over 34 years) and the shaded colors are the deviations of actual – model. So once again the light blue and light orange are very close to reality, the darker colors are further away from reality.

This one you would expect to be easier to get right than rainfall, but still, looking good.

Conclusion

It’s just the start of the journey into models. There will be more. Next we will look at Models Off the Catwalk. So if you have comments it’s perhaps not necessary to write your complete thoughts on past climate, chaos.. Interesting, constructive and thoughtful comments are welcome and encouraged, of course. As are questions.

Hopefully, we can avoid the usual bunfight over whether the last ten years actually match the models’ predictions. Other places are so much better for those “discussions”..

Update – Part Two now published.

New Theory Proves AGW Wrong!

Posted in Commentary on February 26, 2010

I did think about starting this post by pasting in some unrelated yet incomprehensible maths that only a valiant few would recognize, and finish with:

But that might have put off many readers from making it past the equations, which would have been a shame, even though the idea was amusing.

From time to time new theories relating to, and yet opposing, the “greenhouse” effect or something called AGW, get published in a science journal somewhere and make a lot of people happy.

What is the theory of AGW?

If we are going to consider a theory, then at the very least we need to understand what the theory claims. It’s also a plus to understand how it’s constructed, what it relies on and what evidence exists to support the theory. We also should understand what evidence would falsify the theory.

AGW usually stands for anthropogenic global warming, or the idea that humans, through burning of fossil fuels and other activities, have added to the CO2 in the atmosphere, thereby increasing the “greenhouse” effect and warming the planet. The theory also holds that the temperature rise over the last 100 years or so is largely explained by this effect, and that further increases in CO2 will definitely lead to further significant temperature rises.

So far on this blog I haven’t really mentioned AGW, until now. A few allusions here and there. One very minor non-specific claim at the end of Part Seven.

And yet there is a whole series on CO2 – An Insignificant Trace Gas? where the answer is “no, it’s not insignificant”.

The concept that some gases in the atmosphere absorb and then re-radiate longwave radiation is an essential component of AGW. It is one foundation. But you can accept the “greenhouse gas” theory without accepting AGW. John Christy, Roy Spencer, Richard Lindzen and many more are examples.

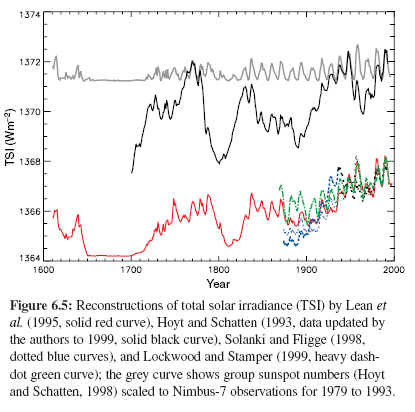

Suppose during the next 12 months the climate science community all start paying close attention to the very interesting theory of Svensmark & Friis-Christensen, who propose that magnetic flux changes from the sun induce cloud formation and thereby change the climate in much more significant ways than greenhouse gases. Perhaps the climate scientists all got bored with their current work, or perhaps some new evidence or re-analysis of the data showed that it was too strong a theory to ignore. Other explanations for the same data just didn’t hold up.

By the end of that 12 months, suppose that a large part of the climate science community were nodding thoughtfully and saying “this explains all the things we couldn’t explain before and in fact fits the data better than the models which use greenhouse gases plus aerosols etc”. (It’s a thought experiment..)

Well, the theory of AGW would be, if not dead, “on the ropes”. And yet, the theory that some gases in the atmosphere absorb and re-radiate longwave radiation would still be alive and well. The radiative transfer equations (RTE) as presented in the CO2 series would still hold up. And the explanations as to how much energy CO2 absorbed and re-radiated versus water vapor would not have changed a jot.

That’s because AGW is not “the greenhouse gas” theory. The “greenhouse gas” theory is an important and essential building block for AGW. It’s foundational atmospheric physics.

Many readers know this, of course, but some visitors may be confused over this point. Overturning the “greenhouse” theory would require a different approach. And in turn, that theory is based on a few elements, each of which is very strong, but perhaps one could fall, or new phenomena could be found which affected the way these elements came together. It’s all possible.

So it is essential to understand what theory we are talking about. And to understand what that theory actually says, and what in turn, it depends on.

A Digression about the Oceans

Analogies prove nothing, they are illustrations. This analogy may be useful.

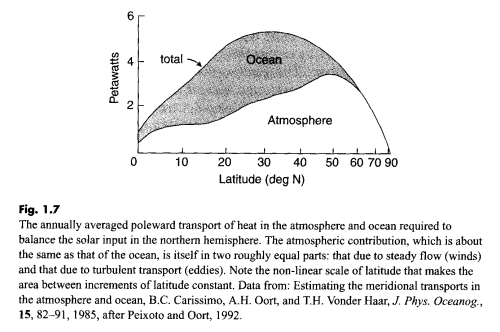

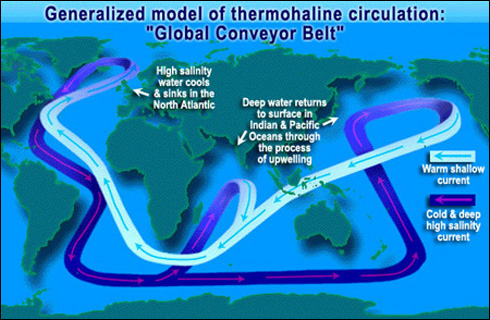

Working out the 3d path of the oceans around the planet is a complex task. You can read a little about some aspects of ocean currents in Predictability? With a Pinch of Salt please.. Computer models which attempt to calculate some aspects of the volume of warm water flowing northwards from the tropics to Northern Europe and then the cold water flowing southwards back down below struggle in some areas to get the simulated flow of water anywhere near close to the measured values (at least in the papers I was reading).

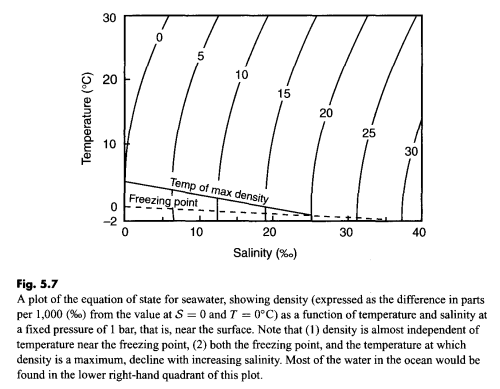

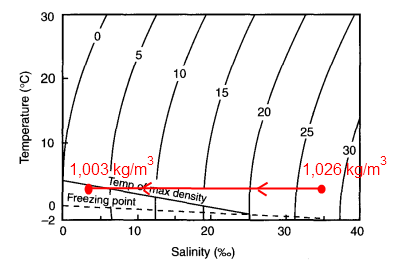

Why is that? The models use equations for conservation of momentum, conservation of angular momentum and density (from salinity and temperature). Plus a few other non-controversial theories.

Most people reading that there is a problem probably aren’t immediately thinking:

Instead many readers might theorize about the challenges of getting the right starting conditions: temperature, salinity, flow at many points in the ocean. Then being able to apply the right wind-drag, how much melt-water is flowing from Greenland, how cold that is.. And perhaps how well-defined the shape of the bottom of the oceans is in the models. How fine the “mesh” is..

We don’t expect momentum and density equations to be wrong. Of course, they are just theories, someone might publish a paper which picks a hole in conservation of momentum.. and angular momentum, well, never really believed in that!

The New Paper that Proves “The Theory” Wrong!

Let’s pick a theory. Let’s pick solving the radiative transfer equations in a standard atmosphere. In layman’s terms this would include absorption and re-radiation of longwave radiation by various trace gases and the effect on the temperature profile through the atmosphere. We could call it the “greenhouse theory”.

Ok.. so a physicist has a theory that he claims falsifies our theory. Has he proven our “greenhouse theory” wrong?

We establish that, yes, he is a physicist and has done some great work in a related or similar field. That’s a good start. We might ask next?

So what we are asking is, has anyone of standing checked the paper? Perhaps the physicist has a good idea but just made a mistake. Used the wrong equation somewhere, used a minus sign where a plus sign should have been, or just made a hash of re-arranging some important equation..

Great, we find out that a journal has published the paper.

Not really. It just proves that the editor accepted it for publication. There might be a few reasons why:

Well, people are people. All we know is one more person probably thinks it is a decent approach to a problem. Or was having an off day.

For a theory to become “an accepted theory” (because even the theory of gravity is “a theory” not “a fact”) it usually takes some time to be accepted by the people who understand that field.

Sheer Stubbornness and How to be Right

The fact that it’s not accepted by the community of scientists in that discipline doesn’t mean it’s wrong. People who have put their life’s work behind a theory are not going to be particularly accepting. They might die first!

How scientific theories get overturned is a fascinating subject. Those who don’t mind reading quite turgid work describing a fascinating subject might enjoy The Structure of Scientific Revolutions by Thomas Kuhn. No doubt there are more fun books that others can recommend.

The new theory might be right and it might be wrong. The fact that it’s been published somewhere is only the first step on a journey. If being published was sufficient then what to make of opposing papers that both get published?

Why Papers which Prove “it’s all wrong” are Celebrated

Many people are skeptical of the AGW theory.

Some are skeptical of “greenhouse gas” theory. Some accept that theory in essence but are skeptical of the amount that CO2 contributes to the “greenhouse” gas effect.

Some didn’t realize there was a difference..

If you are skeptical about something and someone with credentials agrees with you, it’s a breath of fresh air! Of course, it’s natural to celebrate.

But it’s also important to be clear.

If, for example, you celebrate Richard Lindzen’s concept as put forward in Lindzen & Choi (2009) then you probably shouldn’t be celebrating Miskolczi’s paper. And if you celebrated either of those, you shouldn’t be celebrating Gerlich & Tscheuschner because they will be at odds with the previous ones (as far as I can tell). And if you like Roy Spencer’s work, he is at odds with all of these.

Now, please don’t get me wrong, I don’t want to attack anyone’s work. Lindzen and Choi’s paper is very interesting although I had a lot of questions about it and maybe will get an opportunity at some stage to explain my thoughts. And of course, Professor Lindzen is a superstar physicist.

Miskolczi’s paper confused me and I put it aside to try and read it again – update April 2011, some major problems as explained in The Mystery of Tau – Miskolczi and the following two parts. And I thought it might be easier to understand the evidence that would falsify that theory (and then look for it) than lots of equations. Someone just pointed me to Gerlich & Tscheuschner so I’m not far into it. Perhaps it’s the holy grail – update, full of huge errors as explained in On the Miseducation of the Uninformed by Gerlich and Tscheuschner (2009).

And Lindzen and Choi’s is in a totally different category which is why I introduced it. Widely celebrated as proving the death of AGW beyond a shadow of doubt by the illustrious and always amusing debater Christopher Monckton, they aren’t at odds with “greenhouse gas” theory. They are at odds with the feedback resulting from an increase in “radiative forcing” from CO2 and other gases. They are measuring climate sensitivity. And as many know and understand, the feedback or sensitivity is the key issue.

So, if New Theory Proves AGW Wrong is an exciting subject, you will continue to enjoy the subject for many years, because I’m sure there will be many more papers from physicists “proving” the theory wrong.

However, it’s likely that if they are papers “falsifying” the foundational “greenhouse” gas effect – or radiative-convective model of the atmosphere – then probably each paper will also contradict the ones that came before and the ones that follow after.

Well, predictions are hard to make, especially about the future. Perhaps there will be a new series on this blog Why CO2 Really is Insignificant. Watch out for it.
