Recap

This post is a follow-on from my original article: American Thinker – the Difference between a Smoking Gun and a Science Paper.

Gary Thompson, who wrote the American Thinker article that prompted mine, was kind enough to make some comments and clarifications. Instead of responding in the comments to the first article, I thought they were worth a new post, especially for the extra formatting that posts allow and comments don’t.

I appreciate him commenting and I’m sure he has a thick skin, but just in case, any criticisms are aimed at getting to the heart of the matter, rather than at him – or anyone else who has different views.

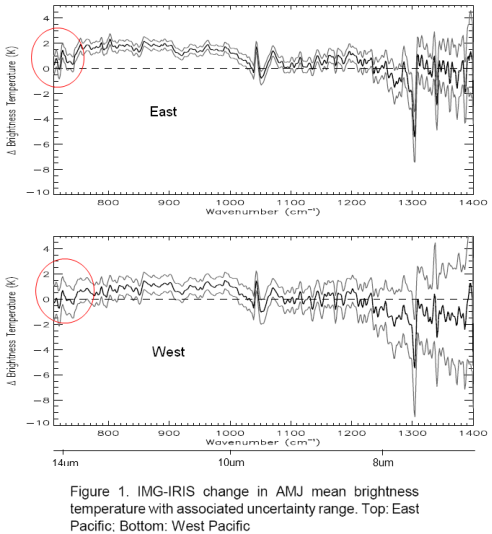

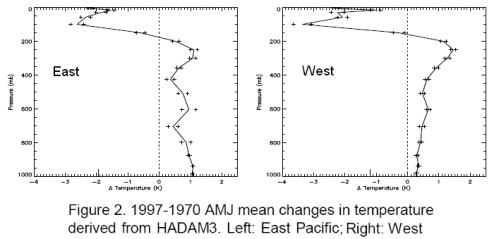

For people who have landed at this post and haven’t read the original: the heart of Gary’s article was three papers, of which I examined one (the first). That paper compared two 3-month periods, 27 years apart, in the East and West Pacific. Gary commented that the actual OLR (outgoing longwave radiation) was higher in the later period in the important CO2 band (or what we could see of it).

His claim – the theory says that more CO2 should lead to less emission at those wavelengths and therefore the theory has been disproved.

My point: Gary doesn’t understand the theory. The temperature was higher in the later period in this region, and therefore the radiation leaving the earth’s surface would be higher. We’ll see this explained again, but did I mention that you should read Gary’s article and my article before moving forward? Gary’s comments are also at the end of the Science of Doom post. It’s always worth reading what people actually wrote, rather than what someone with the opposite point of view (me) highlighted from their words.

The Unqualified Statements in Papers

Gary started by saying:

I know why the authors of the papers were using climate models to simulate the removal of effect from surface temperatures and humidity and that the ‘theory’ says you must do that. But my problem lies in two peer reviewed papers that casts doubt on that theory and that method.

He then cites two papers. The first is a 1998 paper, The Trace Gas Greenhouse Effect and Global Warming, by the great V. Ramanathan (I will continue to call him ‘great’ even though he didn’t reply to my email about his 1978 paper... possibly busy, but still...).

I recommend this 1998 paper to everyone reading this article. Even though it is 12 years old, it is all relevant and a very readable summary.

The Great Ramanathan

Gary pointed out page 3 where statements appeared to back up his (Gary’s) interpretation of the later OLR study. Here’s what Ramanathan said:

Why does the presence of gases reduce OLR? These gases absorb the longwave radiation emitted by the surface of the earth and re-emit to space at the colder atmospheric temperatures. Since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere. The fundamental cause for this trapping is that the surface is warmer than the atmosphere; by the same reasoning decrease of temperature with altitude also contributes to the trapping since radiation emitted by the warmer lower layers are trapped in the regions above.

By deduction.. an increase in a greenhouse gas such as CO2 will lead to a further reduction in OLR. If the solar absorption remains the same, there will be a net heating of the planet.

Gary commented on the last part of this:

Notice there is no clarifying statement about having to use model simulated graphs to ‘correct’ for surface temperatures and water vapor before seeing that OLR reduction.

And on the first part:

“since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere.” – here the author stated clearly that even taking into account higher emissions from warmer surfaces, the net will still be a reduction.

Half the readers here are shaking their heads. But for Gary and the other half of the readership, let’s press on.

First of all, take any 1000 papers on the “greenhouse” effect, whether on observations, models, theoretical adjustments or impacts on GCMs. I bet at least 700 to 900 of them contain a statement that could be pulled out, with no “clarifying statement”, as one that “casts doubt” on the theory. Perhaps 1000 out of 1000.

Context, context, context as they say in real estate.

Where to begin? Let’s look at “the theory” first. And then come back and examine Ramanathan’s statements.

The Theory

There are a few basics. For newcomers, you can take an extended look at the theory in CO2 – An Insignificant Trace Gas? It’s in seven parts! Actually it’s a compressed treatment.

This in itself is a clue.

In the books I have seen on atmospheric physics, many tens of pages are devoted to radiation, including absorption and re-radiation (the “greenhouse” effect), and many tens of pages are devoted to convection. Understanding the basics is critical.

For Gary and his followers, the theory is on a precipice and these papers are giving us that clue. For people who’ve studied the subject, the theory of the “greenhouse” effect is as solid as the theory of angular momentum, or the 2nd law of thermodynamics (the real one, not the imaginary one).

As Ramanathan says in the same paper Gary cites:

It is convenient to separate the greenhouse effect from global warming. The former is based on observations and physical laws such as Planck’s law for black-body emission. The concept of warming that results from the greenhouse effect, is based on deductions from sound physical principles. Numerous feedback processes, determine the magnitude of the warming; these feedbacks are treated with varying degrees of sophistication in GCMs and other climate models. As a result, predictions of the magnitude of the warming are not only model dependent but are subject to large uncertainties..

If I can paraphrase:

Greenhouse effect – dead solid. Global warming – lots of factors, need GCMs, pretty complicated.

Perhaps he has not realized that his words in the same paper combined with experiments demonstrate a flaw in the theory of the dead-solid “greenhouse” effect..

Back to the theory, but first..

A Quick, possibly Annoying, Diversion to the Theory of Gravity

But before we start, I thought an analogy was worthwhile. Analogies are illustrations, not proof of anything. They can often inflame an argument, but that’s not the intention here. Many people who are still undecided about the amazing theory of the inappropriately named “greenhouse” effect might welcome a break from thinking about it.

The theory of planetary movements is my analogy. I picture a world where gravity, and its effects on the planets orbiting the sun, is strangely controversial. A concerned citizen, leafing through some fairly recent scientific papers, notices that planets don’t really go around the sun in ellipses as the theory claims. In fact, there are some quite odd movements. And so, surprised that the scientists can’t see what’s in plain sight, this citizen draws attention to them.

When some detail-orientated commenters point out that the theory is actually (in part):

$$F = \frac{GMm}{r^2}$$

where F is the force between two bodies, G is the gravitational constant, M and m are the masses of the two bodies and r is the distance between them

And the ellipse idea is just a handy generalization of the results of the laws of gravitational attraction.

And the reason why some planetary movements recently measured don’t follow an ellipse is because a few planets are a bit closer together, there’s a large asteroid flying between them and so when we do the maths it all works out pretty well.

The original concerned citizen then pulls out a few papers where, in the introduction, gravity is explained as the force that produces elliptical movements in the planets, with no disclaimers about $F = GMm/r^2$, and claims that this theory is, therefore, under question.

Why this annoying analogy? Most “theories” in physics are developed because of some observations which get analyzed to death – and finally someone produces a “comprehensive theory” that satisfies most of the relevant scientific community. The paper with the comprehensive theory usually contains some equations, some observations, some matching of the two – but often in common everyday usage the shorthand version of the theory is used.

“The theory of gravity tells us that planets orbit the sun in an elliptical manner, and ..”

So much more tedious to keep saying $F = GMm/r^2$, and the other formulae...

(I know, I haven’t proved anything, but maybe a few readers can take a moment and see a parallel..)

Back to the Radiative-Convective Theory

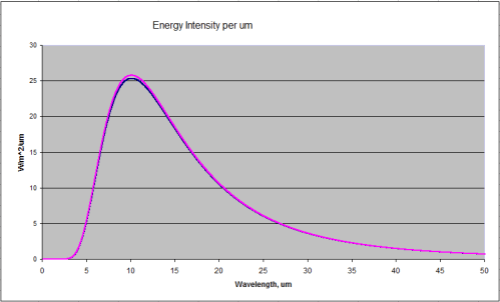

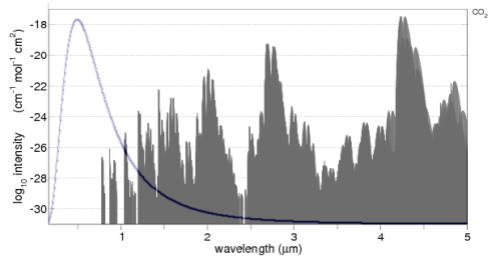

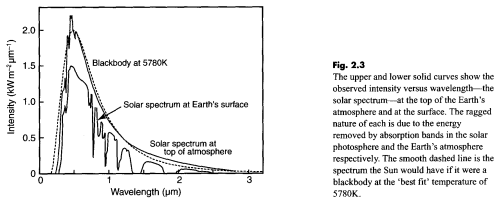

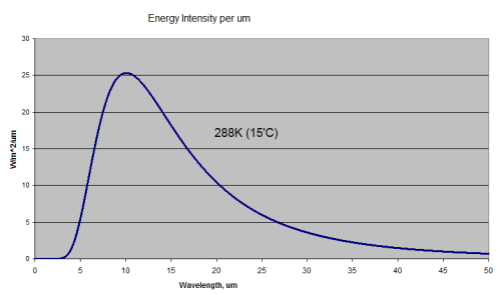

First, bodies radiate energy according to Planck’s formula, which looks complicated when written down. The Stefan-Boltzmann law simplifies the idea: the total energy radiated is proportional to the 4th power of temperature.

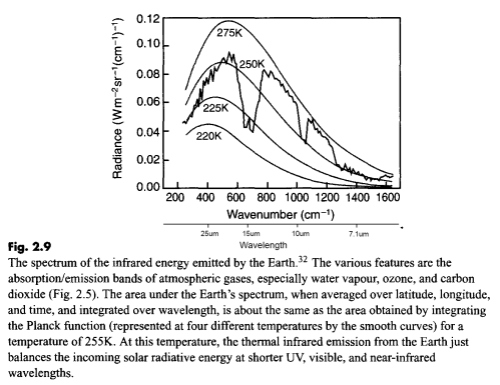

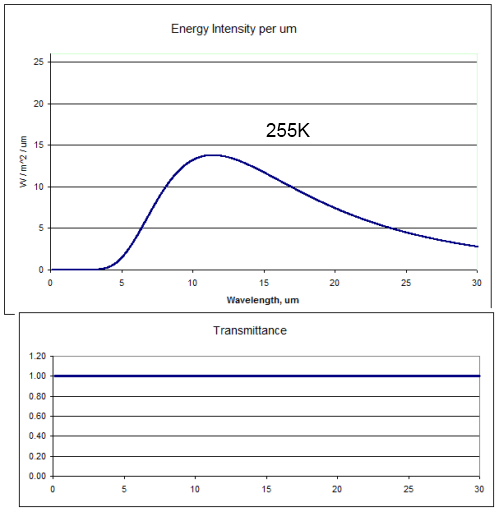

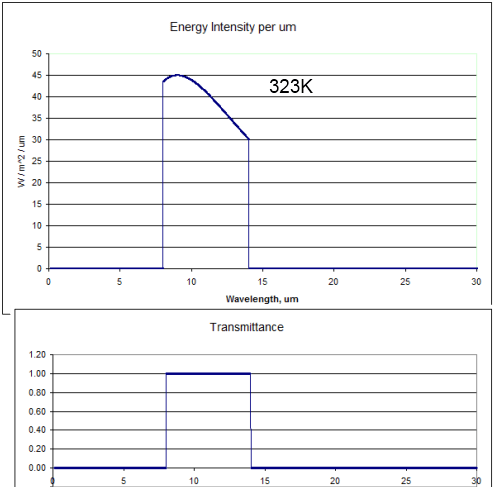
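For anyone who wants to check the totals quoted for the graphs, here’s a minimal Python sketch of the Stefan-Boltzmann law. The constant is the standard value of σ and the two temperatures are the ones used in this post. It’s just arithmetic, not a model of anything.

```python
# Stefan-Boltzmann law: total power radiated per unit area by a blackbody.
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def blackbody_flux(temp_k: float) -> float:
    """Total energy radiated per square metre (W/m^2) at temperature temp_k (kelvin)."""
    return SIGMA * temp_k ** 4


for t in (288.0, 289.0):
    print(f"{t:.0f} K -> {blackbody_flux(t):.1f} W/m^2")

# A 1 K rise is about 0.35% in temperature; the 4th power makes the
# fractional rise in radiated energy roughly four times larger.
ratio = blackbody_flux(289.0) / blackbody_flux(288.0)
print(f"fractional increase: {100 * (ratio - 1):.2f}%")
```

Rounded to whole numbers, these are the 390 and 396 W/m² quoted for the 288 K and 289 K curves.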

Temperature goes up, energy radiated goes up (by a larger proportion), and the peak wavelength gets a little shorter. Here’s a graphical look at the Planck formula, which takes away the mathematical pain:

Blackbody Radiation at 288 K and 289 K (15°C and 16°C)

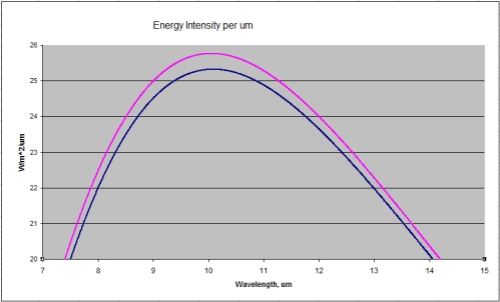

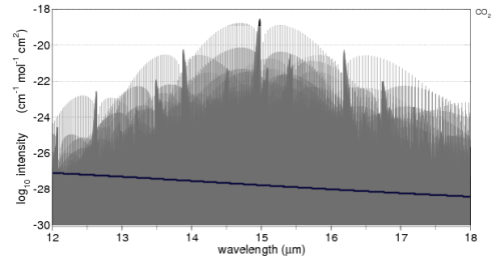

Two curves – 288K and 289K. Now zoomed in a little where most of the energy is:

Close up of the peak energy of 288K and 289K

Total energy in the top curve (289 K) is 396 W/m², and in the bottom curve (288 K) it is 390 W/m².
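If you’d rather see those curves come straight from Planck’s formula, here’s a rough Python sketch that evaluates the blackbody spectrum on a 0.01 μm grid from 1 to 1000 μm and adds it up with the trapezoid rule. The grid and cut-offs are my own choices for illustration; almost all the energy at these temperatures falls inside that range, so the totals should agree with σT⁴ to within a fraction of a W/m². The peak can be compared with Wien’s displacement law, which puts it near 10.06 μm at 288 K and 10.03 μm at 289 K.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K


def planck_exitance(wavelength_um: float, temp_k: float) -> float:
    """Blackbody spectral exitance in W m^-2 per micrometre of wavelength."""
    lam = wavelength_um * 1e-6  # micrometres -> metres
    x = H * C / (lam * K_B * temp_k)
    per_metre = 2 * math.pi * H * C ** 2 / lam ** 5 / math.expm1(x)
    return per_metre * 1e-6  # per metre of wavelength -> per micrometre


def spectrum(temp_k, start_um=1.0, stop_um=1000.0, step_um=0.01):
    """Return (wavelengths, exitance values) sampled on a uniform grid."""
    n = int(round((stop_um - start_um) / step_um)) + 1
    lams = [start_um + i * step_um for i in range(n)]
    return lams, [planck_exitance(lam, temp_k) for lam in lams]


def total_and_peak(temp_k, step_um=0.01):
    """Trapezoid-integrate the spectrum (W/m^2) and find the peak wavelength (um)."""
    lams, vals = spectrum(temp_k, step_um=step_um)
    total = step_um * (sum(vals) - 0.5 * (vals[0] + vals[-1]))
    peak = lams[max(range(len(vals)), key=vals.__getitem__)]
    return total, peak


for t in (288.0, 289.0):
    total, peak = total_and_peak(t)
    print(f"{t:.0f} K: total {total:.1f} W/m^2, peak near {peak:.2f} um")
```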

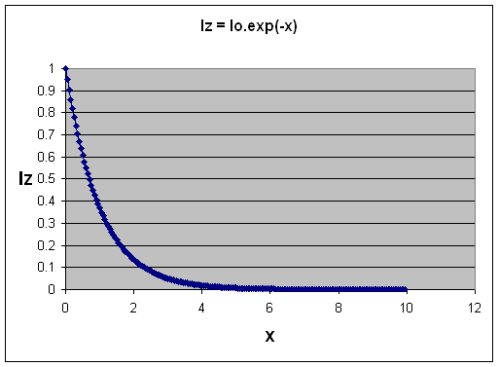

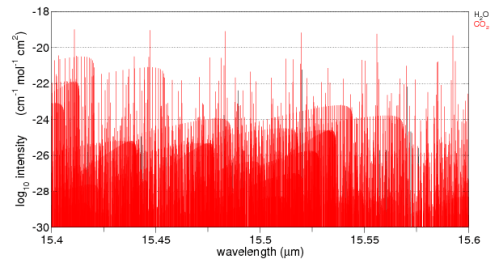

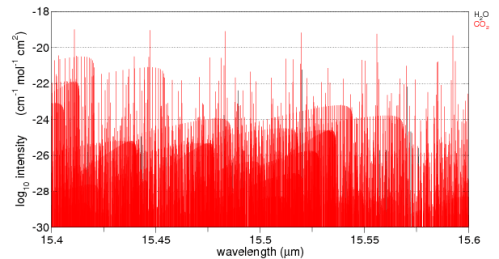

Second, trace gases absorb energy according to a formula which, when simplified, is the Beer-Lambert law.

Absorption of Radiation as "optical thickness" increases

Without going into a lot of maths, as the concentration of a “greenhouse” gas increases, the absorption graph falls off more steeply – more energy is absorbed.

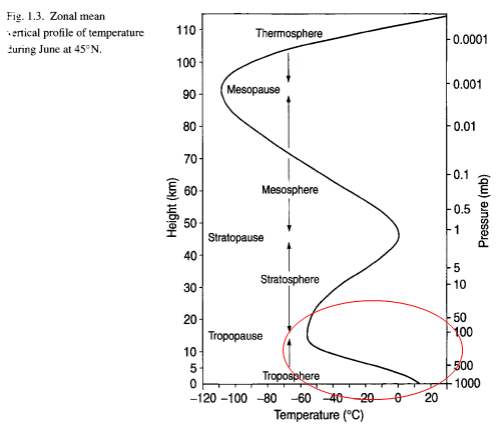
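A minimal Python sketch of the Beer-Lambert idea, assuming (for illustration only) a uniformly mixed absorber, so that optical thickness simply scales with concentration. It prints the fraction of a beam still left after each of ten equal layers; the bigger the total optical thickness, the faster that fraction falls, i.e. the more energy is absorbed.

```python
import math


def transmittance(optical_thickness: float) -> float:
    """Fraction of a beam surviving a path of the given optical thickness (Beer-Lambert)."""
    return math.exp(-optical_thickness)


def transmission_profile(total_tau, n_layers=10):
    """Fraction of the beam remaining after each successive layer of a uniform absorber."""
    dtau = total_tau / n_layers
    return [transmittance(dtau * (i + 1)) for i in range(n_layers)]


for tau in (0.5, 1.0, 2.0):
    profile = transmission_profile(tau)
    print(f"tau = {tau}: " + " ".join(f"{f:.2f}" for f in profile))
```

Note that doubling the optical thickness squares the transmitted fraction: exp(−2τ) = (exp(−τ))².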

Third, re-radiation of this energy takes place according to an energy balance equation that you can see in CO2 – Part Three. The energy balance (or radiative transfer) equations rely on knowledge of the temperature profile in the lower atmosphere (the troposphere), because the actual temperature there is dominated by convection, not radiation. (Radiation still takes place, and the temperature profile is a major factor in the radiation, but radiation is not the primary determinant of the temperature profile.)

When Gary says:

I know why the authors of the papers were using climate models to simulate the removal of effect from surface temperatures and humidity and that the ‘theory’ says you must do that. But my problem lies in two peer reviewed papers that casts doubt on that theory and that method.

It sounds like Gary believes this “model” is some suspicious extra that tries to deal with problems between the theory and the real world. But it’s the foundation. Anyone who had read an introduction to atmospheric physics would understand that. Someone who had tried hard to understand a few papers without a proper foundation would easily miss it.

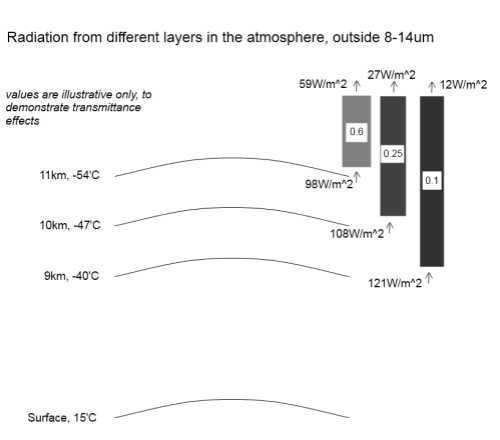

- Higher temperatures increase OLR

- More trace gases reduce OLR in certain wavelengths

Which effect dominates in a particular situation?

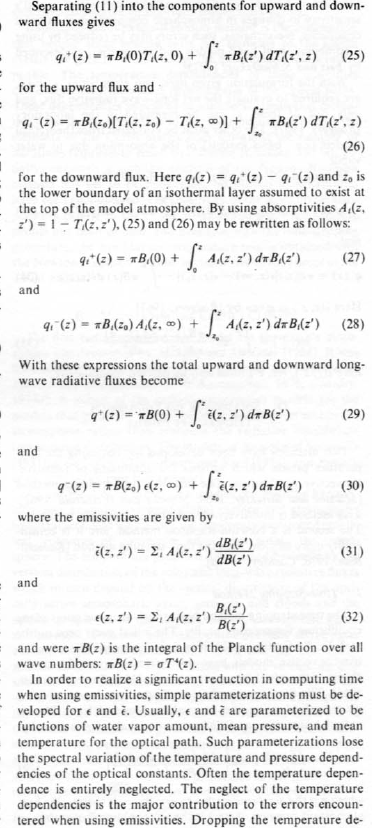
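To make those two competing effects concrete, here’s a toy Python sketch of layer-by-layer absorption and emission in a “gray” atmosphere (same absorption at every wavelength). Every number in it, including the 6.5 K/km lapse rate, the 10 km absorbing depth and the optical thicknesses of 1.0 and 1.1, is an assumption chosen for illustration, not a value from Ramanathan or anyone else. Raising the surface temperature here warms the whole column by the same amount.

```python
import math

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def olr_gray(surface_temp, total_tau, lapse_rate=6.5, depth_km=10.0, n_layers=100):
    """Upward flux (W/m^2) leaving the top of a toy gray atmosphere.

    Hypothetical simplifications: temperature falls linearly with height at
    `lapse_rate` K/km, the absorber is spread evenly over `depth_km`, every layer
    absorbs and emits equally at all wavelengths, and angular effects are ignored.
    """
    dz = depth_km / n_layers
    absorptivity = 1.0 - math.exp(-total_tau / n_layers)  # Beer-Lambert per layer
    flux = SIGMA * surface_temp ** 4  # the surface radiates as a blackbody
    for i in range(n_layers):
        layer_temp = surface_temp - lapse_rate * (i + 0.5) * dz  # mid-layer temperature
        # Each layer absorbs part of the upward beam and, by Kirchhoff's law,
        # emits the same fraction of a blackbody at its own (colder) temperature.
        flux = flux * (1.0 - absorptivity) + absorptivity * SIGMA * layer_temp ** 4
    return flux


base = olr_gray(288.0, 1.0)
cases = {
    "whole column 1 K warmer": olr_gray(289.0, 1.0),
    "10% more absorber": olr_gray(288.0, 1.1),
    "both together": olr_gray(289.0, 1.1),
}
for label, olr in cases.items():
    print(f"{label}: change in OLR {olr - base:+.2f} W/m^2")
```

Warming alone raises OLR and extra absorber alone lowers it (because the absorbing layers are colder than the surface). Which one wins when both happen depends entirely on the numbers fed in, and that is exactly why the equations have to be solved.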

It’s simple conceptually. But if we want exact results, such as which effect dominates in a particular situation, we need a “model”, meaning an equation or set of equations. To quantify the effects we have to solve some tricky equations, which you can see in a paper by... the Great Ramanathan. Well, Ramanathan & Coakley (1978), Climate Modeling through Radiative-Convective Models, a seminal paper. Here’s an extract from p7:

A few equations from Ramanathan and Coakley, p7

Lots of maths. The rest of the paper is similar. Let’s move on to Ramanathan’s much later paper and what he said and meant.

Has Ramanathan given up on The Theory?

Let’s review the words Gary pulled out of the paper:

Why does the presence of gases reduce OLR? These gases absorb the longwave radiation emitted by the surface of the earth and re-emit to space at the colder atmospheric temperatures. Since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere.

Gary reads into this “here the author stated clearly that even taking into account higher emissions from warmer surfaces, the net will still be a reduction”. By this, Gary thinks Ramanathan is saying something like:

..measurements from a specific location at a later date when CO2 has increased will always lead to a reduction in OLR..

-my paraphrase.

But no, Gary has totally misunderstood what the author is saying. Ramanathan is doing a quick drive-through of the basics and explaining how the greenhouse effect works.

Lower temperatures in the atmosphere mean that the radiation to space from this colder atmosphere is lower than the radiation from the surface. (This is the “net trapping”.) Therefore: the “greenhouse” effect. There is no conclusion here that “at all times in all situations, increases in ‘greenhouse’ gases will lead to a reduction in OLR in these bands”. The conclusion is just that “greenhouse” gases make the surface warmer than it would be without them.

Now to Gary’s first claim. Ramanathan said:

By deduction.. an increase in a greenhouse gas such as CO2 will lead to a further reduction in OLR. If the solar absorption remains the same, there will be a net heating of the planet.

Gary said:

Notice there is no clarifying statement about having to use model simulated graphs to ‘correct’ for surface temperatures and water vapor before seeing that OLR reduction.

That’s because the basics of the theory are the solid foundation and don’t need to be restated as qualifiers to every statement. Ramanathan helped write the theory! For people who think that this stuff is just some added extra, read his 1978 paper and see all the maths and the explanations. This is the theory.

Earlier in the same passage, Ramanathan said “Since the emission increases with temperature” but didn’t qualify it with “emission increases in proportion to the 4th power of temperature”.

Is Ramanathan losing confidence in the Stefan-Boltzmann formula? Or Planck? Are these rocks crumbling?

It’s only because Gary has decided that these points are somehow rocky that he reaches these conclusions. (And I could pick tens of other statements in the paper which, without qualifiers, could be taken as the beginning of the end of a specific theory.)

As a general point – when we look at the hypothetical global annual average after “new equilibrium” from increased CO2 is reached – if this new equilibrium exists – the theory (1st law of thermodynamics) predicts that the “new” OLR will match the “old” OLR (global annual average). And in that case the OLR in “greenhouse” gas bands will be reduced a little, while the OLR outside of those bands will be increased a little.

But when we look at one local situation we need the theory, also known as “the model”, also known as equations, to tell us what exactly the result will be.

Ramanathan hasn’t cast any doubt on it. He believes it. His papers from the 1970s to today work it all out. He just doesn’t write qualifiers to each statement as if every line will be read by people who don’t understand the theory..

Gary’s Maths

In Gary’s comment he reproduced his back-of-envelope calculations of how much the surface temperature should have changed over this 27-year period.

He takes the charitable approach of considering a net reduction in OLR from one of the three papers he originally reviewed, if I understood this step correctly. The figure he works with is a 1 K reduction. He then tries to work out what effect this 1 K reduction in OLR in the CO2 band would have on surface temperatures.

Like all good science, this starts on napkins and the backs of envelopes, because everyone studying a problem first has to attempt to quantify it using available data and available formulae. Then, when the first results are worked out and it seems like something new has been discovered (or something old overturned), the scientist, patent clerk or writer has to turn to more serious methods.

In Gary’s preliminary results he shows that a reduction in OLR due to CO2 might have contributed something like 0.3°C to the surface temperature change over 30 years or so, whereas the GISS temperature increase for the period is something like 0.7°C. The essence of the calculation was to compare the Planck function (see the first and second graphs in this post) at two temperatures 1 K apart, then consider how much of the difference falls in the CO2 band and so calculate the approximate change in W/m². Then, by applying a “climate sensitivity” number from realclimate.org, he converted that into a temperature change.
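Here’s a rough Python sketch of the first step of that kind of calculation: the difference between the 288 K and 289 K Planck curves, integrated over a band around 15 μm and compared with the change in total emission. The band limits (13 to 17 μm) and the two temperatures are my assumptions for illustration; I don’t know the exact figures Gary used.

```python
import math

H, C, K_B = 6.62607015e-34, 2.99792458e8, 1.380649e-23  # SI units
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def planck_exitance(wavelength_um, temp_k):
    """Blackbody spectral exitance in W m^-2 per micrometre."""
    lam = wavelength_um * 1e-6
    x = H * C / (lam * K_B * temp_k)
    return 2 * math.pi * H * C ** 2 / lam ** 5 / math.expm1(x) * 1e-6


def band_flux(temp_k, lo_um, hi_um, n=4000):
    """Trapezoid integral of the Planck exitance between lo_um and hi_um (W/m^2)."""
    step = (hi_um - lo_um) / n
    vals = [planck_exitance(lo_um + i * step, temp_k) for i in range(n + 1)]
    return step * (sum(vals) - 0.5 * (vals[0] + vals[-1]))


CO2_BAND = (13.0, 17.0)  # hypothetical band limits around 15 um, for illustration
delta_band = band_flux(289.0, *CO2_BAND) - band_flux(288.0, *CO2_BAND)
delta_total = SIGMA * (289.0 ** 4 - 288.0 ** 4)
print(f"band change {delta_band:.2f} W/m^2 out of {delta_total:.2f} W/m^2 total")
```

Whatever number comes out, it is a change in upward emission, and as the rest of this section argues, that is not the quantity a climate sensitivity should be applied to.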

Radiative physics has the potential to confuse everyone. Perhaps if we consider the “equilibrium case”, one problem with Gary’s calculation above will become clearer.

What’s the equilibrium case? In fact, it’s one generalized result of the real theory. And because it’s easier to understand than $dI = -In\sigma\,dz + Bn\sigma\,dz = (I - B)\,d\chi$ (with $d\chi = -n\sigma\,dz$), people start to think the generalized result under equilibrium is the theory.

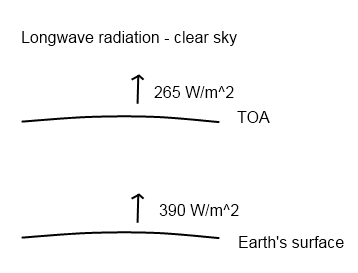

Under equilibrium, energy out = energy in, for the whole climate system. So we calculate the incoming solar radiation that is absorbed, averaged across the complete surface area of the earth: 239 W/m².

So if the planet is not heating up or cooling down, energy out (OLR) must also equal 239 W/m².
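Where does 239 W/m² come from? A quick Python sketch, assuming a solar constant of 1367 W/m² and an albedo of 0.30 (typical textbook values; the post itself only gives the result):

```python
SIGMA = 5.670374419e-8     # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1367.0    # W/m^2 at the top of the atmosphere (assumed value)
ALBEDO = 0.30              # fraction of sunlight reflected (assumed value)

# Sunlight is intercepted by a disc of area pi*R^2 but averaged over a sphere
# of area 4*pi*R^2, hence the factor of 4.
absorbed = SOLAR_CONSTANT * (1.0 - ALBEDO) / 4.0

# At equilibrium OLR must equal the absorbed sunlight. The blackbody temperature
# that would emit that flux is the planet's effective emission temperature.
emission_temp = (absorbed / SIGMA) ** 0.25

print(f"absorbed solar = equilibrium OLR = {absorbed:.1f} W/m^2")
print(f"effective emission temperature = {emission_temp:.1f} K")
```

With these inputs the absorbed flux lands within a fraction of a W/m² of 239, and the matching emission temperature is about 255 K.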

So consider the golden age of equilibrium in 1800 or thereabouts, and assume the above numbers are true for that case. Then lots of CO2 (and other stuff) was added. Let’s suppose CO2 has reached the current level of 380 ppm and stays constant from now on. The downward radiative forcing from the increase in CO2, as calculated at “top of atmosphere”, is 1.7 W/m². And nothing else happens in the climate (“all other things being equal”, as we say).

Eventually we will reach the new equilibrium. It doesn’t matter how long it takes, but let’s pretend it’s 2020. Before equilibrium the planet will be heating up, which means OLR < 239 W/m² (because more energy must come into the planet than leave it for it to warm up). At equilibrium, in 2020, OLR = 239 W/m² once again. (Of course, at wavelengths around 15 μm the energy will be lower than in 1800, and at other wavelengths the energy will be higher.)

At this new equilibrium point we still have a “radiative forcing” of 1.7 W/m², which is why the surface temperature is higher, but there is no change in OLR between the measurements in 1800 and in 2020.

We’ll assume, like Gary, the realclimate.org climate sensitivity. How do we calculate the new equilibrium temperature?

The change in OLR is 0.0 W/m². Therefore, the change in temperature = 0°C. Ha, we’ve proved realclimate wrong. Climate sensitivity cannot exist.

Or, it was wrongly applied.

As trace gases like CO2 increase in concentration they absorb energy. The atmosphere warms up and re-radiates the energy in all directions. Simplifying, we say it re-radiates both up and down. The extra downward radiation is what is used to work out changes in surface temperature. Not the immediate or eventual change in OLR.

The extra downward part is usually called “radiative forcing” and comes with a number of definitions you can see in Part Seven of the CO2 series (along with my mistaken attempt to do a “back of envelope” calculation of surface temperature changes without feedback).

How do we work out the change in surface temperature?

In Gary’s case, what he will need to know is the change in downward longwave radiation. Not upwards.
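To see the whole argument in numbers, here’s a zero-dimensional energy-balance sketch in Python. It is not how GCMs work: it lumps the climate into a single temperature anomaly, and the sensitivity (0.8 K per W/m²) and heat capacity are illustrative assumptions, not realclimate.org’s figures or Gary’s. Only the 239 W/m² and 1.7 W/m² come from the example above. OLR dips below 239 W/m² when the forcing appears and climbs back to 239 W/m² as the planet warms, and the final warming equals sensitivity × forcing, not sensitivity × change in OLR (which ends up as zero).

```python
ABSORBED_SOLAR = 239.0  # W/m^2, from the example in the post
FORCING = 1.7           # W/m^2, from the example in the post
SENSITIVITY = 0.8       # K per W/m^2: illustrative assumption, not a quoted value
HEAT_CAPACITY = 8.0     # W yr m^-2 K^-1: hypothetical, only sets how fast we get there


def run(years=200.0, dt=0.1):
    """Step a zero-dimensional energy balance; return a list of (warming K, OLR W/m^2)."""
    warming, history = 0.0, []
    for _ in range(int(round(years / dt))):
        # The forcing lowers OLR; each degree of warming raises it by 1/SENSITIVITY.
        olr = ABSORBED_SOLAR - FORCING + warming / SENSITIVITY
        history.append((warming, olr))
        # Whatever comes in but doesn't go out warms the system.
        warming += (ABSORBED_SOLAR - olr) / HEAT_CAPACITY * dt
    return history


history = run()
start_olr = history[0][1]
final_warming, final_olr = history[-1]
print(f"OLR just after the forcing: {start_olr:.2f} W/m^2")
print(f"new equilibrium: OLR {final_olr:.2f} W/m^2, warming {final_warming:.2f} K")
print(f"sensitivity x change in OLR: {SENSITIVITY * (final_olr - ABSORBED_SOLAR):.2f} K")
print(f"sensitivity x forcing: {SENSITIVITY * FORCING:.2f} K")
```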

Conclusion

The theory of radiative transfer in the atmosphere is, at its heart, a relatively simple one, but applying it is computationally very challenging. That makes its details hard to grasp intuitively.

The theory isn’t the generalized idea of what will happen when moving from one equilibrium state to another equilibrium state with more “greenhouse” gases. That is a consequence of the theory under specific (and idealized) circumstances.

But because everyone likes shorthand, to many people this has become “the theory”. So when someone applies the maths to a specific situation it is “suspicious”. Maths becomes equated with “models”, which to many people means “GCMs”, which means “make-believe”. I think that if Gary had read Climate Modeling through Radiative-Convective Models by the Great Ramanathan, he might have a different opinion about the later paper and whether Ramanathan is “bailing out” on the extremely solid theory of “greenhouse” gases.

Anyone who has read a book on atmospheric physics would know that Gary’s claim is – no nice way to say it – “unsupported”. Yes – cruel, harsh, wounding words – but it had to be said.

However, most of the readers here and most on American Thinker haven’t read an undergraduate book on the topic. So it makes it worth trying to explain in some detail.

It’s also great to see people trying to validate or falsify theories with a little maths. Working out some numbers is an essential step in proving or disproving our own theories and those of others. In the example Gary provided he didn’t apply the correct calculation. (The subsequent pages of Ramanathan’s 1998 paper also run through these basics).

For those convinced that the idea that more CO2 will warm the surface of the planet is some crazy theory – these words are in vain.

But for the many more people who want to understand climate science, hopefully this article provides some perspective. The claims in American Thinker might at first sight seem to be a major problem to an important theory. But they aren’t.

Note – I haven’t yet opened the Philipona paper, but will do so in coming weeks and probably add another article about it. I didn’t want to leave Gary’s comments unanswered, and this post is already long enough..

Note on a Few Technical Matters

A few clarifications are in order, for people who like to get their teeth into the technical details.

Strictly speaking, at the very top of atmosphere there is no downward longwave forcing at all. That’s because there’s no atmosphere to radiate.

The IPCC definition of “radiative forcing” at “top of atmosphere” is a handy comparison tool of extra downward radiation before feedbacks and before equilibrium of the surface or troposphere is reached. In reality the increase in downward radiation doesn’t occur in just one location, extra downward longwave radiation occurs all throughout the troposphere and stratosphere.

In the example above, from 2010 to 2020, as surface and troposphere temperatures increased, radiative forcing would also increase slightly, so it wouldn’t necessarily be constant at 1.7 W/m².

Climate sensitivity is calculated by GCMs to work out the resulting long term (“equilibrium”) temperature change, with climate feedbacks, from increased radiative forcing. No warranty express or implied as to their accuracy or usefulness, just explaining how to apply the climate sensitivity value correctly.

Read Full Post »

American Thinker Smoking Gun – Gary Thompson’s comments examined

Posted in Commentary on March 27, 2010| 31 Comments »

Recap

This post is a follow on from my original article: American Thinker – the Difference between a Smoking Gun and a Science Paper.

Gary Thompson who wrote the article in American Thinker that prompted my article was kind enough to make some comments and clarifications. Instead of responding in the comments to the first article, I thought it was worth a new post, especially to get the extra formatting that is allowed in posts rather than comments.

I appreciate him commenting and I’m sure he has a thick skin, but just in case, any criticisms are aimed at getting to the heart of the matter, rather than at him – or anyone else who has different views.

For people who have landed at this post and haven’t read the original.. the heart of Gary’s article were 3 papers, of which I examined one (the first). The paper compared two 3-month periods 27 years apart in the East and West Pacific. Gary commented that the actual OLR (outgoing longwave radiation) was higher in the later period in the important CO2 band (or what we could see of it).

His claim – the theory says that more CO2 should lead to less emission at those wavelengths and therefore the theory has been disproved.

My point – Gary doesn’t understand the theory. The temperature was higher in the later period in this region and therefore the radiation leaving the earth’s surface would be higher. We’ll see this explained again, but did I mention that you should read Gary’s article and my article before moving forward? Also at the end of the Science of Doom post you can see Gary’s comments. Always worth reading what people actually wrote rather than what someone (me) with the opposite point of view highlighted from their words..

The Unqualified Statements in Papers

Gary started by saying:

And cites two papers. The first, a 1998 paper: The Trace Gas Greenhouse Effect and Global Warming by the great V. Ramanathan (I will continue to call him ‘great’ even though he didn’t reply to my email about his 1978 paper.. possibly busy, but still..).

I recommend this 1998 paper to everyone reading this article. Even though it is 12 years old, it is all relevant and a very readable summary.

The Great Ramanathan

Gary pointed out page 3 where statements appeared to back up his (Gary’s) interpretation of the later OLR study. Here’s what Ramanathan said:

Gary commented on the last part of this:

And on the first part:

For half the readers here, they are shaking their heads.. But for Gary and the other half of the readership, let’s press on.

First of all, if we took any of 1000 papers on the “greenhouse” effect and observations, models, theoretical adjustments, impacts on GCMs – I bet you could find at least 700 – 900 of them at some point will make a statement that could be pulled out which has no “clarifying statement”. That “cast doubt” on the theory. Perhaps 1000 out of a 1000.

Context, context, context as they say in real estate.

Where to begin? Let’s look at “the theory” first. And then come back and examine Ramanathan’s statements.

The Theory

There are a few basics. For newcomers, you can take an extended look at the theory in CO2 – An Insignificant Trace Gas? It’s in seven parts! Actually it’s a compressed treatment.

This is itself is a clue.

In the books I have seen on Atmospheric Physics many tens of pages are devoted to radiation, including absorption and re-radiation – the “greenhouse” effect – and many tens of pages are devoted to convection. Understanding the basics is critical.

For Gary and his followers, the theory is on a precipice and these papers are giving us that clue. For people who’ve studied the subject the theory of the “greenhouse” effect is as solid as the theory of angular momentum, or the 2nd law of thermodynamics (the real one, not the imaginary one).

As Ramanthan says in the same paper Gary cites:

If I can paraphrase:

Perhaps he has not realized that his words in the same paper combined with experiments demonstrate a flaw in the theory of the dead-solid “greenhouse” effect..

Back to the theory, but first..

A Quick, possibly Annoying, Diversion to the Theory of Gravity

But before we start, I thought it was worth an analogy. Analogies are illustrations, not proof of anything. They can often inflame an argument, but that’s not the intention here. Many people who are still undecided about the amazing theory of the inappropriately-named “greenhouse” effect might welcome a break from thinking about it.

The theory of planetary movements is my analogy. I picture a world where gravity – and its effects on the planets orbiting the sun – is strangely controversial. A concerned citizen, leafing through some fairly recent scientific papers notices that planets don’t really go around the sun in ellipses as the theory claims. In fact, there are some quite odd movements. And so, surprised that the scientists can’t see what’s in plain sight, this citizen draws attention to them.

When some detail-orientated commenters point out that the theory is actually (in part):

F = GMm/r2

where F= force between 2 bodies, G is a constant, M and m are the masses of the 2 bodies and r is the distance between them

And the ellipse idea is just a handy generalization of the results of the laws of graviational attraction.

And the reason why some planetary movements recently measured don’t follow an ellipse is because a few planets are a bit closer together, there’s a large asteroid flying between them and so when we do the maths it all works out pretty well.

The original concerned citizen then pulls out a few papers where, in the introduction, gravity is explained as that force that produces elliptical movements in the planets.. with no disclaimers about F=GMm/r2 and claims that this theory is, therefore, under question.

Why this annoying analogy? Most “theories” in physics are developed because of some observations which get analyzed to death – and finally someone produces a “comprehensive theory” that satisfies most of the relevant scientific community. The paper with the comprehensive theory usually contains some equations, some observations, some matching of the two – but often in common everyday usage the shorthand version of the theory is used.

“The theory of gravity tells us that planets orbit the sun in an elliptical manner, and ..”

So much more tedious to keep saying F=GMm/r2, and the other formulae..

(I know, I haven’t proved anything, but maybe a few readers can take a moment and see a parallel..)

Back to the Radiative-Convective Theory

First, bodies radiate energy according to Planck’s formula, which looks complicated when written down. The idea is simplified by the total energy radiated according to the Stefan-Boltzmann law which says that total energy is proportional to the 4th power of temperature.

Temperature goes up, energy radiated goes up (quite a bit more) – and the peak wavelength is a little lower. Here’s a graphical look of the Planck formula which takes away the mathematical pain:

Blackbody Radiation at 288K and 289K (15'C and 16'C)

Two curves – 288K and 289K. Now zoomed in a little where most of the energy is:

Close up of the peak energy of 288K and 289K

Total energy in the top curve (289K) is 396W/m^2, and in the bottom curve (288K) is 390W/m^2

Second, trace gases absorb energy according to a formula, which when simplifed is the Beer-Lambert law.

Absorption of Radiation as "optical thickness" increases

Without going into a lot of maths, as the concentration of a “greenhouse” gas increases, the absorption graph falls off more steeply – more energy is absorbed.

Third, re-radiation of this energy takes place according to an energy balance equation that you can see in CO2 – Part Three. The energy balance or radiative transfer equations rely on knowledge of the temperature profile in the lower atmosphere (the troposphere), because the actual temperature here is dominated by convection not radiation. (Radiation still takes place and the temperature profile is a major factor in the radiation – but this effect is not the primary determinant of the temperature profile).

When Gary says:

It sounds like Gary believes this “model” is some suspicious extra that tries to deal with problems between the theory and the real world. But it’s the foundation. Anyone who had read an introduction to atmospheric physics would understand that. Someone who had tried hard to understand a few papers without a proper foundation would easily miss it.

Which effect dominates in a particular situation?

It’s simple conceptually. But if we want exact results, such as which effect dominates in a particular situation, we need a “model”: an equation or set of equations. To quantify the effects we have to solve some tricky equations, which you can see in a paper by... the Great Ramanathan. Well, Ramanathan & Coakley 1978, a seminal paper on Climate Modeling through Radiative-Convective Models. Here’s an extract from p7:

A few equations from Ramanathan and Coakley, p7

Lots of maths. The rest of the paper is similar. Let’s move on to Ramanathan’s much later paper and what he said and meant.

Has Ramanathan given up on The Theory?

Let’s review the words Gary pulled out of the paper:

Gary reads into this: “here the author stated clearly that even taking into account higher emissions from warmer surfaces, the net will still be a reduction”. Gary takes Ramanathan to be saying something like:

-my paraphrase.

But no, he has totally misunderstood what the author is saying. Ramanathan is giving a quick run-through of the basics and explaining how the greenhouse effect works.

Because the atmosphere is colder than the surface, the radiation to space from this colder atmosphere is lower than the radiation from the surface. (This is the “net trapping”.) Hence the “greenhouse” effect. There is no conclusion here that “at all times and in all situations, increases in ‘greenhouse’ gases will lead to a reduction in OLR in these bands”. The conclusion is simply that “greenhouse” gases make the surface warmer than it would be without them.

And on the first claim, Ramanathan said:

Gary said:

That’s because the basics of the theory are the solid foundation and don’t need to be restated as qualifiers to every statement. Ramanathan helped write the theory! For people who think that this stuff is just some added extra, read his 1978 paper and see all the maths and the explanations. This is the theory.

Earlier in the same statement he said “Since the emission increases with temperature” but didn’t qualify it with “emission increases in proportion to the 4th power of temperature”.

Is Ramanathan losing confidence in the Stefan-Boltzmann formula? Or Planck? Are these rocks crumbling?

It’s only because Gary has decided that these points are somehow rocky that he reaches these conclusions. (And I could pick tens of other statements in the paper which, without qualifiers, could be taken as the beginning of the end of a specific theory.)

As a general point, consider the hypothetical global annual average once a “new equilibrium” has been reached after CO2 increases (if such an equilibrium exists). With the absorbed solar radiation unchanged, the theory (the 1st law of thermodynamics) predicts that the “new” OLR will match the “old” OLR as a global annual average. In that case the OLR in the “greenhouse” gas bands will be reduced a little, while the OLR outside those bands will be increased a little.

Ramanathan hasn’t cast any doubt on it. He believes it. His papers from the 1970s to today work it all out. He just doesn’t add qualifiers to every statement as if each line will be read by people who don’t understand the theory.

Gary’s Maths

In Gary’s comment he reproduced his back-of-envelope calculations of how much the surface temperature should have changed over this 27-year period.

He takes the charitable approach of considering a net reduction in OLR from one of the three papers he originally reviewed, if I understood this step correctly. The figure he works with is a 1K reduction. He then tries to work out what effect this 1K reduction in OLR in the CO2 band would have on surface temperatures.

Like all good science, this starts on napkins and the backs of envelopes, because everyone studying a problem first has to attempt to quantify it using available data and available formulae. Then, once the first results are worked out and it seems that something new has been discovered (or something old overturned), the scientist, patent clerk or writer has to turn to more serious methods.

In Gary’s preliminary results he shows that a reduction in OLR due to CO2 might have contributed something like 0.3°C to the surface temperature change over 30 years or so, whereas the GISS temperature increase for the period is something like 0.7°C. The essence of the calculation was to compare the Planck function (see the first and second graphs in this post) at two temperatures 1K apart, work out how much of the difference lies in the CO2 band, and so calculate the approximate change in W/m2. He then applied a “climate sensitivity” number from realclimate.org to convert that into a temperature change.
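For readers who want to follow the first step of this back-of-envelope calculation, here is a minimal sketch of the band difference between two blackbodies 1K apart. The band edges (14 to 16 µm) and the 288K reference temperature are assumptions for illustration only; they are not necessarily the values Gary used. The sketch deliberately stops at W/m2: the problem discussed in the rest of this section lies in what this number is then fed into, not in the Planck arithmetic.

```python
import math

H, C, K_B = 6.62607015e-34, 2.99792458e8, 1.380649e-23  # SI constants


def planck_exitance(wavelength_um: float, temp_k: float) -> float:
    """Blackbody spectral exitance in W/m^2 per micrometre."""
    lam = wavelength_um * 1e-6  # micrometres -> metres
    x = H * C / (lam * K_B * temp_k)
    return 2 * math.pi * H * C ** 2 / lam ** 5 / math.expm1(x) * 1e-6


def band_flux(temp_k: float, lo_um: float, hi_um: float, steps: int = 2000) -> float:
    """Trapezoid-rule integral of the Planck curve over one wavelength band, W/m^2."""
    h = (hi_um - lo_um) / steps
    inner = sum(planck_exitance(lo_um + i * h, temp_k) for i in range(1, steps))
    ends = 0.5 * (planck_exitance(lo_um, temp_k) + planck_exitance(hi_um, temp_k))
    return (ends + inner) * h


BAND_UM = (14.0, 16.0)  # assumed edges of "the CO2 band", illustrative only
T_REF_K = 288.0         # assumed emitting temperature, illustrative only

delta = band_flux(T_REF_K, *BAND_UM) - band_flux(T_REF_K - 1.0, *BAND_UM)
print(f"1K cooler in {BAND_UM[0]}-{BAND_UM[1]} um: {delta:.3f} W/m2 less emitted")
```

The result depends strongly on the assumed band edges and temperature. Whatever the exact figure, it is a change in upward emission.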

Radiative physics has the potential to confuse everyone. Perhaps if we consider the “equilibrium case”, one problem with Gary’s calculation above will become clearer.

What’s the equilibrium case? In fact, it’s one generalized result of the real theory. And because it’s easier to understand than dI = -Inσdz + Bnσdz = (I – B)dχ, people start to think that the generalized result under equilibrium is the theory.
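For those who like the maths, here is that equation written out, assuming (as in the Beer-Lambert form) that n is the number density of absorbing molecules, σ their absorption cross-section, B the Planck emission at the local temperature, and dχ = −nσ dz, so that optical depth χ increases downward. For a thin layer at a single temperature it integrates to a simple rule:

$$
dI = -I\,n\sigma\,dz + B\,n\sigma\,dz = (I - B)\,d\chi, \qquad d\chi = -n\sigma\,dz
$$

$$
\frac{dI}{dz} = n\sigma\,(B - I) \quad\Longrightarrow\quad I_{\text{top}} = I_{\text{bottom}}\,e^{-\Delta\chi} + B\left(1 - e^{-\Delta\chi}\right), \qquad \Delta\chi = n\sigma\,\Delta z
$$

The first term is the Beer-Lambert absorption of what enters the layer; the second is the layer’s own emission. Where the layer is colder than the radiation arriving from below (B < I), the upward radiation falls as it climbs; where it is warmer, it rises.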

Under equilibrium, energy out = energy in. This applies to the whole climate system. The incoming solar radiation that is absorbed, averaged across the whole surface area of the earth, is 239 W/m2.

So if the planet is not heating up or cooling down, energy out (OLR) must also equal 239 W/m2.
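The 239 W/m2 figure can be reproduced roughly with a minimal sketch. The inputs are assumptions, not numbers from this post: a solar constant of roughly 1366 W/m2 and a planetary albedo of roughly 0.3. The factor of 4 is the ratio of the earth’s surface area (4πr²) to the area of the disc that intercepts sunlight (πr²).

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1366.0  # W/m^2 arriving at the top of the atmosphere (assumed)
ALBEDO = 0.30            # fraction of sunlight reflected back to space (assumed)

# Sunlight is intercepted by a disc but averaged over the whole sphere
absorbed = SOLAR_CONSTANT * (1 - ALBEDO) / 4

# In equilibrium, energy out (OLR) must equal energy in
olr_equilibrium = absorbed

# Temperature of a blackbody that would emit exactly that OLR
t_effective = (olr_equilibrium / SIGMA) ** 0.25
print(f"absorbed = OLR = {absorbed:.0f} W/m2, effective temperature {t_effective:.0f} K")
```

The same balance gives the familiar effective emission temperature of about 255K, well below the 288K surface; the difference is the “greenhouse” effect.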

So consider the golden age of equilibrium, in 1800 or thereabouts. We’ll assume the above numbers are true for that case. Then lots of CO2 (and other stuff) was added. Let’s suppose CO2 has reached the current level of 380ppm and stays constant from now on. The downward radiative forcing from the increase in CO2, as calculated at “top of atmosphere”, is 1.7 W/m2. And nothing else happens in the climate (“all other things being equal”, as we say).

Eventually we will reach the new equilibrium. It doesn’t matter how long it takes, but let’s pretend it’s 2020. Before equilibrium the planet will be heating up, which means OLR < 239 W/m2 (because more energy must come into the planet than leaves it for the planet to warm up). At equilibrium, in 2020, OLR = 239 W/m2 once again. (Of course, at wavelengths around 15μm the energy will be lower than in 1800, and at other wavelengths it will be higher.)

At this new equilibrium point we still have a “radiative forcing” of 1.7 W/m2, which is why the surface temperature is higher, yet OLR measured in 1800 and again in 2020 shows no change.

Suppose we use, like Gary, the realclimate.org climate sensitivity. How do we calculate the new equilibrium temperature?

The change in OLR is 0.0 W/m2. Therefore, change in temperature = 0°C. Ha, we’ve proved realclimate wrong. Climate sensitivity cannot exist.

Or, it was wrongly applied.

As trace gases like CO2 increase in concentration they absorb more energy. The atmosphere warms up and re-radiates the energy in all directions. Simplifying, we say it re-radiates both up and down. The extra downward radiation is what is used to work out changes in surface temperature, not the immediate or eventual change in OLR.
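The equilibrium example can be run as a minimal one-box energy-balance sketch. Apart from 239 and 1.7 W/m2, every number in it is a hypothetical choice for illustration: the heat capacity, the time step and, in particular, the climate sensitivity of 0.8 K per W/m2, which is not the realclimate.org figure. It assumes absorbed sunlight stays fixed, the forcing is applied all at once, and the extra emission to space grows linearly with the temperature rise.

```python
ABSORBED_SOLAR = 239.0  # W/m^2, held fixed
FORCING = 1.7           # W/m^2 from the CO2 increase, applied at year 0
SENSITIVITY = 0.8       # K per W/m^2, hypothetical value for illustration
HEAT_CAPACITY = 10.0    # W yr m^-2 K^-1, hypothetical mixed-layer value
DT_YEARS = 0.1          # time step of the simple forward-Euler integration


def run(years: float) -> list[tuple[float, float, float]]:
    """Return (year, warming, OLR) samples from a one-box energy balance."""
    warming = 0.0
    samples = []
    for step in range(int(years / DT_YEARS) + 1):
        # The forcing cuts OLR at first; warming then raises emission back up
        olr = ABSORBED_SOLAR - FORCING + warming / SENSITIVITY
        samples.append((step * DT_YEARS, warming, olr))
        imbalance = ABSORBED_SOLAR - olr  # energy in minus energy out
        warming += imbalance / HEAT_CAPACITY * DT_YEARS
    return samples


samples = run(200.0)
for year, warming, olr in samples[:: len(samples) // 4]:
    print(f"year {year:5.1f}: warming {warming:.2f} K, OLR {olr:.2f} W/m2")

olr_change = samples[-1][2] - ABSORBED_SOLAR  # vs. the pre-forcing OLR
print(f"sensitivity x change in OLR: {SENSITIVITY * olr_change:.2f} K")
print(f"sensitivity x forcing:       {SENSITIVITY * FORCING:.2f} K "
      f"(model warming {samples[-1][1]:.2f} K)")
```

OLR starts below 239 W/m2 while the planet warms and ends back at 239 W/m2, while the temperature settles at sensitivity × forcing. Multiplying the sensitivity by the change in OLR gives essentially zero; multiplying it by the forcing gives the warming the model actually produced.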

The extra downward part is usually called “radiative forcing” and comes with a number of definitions you can see in Part Seven of the CO2 series (along with my mistaken attempt to do a “back of envelope” calculation of surface temperature changes without feedback).

How do we work out the change in surface temperature?

In Gary’s case, what he will need to know is the change in downward longwave radiation. Not upwards.

Conclusion

The theory of radiative transfer in the atmosphere is, at its heart, a relatively simple one, but applying it is computationally very challenging. That makes its details hard to grasp intuitively.

The theory isn’t the generalized idea of what will happen when moving from one equilibrium state to another equilibrium state with more “greenhouse” gases. That is a consequence of the theory under specific (and idealized) circumstances.

But because everyone likes shorthand, to many people this has become “the theory”. So when someone applies the maths to a specific situation, it is “suspicious”. Maths becomes equated with “models”, which to many people means “GCMs”, which means “make-believe”. I think that if Gary had read Climate Modeling through Radiative-Convective Models by the Great Ramanathan, he might have a different opinion about the later paper and about whether Ramanathan is “bailing out” on the extremely solid theory of “greenhouse” gases.

Anyone who has read a book on atmospheric physics would know that Gary’s claim is – no nice way to say it – “unsupported”. Yes – cruel, harsh, wounding words – but it had to be said.

However, most of the readers here, and most of those on American Thinker, haven’t read an undergraduate book on the topic. So it is worth trying to explain it in some detail.

It’s also great to see people trying to validate or falsify theories with a little maths. Working out some numbers is an essential step in proving or disproving our own theories and those of others. In the example Gary provided he didn’t apply the correct calculation. (The subsequent pages of Ramanathan’s 1998 paper also run through these basics).

For those convinced that the idea that more CO2 will warm the surface of the planet is some crazy theory – these words are in vain.

But for the many more people who want to understand climate science, hopefully this article provides some perspective. The claims in American Thinker might at first sight seem to be a major problem to an important theory. But they aren’t.

Note – I haven’t yet opened the Philipona paper, but will do so in the coming weeks and will probably add another article about it. I didn’t want to leave Gary’s comments unanswered, and this post is already long enough.

Note on a Few Technical Matters

A few clarifications are in order, for people who like to get their teeth into the technical details.

Strictly speaking, at the very top of the atmosphere there is no downward longwave forcing at all, because there is no atmosphere above that point to radiate downward.

The IPCC definition of “radiative forcing” at “top of atmosphere” is a handy tool for comparing the extra downward radiation before feedbacks, and before the surface or troposphere reaches equilibrium. In reality the increase in downward radiation doesn’t occur in just one location; extra downward longwave radiation occurs throughout the troposphere and stratosphere.

In the example above, from 2010 to 2020 as surface and troposphere temperatures increased, radiative forcing would also increase slightly so it wouldn’t necessarily be constant at 1.7W/m2.

Climate sensitivity is calculated by GCMs to work out the resulting long term (“equilibrium”) temperature change, with climate feedbacks, from increased radiative forcing. No warranty express or implied as to their accuracy or usefulness, just explaining how to apply the climate sensitivity value correctly.

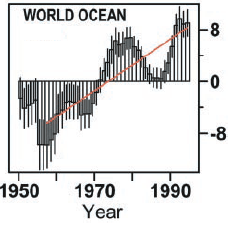
