Gary Thompson at American Thinker recently published an article, The AGW Smoking Gun, in which he takes three papers and claims to demonstrate that they are at odds with AGW.

A key component of the scientific argument for anthropogenic global warming (AGW) has been disproven. The results are hiding in plain sight in peer-reviewed journals.

The article was discussed on Skeptical Science in the post “Have American Thinker Disproven Global Warming?”, although that post really only covered the second paper. The discussion was especially worth reading because Gary Thompson joined in and showed himself to be a thoughtful and courteous fellow.

He did claim in that discussion that:

First off, I never stated in the article that I was disproving the greenhouse effect. My aim was to disprove the AGW hypothesis as I stated in the article “increased emission of CO2 into the atmosphere (by humans) is causing the Earth to warm at such a rate that it threatens our survival.” I think I made it clear in the article that the greenhouse effect is not only real but vital for our planet (since we’d be much cooler than we are now if it didn’t exist).

However, the papers he cites are really demonstrating the reality of the “greenhouse” effect. If his conclusions – different from the authors of the papers – are correct, then he has demonstrated a problem with the “greenhouse” effect, which is a component – a foundation – of AGW.

This article will cover the first paper, which appears to be a conference paper: Changes in the earth’s resolved outgoing longwave radiation field as seen from the IRIS and IMG instruments by H.E. Brindley et al. If you are new to the basics of longwave and shortwave radiation and absorption by trace gases, take a look at CO2 – An Insignificant Trace Gas?

Take one look at a smoking gun and you know it’s been fired. Take one look at a paper on a complex subject like atmospheric physics and you might easily jump to the wrong conclusion. Let’s hope I haven’t fallen into the same trap.

The Concept Behind the Paper

The paper examines the difference between satellite measurements of outgoing longwave radiation made in 1970 and in 1997. The measurements are for clear-sky conditions only, to remove the complexity associated with the radiative effects of clouds (they did this by removing the measurements that appeared to be under cloudy conditions). The measurements are over the Pacific, and the data are presented separately for the east and the west. The data are from April-June in both years.

The Measurement

The spectral data cover 7.1 – 14.1 μm (1400 cm-1 – 710 cm-1 using the convention of spectroscopists, see note 1 at the end). Unfortunately, the measurements closer to the 15μm band were too noisy to be reliable.

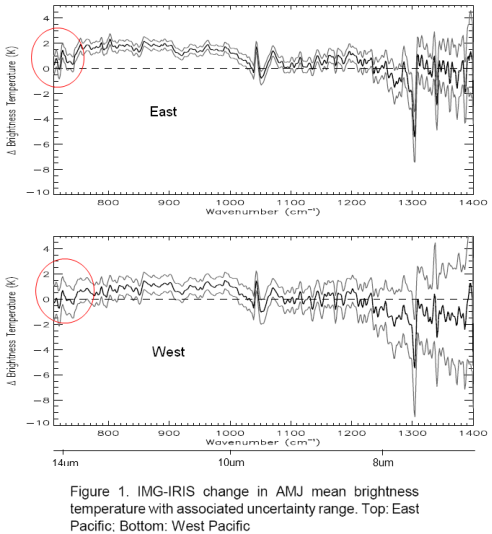

Their first graph shows the difference between the 1997 and 1970 spectra (1997 – 1970), converted from W/m2 into brightness temperature (the equivalent blackbody radiation temperature). I highlighted the immediate area of concern, the “smoking gun”:

Note first that the 3 lines on each graph correspond to the measurement (middle) and the error bars either side.

I added wavelength in μm under the cm-1 axis for reference.
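Brightness temperature is simply the Planck function run backwards at each wavenumber. Here is a minimal sketch, assuming the paper used this standard conversion for radiance per unit wavenumber (their exact processing may differ); the function names are my own:

```python
import math

# Radiation constants for wavenumbers in cm^-1 (from CODATA values of h, c and k).
C1 = 1.191042972e-8   # 2*h*c^2, in W m^-2 sr^-1 (cm^-1)^-4
C2 = 1.438776877      # h*c/k, in cm K


def planck_radiance(wavenumber_cm, temp_k):
    """Blackbody spectral radiance in W m^-2 sr^-1 per cm^-1."""
    return C1 * wavenumber_cm**3 / math.expm1(C2 * wavenumber_cm / temp_k)


def brightness_temperature(wavenumber_cm, radiance):
    """Temperature of the blackbody that would emit `radiance` at this wavenumber."""
    return C2 * wavenumber_cm / math.log1p(C1 * wavenumber_cm**3 / radiance)


# Round trip: radiance from a 290 K blackbody converts back to 290 K at any wavenumber.
for wn in (710.0, 1000.0, 1400.0):
    r = planck_radiance(wn, 290.0)
    print(f"{wn:.0f} cm^-1: radiance {r:.4e}, brightness temperature {brightness_temperature(wn, r):.3f} K")
```

Expressing the spectra this way makes changes at different wavenumbers comparable in kelvin, which is why a difference plot in K is easier to read than one in W/m2.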

What Gary Thompson draws attention to is the fact that OLR (outgoing longwave radiation) has increased even in the 13.5+μm range, which is where CO2 absorbs radiation – and CO2 increased during the period in question (from about 325ppm to about 364ppm at Mauna Loa). Surely, with an increase in CO2 there should be more absorption, and therefore the measured change should be negative for the observed 13.5μm-14.1μm wavelengths.

One immediate thought, without any serious analysis or model results, is that we aren’t quite into the main CO2 absorption band, which is 14 – 16μm. But let’s read on and understand what the data and the theory are telling us.

Analysis

The key question we need to ask before we can draw any conclusions is: what is the difference between the surface and atmosphere in these two situations?

We aren’t comparing the global average over a decade with an earlier decade. We are comparing 3 months in one region with 3 months 27 years earlier in the same region.

Herein seems to lie the key to understanding the data.

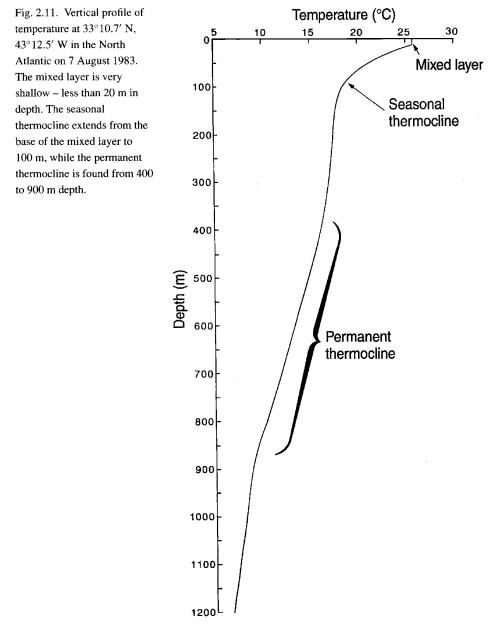

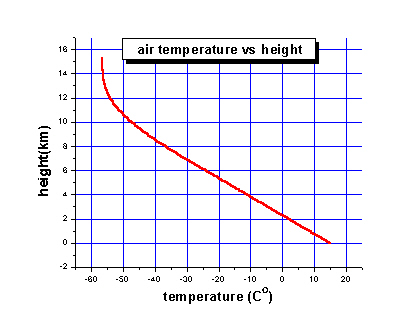

For the authors of the paper to assess the spectral results against theory they needed to know the atmospheric profile of temperature and humidity, as well as changes in the well-studied trace gases like CO2 and methane. Why? Well, the only way to work out the “expected” results – or what the theory predicts – is to solve the radiative transfer equations (RTE) for that vertical profile through the atmosphere. Solving those equations, as you can see in CO2 – Part Three, Four and Five – requires knowledge of the temperature profile as well as the concentration of the various gases that absorb longwave radiation. This includes water vapor and, therefore, we need to know humidity.

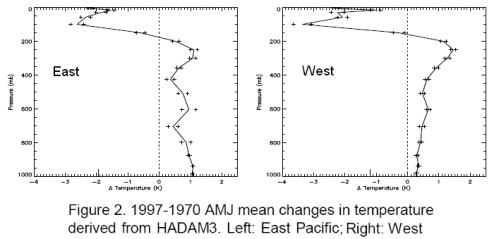

I’ve split up their graphs. This one is the temperature change; the humidity graphs are below.

Now it is important to understand where the temperature profiles came from. They came from model results driven by the recorded sea surface temperatures during the two periods. Temperature profiles through the atmosphere are not usually available with any useful geographic and vertical resolution, especially for 1970. This is even more true for humidity.

Note that the temperature – the real sea surface temperature – in 1997 for these 3 months is higher than in 1970.

Higher temperature = higher radiation across the spectrum of emission.
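The equation behind “higher temperature = higher radiation” is the Planck function. A minimal sketch, using two hypothetical temperatures 1K apart (not the paper’s SST values), shows that the radiance rises at every wavenumber in the measured band, and proportionally more at higher wavenumbers:

```python
import math

# Radiation constants for wavenumbers in cm^-1.
C1 = 1.191042972e-8   # 2*h*c^2, W m^-2 sr^-1 (cm^-1)^-4
C2 = 1.438776877      # h*c/k, cm K


def planck_radiance(wavenumber_cm, temp_k):
    """Blackbody spectral radiance in W m^-2 sr^-1 per cm^-1."""
    return C1 * wavenumber_cm**3 / math.expm1(C2 * wavenumber_cm / temp_k)


# Two hypothetical surface temperatures 1 K apart (not the paper's SST values).
T_COOL, T_WARM = 299.0, 300.0

# Ratio of warm to cool radiance across the band measured in the paper, 710-1400 cm^-1.
ratios = {wn: planck_radiance(wn, T_WARM) / planck_radiance(wn, T_COOL)
          for wn in range(710, 1401, 10)}

for wn in (710, 1000, 1400):
    print(f"{wn} cm^-1: +{100 * (ratios[wn] - 1):.2f}% radiance for +1 K")
```

So a warmer sea surface on its own pushes the whole difference spectrum upward, and that is the baseline any trace gas signal has to be judged against.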

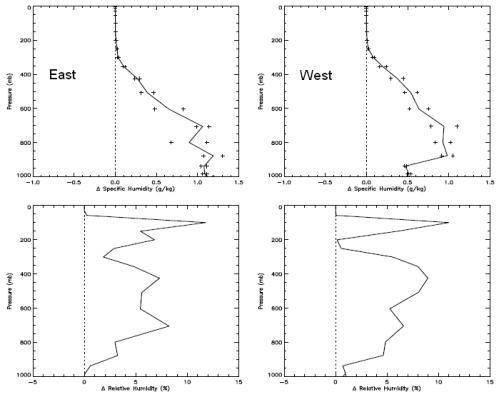

Now the humidity:

The top graph is the change in specific humidity – how many grams of water vapor per kg of air. The bottom is the change in relative humidity. It’s not relevant to the subject of this post, but you can see that even though the change in relative humidity is large high up in the atmosphere, it doesn’t change the absolute amount of water vapor in any meaningful way, because it is so cold high up in the atmosphere. Cold air cannot hold as much water vapor as warm air.

It’s no surprise to see higher humidity when the sea temperature is warmer. Warmer air can hold more water vapor before it saturates, and there is no shortage of water to evaporate from the ocean surface.
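The point about cold air can be made concrete with a standard approximation for saturation vapor pressure (the Bolton 1980 fit of the Magnus form). The two levels below are hypothetical round numbers, not the paper’s profiles, and the fit is only intended for roughly -30°C to +35°C over liquid water, so the -50°C value is an order-of-magnitude illustration:

```python
import math


def saturation_vapor_pressure_hpa(temp_c):
    """Bolton (1980) fit over liquid water, in hPa.
    Intended for about -30 C to +35 C; used colder here only for order of magnitude."""
    return 6.112 * math.exp(17.67 * temp_c / (temp_c + 243.5))


def saturation_specific_humidity_gkg(temp_c, pressure_hpa):
    """Saturation specific humidity, in grams of water vapor per kg of moist air."""
    e = saturation_vapor_pressure_hpa(temp_c)
    return 1000.0 * 0.622 * e / (pressure_hpa - 0.378 * e)


# Hypothetical levels: near the sea surface, and in the cold upper troposphere.
LEVELS = {
    "surface (1000 hPa, 27 C)": (27.0, 1000.0),
    "upper troposphere (200 hPa, -50 C)": (-50.0, 200.0),
}

for name, (t, p) in LEVELS.items():
    qs = saturation_specific_humidity_gkg(t, p)
    # The same 10-point change in relative humidity, expressed as grams per kg:
    print(f"{name}: saturation q = {qs:.2f} g/kg, 10% RH change = {0.1 * qs:.3f} g/kg")
```

A large change in relative humidity high up amounts to a tiny change in grams of water vapor, while the same change near a warm sea surface is about a hundred times bigger.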

Model Results of Expected Longwave Radiation

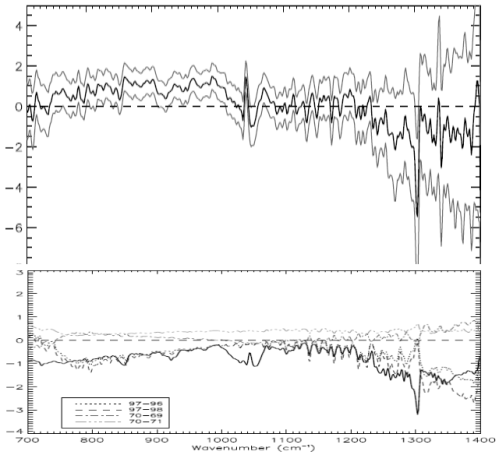

Now here are some important graphs which initially can be a little confusing. It’s worth taking a few minutes to see what these graphs tell us. Stay with me.

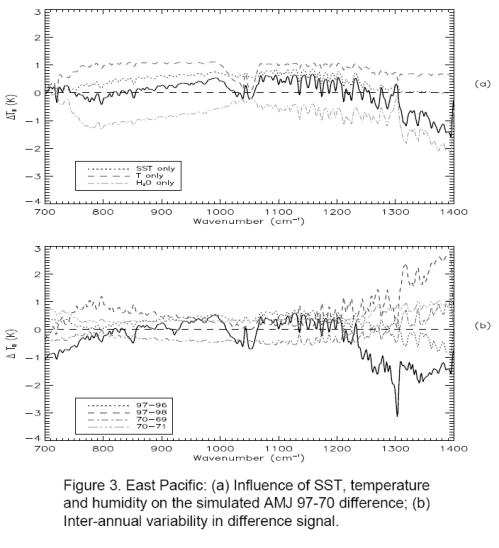

The top graph. The bold line is the model result for the expected change in longwave radiation – not including the effect of CO2, methane, etc – but taking into account sea surface temperature and the modeled atmospheric temperature and humidity profiles.

This calculation includes solving the radiative transfer equations through the atmosphere (see CO2 – An Insignificant Trace Gas? Part Five for more explanation on this, and you will see why the vertical temperature profile through the atmosphere is needed).

The breakdown is especially interesting – the three fainter lines. Notice that the two fainter lines at the top are the separate effects of the warmer surface and the warmer atmosphere, each creating more longwave radiation. The third fainter line, below the bold line, is the effect of water vapor. As a greenhouse gas, water vapor absorbs longwave radiation across a wide spectral range, and therefore pulls the outgoing longwave radiation down.
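To see why the vertical temperature profile matters, and why these three effects have the signs they do, here is a deliberately crude sketch of a radiative transfer calculation: one wavenumber, a view straight down, no scattering, and ten isothermal layers. Every number in it (temperatures, lapse rate, absorber amounts) is a hypothetical choice of mine, not the paper’s model input; real codes work through thousands of spectral intervals and many more layers.

```python
import math

C1 = 1.191042972e-8   # 2*h*c^2, W m^-2 sr^-1 (cm^-1)^-4
C2 = 1.438776877      # h*c/k, cm K


def planck(wn, t):
    """Blackbody radiance in W m^-2 sr^-1 per cm^-1."""
    return C1 * wn**3 / math.expm1(C2 * wn / t)


def brightness_temperature(wn, radiance):
    """Inverse of planck(): the equivalent blackbody temperature."""
    return C2 * wn / math.log1p(C1 * wn**3 / radiance)


def toa_radiance(wn, t_surface, layer_temps, layer_emissivities):
    """March upward from a blackbody surface through isothermal layers (bottom first).
    Each layer passes on (1 - eps) of the radiance from below and adds eps * B(T)."""
    radiance = planck(wn, t_surface)
    for t, eps in zip(layer_temps, layer_emissivities):
        radiance = radiance * (1.0 - eps) + eps * planck(wn, t)
    return radiance


# Hypothetical setup: a window wavenumber, ten 1 km layers, a fixed lapse rate, and an
# absorber ("water vapor") whose optical depth falls off with a 2 km scale height.
WN = 900.0
HEIGHTS_KM = [0.5 + i for i in range(10)]
T_SURFACE = 300.0
LAPSE_K_PER_KM = 6.5


def olr_brightness_temp(d_surface=0.0, d_atmos=0.0, vapor_scale=1.0):
    temps = [T_SURFACE - LAPSE_K_PER_KM * z + d_atmos for z in HEIGHTS_KM]
    eps = [1.0 - math.exp(-0.15 * vapor_scale * math.exp(-z / 2.0)) for z in HEIGHTS_KM]
    return brightness_temperature(WN, toa_radiance(WN, T_SURFACE + d_surface, temps, eps))


base = olr_brightness_temp()
effects = {
    "warmer surface (+1 K)": olr_brightness_temp(d_surface=1.0) - base,
    "warmer atmosphere (+1 K)": olr_brightness_temp(d_atmos=1.0) - base,
    "more water vapor (+10%)": olr_brightness_temp(vapor_scale=1.1) - base,
}
for name, change in effects.items():
    print(f"{name}: {change:+.3f} K")
```

Warming the surface or the atmosphere raises the outgoing radiance, while adding absorber lowers it: each layer is colder than the radiation arriving from below, so it emits less than it absorbs. Change the temperature profile and the answer changes, which is why the profile is an essential input.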

So the bold line in the top graph is the composite of these three effects. Notice that without any CO2 effect in the model, the graph towards the left edge trends up: 700 cm-1 to 750 cm-1 (roughly 13.3μm to 14.3μm). This is because water vapor is absorbing a lot of radiation to the right (at shorter wavelengths), dragging that part of the graph proportionately down.

The bottom graph. The bold line in the bottom graph shows the modeled spectral results including the effects of the long-term changes in the trace gases CO2, O3, N2O, CH4, CFC11 and CFC12. (The bottom graph also confuses us by including some inter-annual temperature changes – the fainter lines – let’s ignore those).

Compare the top and bottom bold graphs to see the effect of the trace gases. In the middle of the graph you see O3 at 1040 cm-1 (9.6μm). Over on the right around 1300cm-1 you see methane absorption. And on the left around 700cm-1 you see the start of CO2 absorption, which would continue on to its maximum effect at 667cm-1 or 15μm.

Of course we want to compare this bottom graph – the full model results – with the observed results, but the vertical axes are slightly different, which makes that harder.

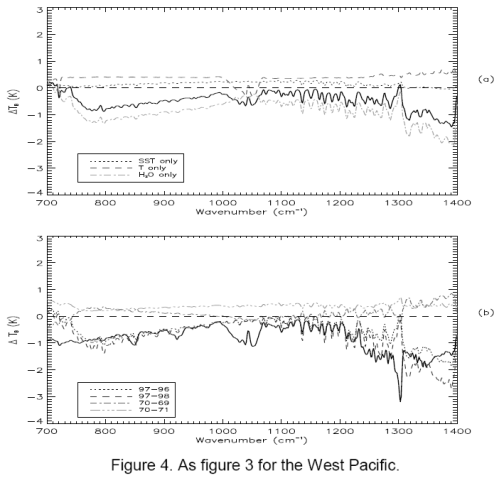

First, for completeness, here are the same graphs for the West Pacific:

Let’s try comparing the observations with the full model. It’s slightly ugly because I don’t have the source data, just a graphics package to line the graphs up on comparable vertical axes.

Here is the East Pacific. Top is observed with (1 standard deviation) error bars. Bottom is model results based on: observed SST; modeled atmospheric profile for temperature and humidity; plus effect of trace gases:

Now the West Pacific:

We notice a few things.

First, the model and the results aren’t perfect replicas.

Second, the model and the results both show a very similar change in the profile around methane (right “dip”), ozone (middle “dip”) and CO2 (left “dip”).

Third, the models show a negative change in brightness temperature (about -1K) at 700 cm-1, whereas the observed change is around +1K for the East Pacific and around -0.5K for the West Pacific. The 1 standard deviation error bars on the measurements include the model results: easily for the West Pacific and only just for the East Pacific.

It appears to be this last observation that has prompted the article in American Thinker.

Conclusion

Hopefully, those who have taken the time to review:

- the results

- the actual change in surface and atmospheric conditions between 1970 and 1997

- the models without trace gas effects

- the models with trace gas effects

might reach a different conclusion to Gary Thompson.

The radiative transfer equations, as part of the modeled results, have done a pretty good job of explaining the observations, though the match isn’t exact. However, if we don’t include the effect of trace gases in the model, we can’t explain some of the observed features: just compare the earlier graphs of model results with and without trace gases.

It’s possible that the biggest error comes from the water vapor effect not being modeled well. If you compare observed vs model (the last 2 sets of graphs) from 800cm-1 to 1000cm-1, there seems to be a “trend line” error. Water vapor has the potential to cause the most variation for two reasons:

- water vapor is a strong greenhouse gas

- water vapor concentration varies significantly vertically through the atmosphere and geographically (due to local vaporization, condensation, convection and lateral winds)

It’s also the case that solving the radiative transfer equations with “band models” introduces some error compared with the “line by line” (LBL) codes for all trace gases (a subject for another post, but see note 2 below). Climate models, even simple 1-d profiles, are rarely run with LBL codes, because the very detailed absorption lines of every single gas take a huge amount of computer time.
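Why does the line-by-line detail matter so much? A toy illustration: within a band the absorption coefficient swings enormously between line centers and the gaps between lines, and averaging it before applying Beer’s law gets the transmittance wrong. The “naive band” shortcut below is deliberately crude and is not any band model used in practice (real schemes, such as random band models or the correlated-k method, are designed to avoid exactly this error); the line positions, strengths and widths are all hypothetical.

```python
import math


def lorentz(nu, center, strength, half_width):
    """Absorption coefficient of one Lorentz-broadened line (arbitrary units)."""
    return strength * (half_width / math.pi) / ((nu - center) ** 2 + half_width ** 2)


# Hypothetical band: 20 identical lines, 1 cm^-1 apart, half-width 0.07 cm^-1.
CENTERS = [700.5 + i for i in range(20)]
GRID = [700.0 + 0.001 * j for j in range(20001)]   # fine spectral grid, 700-720 cm^-1
K = [sum(lorentz(nu, c, 1.0, 0.07) for c in CENTERS) for nu in GRID]


def lbl_transmittance(u):
    """'Line by line': transmittance at every grid point, then averaged over the band."""
    return sum(math.exp(-k * u) for k in K) / len(K)


def naive_band_transmittance(u):
    """Naive shortcut: average the absorption coefficient first, then apply Beer's law once."""
    k_mean = sum(K) / len(K)
    return math.exp(-k_mean * u)


for u in (0.1, 1.0, 10.0):   # hypothetical absorber amounts
    print(f"u = {u:>4}: LBL {lbl_transmittance(u):.4f}, naive band {naive_band_transmittance(u):.6f}")
```

Because the exponential is convex, the naive average always overestimates absorption, and badly so once the line centers saturate while the gaps between lines stay transparent. Resolving that structure is what makes LBL codes so expensive.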

The band models get good but not perfect results; however, they are much quicker to run.

Comparing spectra from two different real-world situations, one of which has higher sea surface temperatures, and declaring the death of the model seems premature. Perhaps Gary ran the RTE calculations through a pen-and-paper or pocket-calculator model, as so many others have done.

There is a reason why powerful computers are needed to solve the radiative transfer equations, and even then the results won’t be perfect. But for those who want to see a better experiment comparing real and modeled conditions, take a look at Part Six – Visualization. There, actual measurements of humidity and temperature through the atmosphere were taken, the detailed spectrum of downward longwave radiation was measured, and the modeled and measured values were compared.

The results might surprise even Gary Thompson.

Notes:

1. Spectroscopists have long expressed wavelength as wavenumber, in cm-1. The conversion is very simple: wavelength in μm = 10,000 / wavenumber in cm-1.

e.g. CO2 central absorption wavelength of 15μm => 667cm-1 (=10,000/15)
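The conversion is its own inverse, so one formula works in both directions. A tiny helper (the function names are my own) applied to the wavenumbers that appear in this post:

```python
def wavenumber_to_wavelength_um(wavenumber_cm):
    """Wavelength in micrometres from wavenumber in cm^-1: 10,000 / wavenumber."""
    return 10_000.0 / wavenumber_cm


def wavelength_um_to_wavenumber(wavelength_um):
    """Wavenumber in cm^-1 from wavelength in micrometres: 10,000 / wavelength."""
    return 10_000.0 / wavelength_um


# Wavenumbers mentioned in this post, converted to wavelength.
for wn in (667, 700, 710, 750, 1040, 1300, 1400):
    print(f"{wn:>5} cm^-1  =  {wavenumber_to_wavelength_um(wn):5.2f} um")
```

Because wavelength and wavenumber are inversely related, equal steps in one are not equal steps in the other, which is why the μm labels under a cm-1 axis are unevenly spaced.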

2. Solving the radiative transfer equations through the atmosphere requires knowledge of the absorption spectrum of each gas. These spectra are extremely detailed, and consequently the numerical solution of the equations requires days or weeks of computational time. The detailed versions are known as LBL (line by line) transfer codes. The approximations, often accurate to within 10%, are called “band models”. These require much less computational time, and so band models are almost always used.

On Having a Laugh – by Gerlich and Tscheuschner (2009)

Posted in Commentary on March 14, 2010

There’s a paper out which has created some excitement, On Falsification Of The Atmospheric CO2 Greenhouse Effects, by Gerlich & Tscheuschner (2009). It was published in International Journal of Modern Physics B. I don’t know what the B stands for.

Usually I would try to read a paper all the way through to understand it, then reread it, but I only got as far as page 55 out of 115. Even the seminal Climate Modeling through Radiative-Convective Models paper by Ramanathan & Coakley (1978) only had 25 pages.

Quite a few points have already jumped out at me that made me not want to read the whole thing:

First, a lot of time was spent showing that greenhouses and bodies surrounded by glass (or anything that stops air movement) retain heat not because of absorption and reradiation of longwave energy but because convection is reduced.

Why spend so long on it when everyone agrees? Sadly, the atmospheric effect became known as the “greenhouse effect” because the term passed into common language, even though it is not the right description.

Even in CO2 – An Insignificant Trace Gas? Part Six – Visualization I said:

I didn’t mention greenhouses, because the greenhouse isn’t a good analogy.

This is a concern if it’s a serious paper, because attacking an argument that nobody is actually making is the strawman fallacy, a refuge of people with no strong argument.

Here is a nice example, commenting on a paper by Lee, who says that the “greenhouse” term is a misnomer:

The authors of this paper don’t actually explain where Lee’s equations are questionable. Instead they draw attention to a day that should be marked down in history, and use that to show that anyone mentioning “greenhouses” has got it wrong.

None of the papers that discuss the radiative-convective method actually argue from the greenhouse. So why are the authors of this paper spending so much time on it?

Second, attacking poor presentations with a mixture of correct (but really irrelevant) and incorrect arguments.

They cite not a paper but an encyclopedia.

Nice. They have brought this up a few times. Yes, technically we call infrared the part of the radiation with wavelengths longer than visible light, so anything >700nm is infrared. And yet, in common terminology, often repeated to the point of pain, we use “longwave” to mean radiation above 4μm, because about 99% of the radiation emitted by the earth and its atmosphere is at these wavelengths, and we use “shortwave” to mean radiation below 4μm, because about 99% of solar radiation is there.
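The 4μm split is easy to check with a blackbody calculation. This sketch assumes the sun radiates as a blackbody at about 5778K and the earth’s surface as a blackbody at about 288K (both simplifications; the colder atmosphere emits even further into the longwave), and uses the standard series for the fraction of blackbody emission below a given wavelength:

```python
import math

C2_UM_K = 14387.77   # second radiation constant h*c/k, in micrometre kelvin


def fraction_below(wavelength_um, temp_k, terms=200):
    """Fraction of blackbody emission at wavelengths shorter than `wavelength_um`.
    Series for (15/pi^4) * integral from x to infinity of t^3/(e^t - 1) dt,
    where x = c2 / (wavelength * T)."""
    x = C2_UM_K / (wavelength_um * temp_k)
    total = 0.0
    for n in range(1, terms + 1):
        total += math.exp(-n * x) / n * (x**3 + 3 * x**2 / n + 6 * x / n**2 + 6 / n**3)
    return 15.0 / math.pi**4 * total


sun = fraction_below(4.0, 5778.0)          # approximate solar photosphere temperature
earth = 1.0 - fraction_below(4.0, 288.0)   # approximate mean surface temperature
print(f"Sun:   {100 * sun:.1f}% of emission is below 4 um")
print(f"Earth: {100 * earth:.1f}% of emission is above 4 um")
```

Both fractions come out at roughly 99% or more, which is why 4μm makes such a clean dividing line between “shortwave” and “longwave”.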

So their first “disproof” isn’t a disproof. And their second is simply picking on a terminology mistake in an encyclopedia. Yes, the encyclopedia has muddled its description of the phenomenon.

Why are they citing from this source?

Third, another example of “destroying” the opponent’s argument.

They quote another source:

And comment:

That isn’t what their source actually said. Their source didn’t say, or imply, that radiation was emitted only downward.

Fourth, and most importantly, the paper gives the appearance of reviewing prior work, but what it actually discusses is a mix of very old work and many more recent comments that people made in their “introductions” to papers on quite different subjects. That is, they cite papers which are introducing another subject and are not attempting any formal proof of the inappropriately named “greenhouse effect”. They don’t discuss the relevant modern work that demonstrates the relevance of the radiative transfer equations and solves them.

They do reference one key paper but never discuss it to point out any problems.

The paper in question is S. Manabe and R.F. Strickler, Thermal Equilibrium of the Atmosphere with a Convective Adjustment, J. Atmos. Sci. 21, 361-385 (1964).

It is referenced through this quote:

The work that most recent papers on solving the radiative transfer equations discuss or cite is Ramanathan and Coakley (1978), often along with a citation of M&S, and of course Ramanathan and Coakley cite and discuss Manabe and Strickler (1964). By recent papers I mean those of anyone calculating the effect of CO2 and other trace gases on surface temperatures.

Why not open up these two great papers and show the flaws? Ramanathan and Coakley are never even cited. Manabe and Strickler aren’t discussed.

R&C’s paper is 25 pages long and works through a lot of thermodynamics. If Gerlich & Tscheuschner want to make their case, they should show its flaws. It should be a breeze for them.

This doesn’t instill any confidence in the paper. I started writing this post a few weeks ago, and at the time I wrote:

I’m sure they would say otherwise. And I’m certain we would get on great over a few drinks. If we drank enough, I’m sure they would admit they did it for a bet.

Non-Conclusion

If Gerlich & Tscheuschner want to be taken seriously, maybe they can write a paper which is 20-30 pages long (that should be enough) and ignore greenhouses, encyclopedia references and what people say in the introductions to less relevant works.

Their paper could reference and discuss recent work which demonstrates and solves the radiative transfer equations from first principles, and show the flaws in that work. Use Ramanathan and Coakley (1978); everyone else references it.

On that paper: in Climate Modeling through Radiative-Convective Models, R&C are into the maths by page 2 and don’t mention greenhouses. I would recommend this excellent paper (you should be able to find it online without paying) to anyone who wants to learn more about the approach to solving this difficult but well-understood problem. Even if you don’t want to follow their maths, there is lots to learn.

Gerlich & Tscheuschner waste 50 pages on irrelevance and poorly directed criticism. If they have produced a great insight, it will be lost on many.

In New Theory Proves AGW Wrong! I commented that many ideas come along which are widely celebrated.

Some “disprove” the “greenhouse effect” or modify it to the extent that if their ideas are correct our ideas about the (inappropriately named) greenhouse effect are quite wrong.

Some disprove AGW (anthropogenic global warming). There is a world of difference between the two.

This paper falls into the first category. I also commented that the papers in the first category usually disprove each other as well, so it’s not “one more nail in the greenhouse effect” – it’s “one more nail in the last theory” and the theories that will inevitably follow.

Interestingly (for me), since I wrote that article, New Theory Proves AGW Wrong!, someone produced a list of a few papers that I should “disprove”. One was this paper by Gerlich and Tscheuschner, another was by Miskolczi. Yet they disprove each other, and both disprove what this person promoted as their own theory.

This doesn’t prove anyone wrong, just that they can’t all be right. At most one of them can be.

And I’ll be the first to admit I haven’t proven Gerlich and Tscheuschner wrong in their central theory. I have pointed out a few “areas for improvement” in their paper, but these are all distractions from the main event. There is more interesting stuff to do.

Update – new post: On the Miseducation of the Uninformed by Gerlich and Tscheuschner (2009)
