I am very much a novice with statistics. Until recently I have avoided stats in climate, but of course, I keep running into climate science papers which introduce some statistical analysis.

So I decided to get up to speed and this series is aimed at getting me up to speed as well as, hopefully, providing some enlightenment to the few people around who know less than me about the subject. In this series of articles I will ask questions that I hope people will answer, and also I will make confident statements that will turn out to be completely or partially wrong – I expect knowledgeable readers to put us all straight when this happens.

One of the reasons I have avoided stats is that I have found it difficult to understand the correct application of the ideas from statistics to climate science. And I have had a suspicion (that I cannot yet prove and may be totally wrong about) that some statistical analysis of climate is relying on unproven and unstated assumptions. All for the road ahead.

First, a few basics. They will be sketchy basics – to avoid it being part 30 by the time we get to interesting stuff – and so if there are questions about the very basics, please ask.

In this article:

- independence, or independent events

- the normal distribution

- sampling

- central limit theorem

- introduction to hypothesis testing

Independent Events

A lot of elementary statistics ideas are based around the idea of independent events. This is an important concept to understand.

One example would be flipping a coin. The value I get this time is totally independent of the value I got last time. Even if I have just flipped 5 heads in a row, assuming I have a normal unbiased coin, I have a 50/50 chance of getting another head.

Many people, especially people with “systems for beating casinos”, don’t understand this point. Although there is only a 1/2^5 = 1/32 = 3% chance of getting 5 heads in a row, once it has happened the chance of getting one more head is 50%. Many people will calculate the chance – in advance – of getting 6 heads in a row (=1.6%) and say that because 5 heads have already been flipped, therefore the probability of getting the 6th head is 1.6%. Completely wrong.

Another way to think about this interesting subject is that getting H T H T H T H T is just as unlikely as getting H H H H H H H H. Both have a 1/2^8 = 1/256 = 0.4% chance.

On the other hand, getting 4 heads and 4 tails out of 8 throws is much more likely, so long as you don’t specify the order like we did above.
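The coin-flip arithmetic above can be checked with a few lines of Python (used here instead of Matlab purely for illustration). This is a minimal sketch that assumes a fair coin; the function names are made up for this example.

```python
from fractions import Fraction
from math import comb


def prob_specific_sequence(n_flips):
    """Probability of any one exact sequence of n fair-coin flips."""
    return Fraction(1, 2) ** n_flips


def prob_k_heads(n_flips, k_heads):
    """Probability of exactly k heads in n flips, in any order."""
    return comb(n_flips, k_heads) * prob_specific_sequence(n_flips)


five_heads = prob_specific_sequence(5)       # 5 heads in a row
six_heads = prob_specific_sequence(6)        # 6 heads in a row, computed in advance
sixth_given_five = six_heads / five_heads    # 6th head, given 5 heads already flipped
exact_order_8 = prob_specific_sequence(8)    # H T H T H T H T, or 8 heads
four_of_eight = prob_k_heads(8, 4)           # 4 heads and 4 tails, order ignored

print(f"5 heads in a row:            {float(five_heads):.1%}")
print(f"6 heads in a row:            {float(six_heads):.1%}")
print(f"6th head after 5 heads:      {float(sixth_given_five):.1%}")
print(f"one exact 8-flip sequence:   {float(exact_order_8):.1%}")
print(f"4 heads, 4 tails, any order: {float(four_of_eight):.1%}")
```

There are 70 different orderings of 4 heads and 4 tails, which is why that outcome is so much more likely than any single specified sequence.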

If you send 100 people to the casino for a night, most will lose “some of their funds”, a few will lose “a lot”, and a few will win “a lot”. That doesn’t mean the winners have any inherent skill, it is just the result of the rules of chance.

A bit like fund managers who set up 20 different funds, then after a few years most have done “about the same as the market”, some have done very badly and some have done well. The results from the best performers are published, the worst performers are “rolled up” into the best funds and those who understand statistics despair of the standards of statistical analysis of the general public. But I digress.

Normal Distributions and “The Bell Curve”

The well-known normal distribution describes a lot of stuff unrelated to climate. The normal distribution is also known as a gaussian distribution.

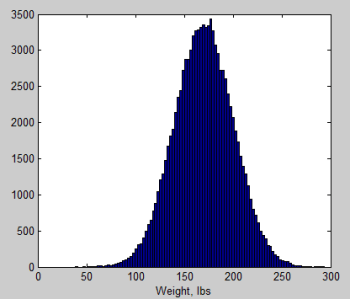

For example, if we measure the weights of male adults in a random country we might get a normal distribution that looks like this:

Figure 1

Essentially there is a grouping around the “mean” (= arithmetic average) and outliers are less likely the further away they are from the mean.

Many distributions match the normal distribution closely. And many don’t. For example, rainfall statistics are not Gaussian.

The two parameters that describe the normal distribution are:

- the mean

- the standard deviation

The mean is the well-known concept of the average (note that “the average” is a less-technical definition than “the mean”), and is therefore very familiar to non-statistics people.

The standard deviation is the measure of the spread of the population. In the example of figure 1 the standard deviation = 30. A normal distribution has 68% of its values within 1 standard deviation from the mean – so in figure 1 this means that 68% of the population are between 140-200 lbs. And 95% of its values are within 2 standard deviations from the mean – so 95% of the population are between 110-230 lbs.
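The 68% and 95% figures can be reproduced from the normal distribution itself. The sketch below uses Python's standard library; the mean of 170 lbs is an assumption, taken as the midpoint of the 140-200 lbs range quoted for figure 1, and `fraction_within` is a made-up helper name.

```python
from statistics import NormalDist

MEAN_LBS = 170  # assumed: midpoint of the 140-200 lbs range quoted above
SD_LBS = 30     # standard deviation stated for figure 1

weights = NormalDist(mu=MEAN_LBS, sigma=SD_LBS)


def fraction_within(dist, k):
    """Fraction of a normal population within k standard deviations of the mean."""
    low = dist.mean - k * dist.stdev
    high = dist.mean + k * dist.stdev
    return dist.cdf(high) - dist.cdf(low)


within_1sd = fraction_within(weights, 1)
within_2sd = fraction_within(weights, 2)

print(f"within 1 sd (140-200 lbs): {within_1sd:.1%}")
print(f"within 2 sd (110-230 lbs): {within_2sd:.1%}")
```

The same fractions come out whatever mean and standard deviation are used, because they depend only on how many standard deviations from the mean you go.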

Sampling

If there are 300M people in a country and we want to find out their weights it is a lot of work. A lot of people, a lot of scales, and a lot of questions about privacy. Even under a dictatorship it is a ton of work.

So the idea of “a sample” is born. We measure the weights of 100 people, or 1,000 people, and as a result we can make some statements about the whole population.

The population is the total collection of “things” we want to know about.

The sample is our attempt to measure some aspects of “the population” without as much work as measuring the complete population.

There are many useful statistical relationships between samples and populations. One of them is the central limit theorem.

Central Limit Theorem

Let me give an example, along with some “synthetic data”, to help get this idea clear.

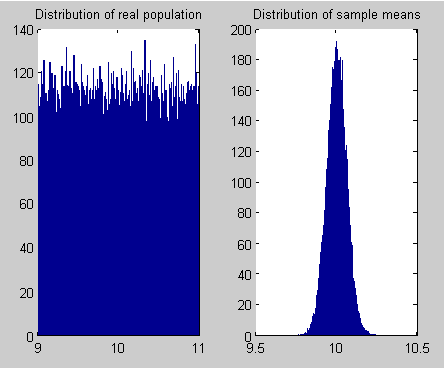

I have a population of 100,000 with a uniform distribution between 9 and 11. I have created this population using Matlab.

Now I take a random sample of 100 out of my population of 100,000. I measure the mean of this sample. Now I take another random sample of 100 (out of the same population) and measure the mean. I do this many many many times (100,000 times in this example below). What does the sampling distributions of the mean look like?

Figure 2

Alert readers will see that the sampling distribution of the means – right side graph – looks just like the “bell curve” of the normal distribution. Yet the original population is not a normal distribution.

It turns out that regardless of the population distribution, if you have enough items in your sample, you get a normal distribution (when you plot the mean of each sample distribution).

The mean of this normal distribution (the sampling distribution of the mean) is the same as the mean of the population, and its standard deviation is s = σ/√n, where σ = standard deviation of the population, and n = number of items in one sample.

This is the central limit theorem – in non-technical language – and is the reason why the normal distribution takes on such importance in statistical analysis. We will see more in considering hypothesis testing.
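The experiment described in this section is easy to repeat. The following is a rough Python sketch, not the original Matlab: it uses the same population (100,000 values, uniform between 9 and 11) and the same sample size of 100, but only 2,000 samples instead of 100,000 to keep the run short. The seed and variable names are arbitrary choices for this example.

```python
import random
import statistics
from math import sqrt

random.seed(1)  # fixed seed so the run is repeatable

POP_SIZE = 100_000
SAMPLE_SIZE = 100
N_SAMPLES = 2_000  # the article used 100,000; fewer keeps this quick

# Uniform population between 9 and 11, as in the article
population = [random.uniform(9, 11) for _ in range(POP_SIZE)]
pop_mean = statistics.fmean(population)
sigma = statistics.pstdev(population)

# Draw many random samples and record the mean of each one
sample_means = [
    statistics.fmean(random.sample(population, SAMPLE_SIZE))
    for _ in range(N_SAMPLES)
]

mean_of_means = statistics.fmean(sample_means)
observed_sd = statistics.stdev(sample_means)
predicted_sd = sigma / sqrt(SAMPLE_SIZE)  # s = sigma / sqrt(n)

print(f"population mean      : {pop_mean:.4f}")
print(f"mean of sample means : {mean_of_means:.4f}")
print(f"sd of sample means   : {observed_sd:.4f}")
print(f"sigma / sqrt(n)      : {predicted_sd:.4f}")
```

The spread of the sample means should land close to σ/√n, and a histogram of `sample_means` should show the bell shape even though the population is flat.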

Hypothesis Testing

We have zoomed through many important statistical ideas and for people new to the concepts, probably too fast. Let’s ask this one question:

If we have a sample can we assess how likely it is that it was drawn from a particular population?

Let’s pose the problem another way:

The original population is unknown to us. How do we determine the characteristics of the original population from the sample we have?

Because the probabilities around the normal distribution are very well understood, and because the sampling distribution of the mean has a normal distribution, this means that if we have just one sample we can calculate the probability that it has come from a population of specified mean and specified standard deviation.
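As a sketch of what such a calculation might look like, the Python below asks how probable it is to see a sample mean at least as far from the specified population mean as the one observed, if the sample really did come from that population. It relies on the normal approximation from the central limit theorem. The sample means of 10.05 and 10.20 are invented numbers, and `two_sided_p_value` is a made-up name for this example.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p_value(sample_mean, n, pop_mean, pop_sd):
    """Probability of a sample mean at least this far from pop_mean,
    assuming the sample came from the specified population and the
    sampling distribution of the mean is normal."""
    standard_error = pop_sd / sqrt(n)  # s = sigma / sqrt(n)
    z = (sample_mean - pop_mean) / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Specified population: uniform between 9 and 11, as in the earlier example
pop_mean = 10.0
pop_sd = 2 / sqrt(12)  # standard deviation of a uniform distribution of width 2

# Two hypothetical samples of 100 items each
p_close = two_sided_p_value(10.05, 100, pop_mean, pop_sd)
p_far = two_sided_p_value(10.20, 100, pop_mean, pop_sd)

print(f"sample mean 10.05: p = {p_close:.3f}")
print(f"sample mean 10.20: p = {p_far:.5f}")
```

A small value says the sample would be very unusual for that population; a large value says only that the sample is consistent with it.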

More in the next article in the series.

Not being rude, but this is stats 101 – or even high-school. Not very relevant to modern climatology.

A lot of the historical climate stuff that’s important is Factor Analysis, of which the variant Principal Component Analysis (PCA) is the most contentious and Principal Factor Analysis (PFA) is the most ‘acceptable’.

Factor analysis looks at multiple time series of different data types and attempts to create a simplified model that has fewer variables than the original set.

The math is generally the same and involves calculating eigenmatrices from the input data and then rotating the matrix to achieve an extreme of variance.

PCA searches for maximum variance. PFA seeks minimum variance.

The devil is in the criteria to remove factors/components – hence simplify the model.

The big argument at present is that ‘historical’ climatologists use PCA with dubious ‘reduction’ selection criteria that can significantly bias the result based on subjective criteria.

It’s not a mathematical argument, it’s an interpretation argument.

The very big point of contention is historical tree-ring data and the present mainstream concentration of PCA ‘energy’ into a very small time-series data set – i.e. Yamal.

It is true, this is stats 101. However, the readership here is from a diverse background.

It is common – just to pick one example – to find unfavorable comments on blogs about “false assumptions of normal distributions” which demonstrate that the writer doesn’t understand the central limit theorem.

I like to cover basics, because that way I have an article to refer to with said basics, and readers who want to learn but don’t have stats 101 get an opportunity to start to understand the basics.

And there is a lot of statistical analysis of climate which is nothing to do with tree-ring data and the hockey stick debate of the last 1000 years of climate history.

Correct treatment of temperature data in the light of auto-correlation of temperature, just as one example.

SOD: It is common – just to pick one example – to find unfavorable comments on blogs about “false assumptions of normal distributions” which demonstrate that the writer doesn’t understand the central limit theorem.

Just to be clear, you don’t always get normal (Gaussian) distributions out of averaging of a series of numbers. Necessary conditions include that the individual samples are uncorrelated, and that the distribution is homoscedastic (variance of all samples is the same).

The average of values from a distribution that changes over time, such as might happen if the net radiative forcing of climate changes over time, would probably not have a normal error associated with it (violation of homoscedasticity).

Another example is log-normal distributions (or “log-normal like“), which crop up frequently in meteorology due to the correlated (1/f^n) nature of the weather/climate noise.

Finally, even when the sum of a number of values from a distribution approaches normality, it doesn’t do so uniformly: the tail of the distribution associated with a finite sum approaches normality more slowly than the centroid of the distribution.

Since it’s precisely the tail that we are usually interested in, this can lead to erroneous conclusions about the uncertainty of the result (often the assumption of normalcy underestimates the uncertainty of the result.)

It might be stats 101, but that’s where I start. It might be a long way until we get to climate stats, but I think it is a good thing to recall some of the basics.

Looking forward to the continuation of this series – I often find that whilst I have a good grasp of the physical side of climate change, the stats can often be befuddling. Cheers!

Thanks for this. I’m looking forward to more. The plain English explanations suit me just fine.

Your explanation of factor analysis and principal components analysis is not correct. Factor analysis (FA) tries to explain the correlations between sets of items based on underlying dimensions known as factors. A principal axis factoring is a type of factor analysis, and probably the most common type. The shared variance between the items is the factor. A principal components analysis (PCA) is not a factor analysis, as it does not try to explain the common dimensions, but rather tries to explain all the variance. PCA is used in genomics research, where one wants to use a component that is simply a combination of all the genes that code for a protein: it’s not just what’s common, but it’s everything. Time series analysis is an independent area of statistics from factor analysis. However, recently, people have done analyses called “Dynamic Factor Analysis” (DFA) where they’ve crossed a time series analysis with a factor analysis. If you’re looking at only one thing (i.e., temperature), there’s no need to do a factor analysis. However, if you’re a psychologist looking at something like IQ which has many parts and want to see how the parts go together over time, the crossing of time series and FA in the DFA framework is necessary.

In climatology it is the time series of different parameters that are used. For example historical tree data or ice cores or pollen counts.

The ‘result’ is a time series model of the original data reduced from say 20 time series to say 5 time series – representing a simplified model of an originally complex measurement.

I’ve used factor analysis in this way on time series data such as economic parameters or education outcomes. It can also be used for example with time series of data from a farm to evaluate the contribution and relationship of different inputs to different outputs.

I sense there will be a lot for me to learn in this series. Looking forward to the sequels.

Thanks for this, can I put out my own question? In my mind this is about the importance of stats versus physics, maybe it’s not actually relevant.

It’s about statements such as “the hottest in 100 years” or “the wettest in 200 years”. I’m just interested what we are meant to make of these statements? It was brought home to me with the Queensland floods this year but is maybe also relevant with the heatwave hitting the US. If we take the example of Queensland then rainfall isn’t randomly distributed. It mainly rains in the wet season (Dec-March), the heaviest rain falls in La Nina years and the very highest rain years usually also include landfall from one or more tropical storms. Maybe there are only 20-30 La Nina years in the past 200 years. Should the Queensland flood be a 1 in 20 year event? More generally does stats mean much when we can get a better handle on these sorts of events by looking at real physical processes?

HR,

Hypothesis testing is, IMO, the main reason for stats. For example, is there a trend in some variable that is significantly different from no trend? The problem with things like temperature time series is that the data aren’t i.i.d. (independent, identically distributed). That means the quick and dirty calculation of variance will probably be too low.
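To illustrate the point about non-i.i.d. data, here is a minimal Python sketch. It uses a first-order autoregressive series, x[t] = phi * x[t-1] + noise, as a hypothetical stand-in for an autocorrelated record (real temperature series are more complicated). It compares the “quick and dirty” standard error of the mean, sd/√n, with the spread of the mean actually seen across many simulated series. All names and parameter values are made up for this example.

```python
import random
import statistics
from math import sqrt

random.seed(2)  # fixed seed so the run is repeatable


def ar1_series(n, phi):
    """n points of x[t] = phi * x[t-1] + white noise.
    A hypothetical stand-in for autocorrelated data."""
    # Start from the stationary distribution of the process
    x = random.gauss(0, 1) / sqrt(1 - phi ** 2)
    series = []
    for _ in range(n):
        x = phi * x + random.gauss(0, 1)
        series.append(x)
    return series


def naive_vs_actual(n, phi, trials=1000):
    """Return (average naive standard error, actual sd of the series means)."""
    means, naive_ses = [], []
    for _ in range(trials):
        s = ar1_series(n, phi)
        means.append(statistics.fmean(s))
        naive_ses.append(statistics.stdev(s) / sqrt(n))  # assumes i.i.d. data
    return statistics.fmean(naive_ses), statistics.stdev(means)


naive_iid, actual_iid = naive_vs_actual(n=100, phi=0.0)
naive_ar, actual_ar = naive_vs_actual(n=100, phi=0.7)

print(f"phi = 0.0: naive {naive_iid:.3f}, actual {actual_iid:.3f}")
print(f"phi = 0.7: naive {naive_ar:.3f}, actual {actual_ar:.3f}")
```

With phi = 0 the two numbers should roughly agree; with phi = 0.7 the naive figure should come out well below the actual spread, which is the sense in which the quick calculation is “too low”.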

There’s also the question of the test power. There are two types of errors in statistical tests, rejecting a hypothesis when it shouldn’t be rejected and failing to reject when it should be (Type I and Type II, although I probably have them backwards from the standard nomenclature). Generally, if you minimize one of these errors, you maximize the other. Some tests are better, have more power, than others because for a given rate of Type I error, the more powerful test will have a lower rate of Type II error. As I remember, the probability usually quoted, as in 95% confidence limits, is the Type I error rate.
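The two error rates can be estimated by simulation. The Python sketch below runs a two-sided 5% test of “the mean is 0” many times, with the population standard deviation assumed known: once when the hypothesis is true, which gives the Type I rate, and once when the true mean is 0.5, which gives the power and hence the Type II rate. The sample size, the shift of 0.5, and all names are illustrative choices rather than anything from the discussion above.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean

random.seed(3)  # fixed seed so the run is repeatable

Z_CRIT = NormalDist().inv_cdf(0.975)  # critical value for a two-sided 5% test


def rejection_rate(true_mean, null_mean=0.0, sd=1.0, n=25, trials=4000):
    """Fraction of simulated samples for which the null mean is rejected."""
    rejections = 0
    for _ in range(trials):
        sample = [random.gauss(true_mean, sd) for _ in range(n)]
        z = (fmean(sample) - null_mean) / (sd / sqrt(n))
        if abs(z) > Z_CRIT:
            rejections += 1
    return rejections / trials


type_1_rate = rejection_rate(true_mean=0.0)  # null is true, yet rejected
power = rejection_rate(true_mean=0.5)        # null is false and rejected
type_2_rate = 1 - power                      # null is false, not rejected

print(f"Type I error rate : {type_1_rate:.3f}")
print(f"Power             : {power:.3f}")
print(f"Type II error rate: {type_2_rate:.3f}")
```

The Type I rate should sit near the chosen 5%, while the Type II rate depends on the sample size and on how far the true mean is from the hypothesised one.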

Personally, I would avoid the statistics of paleoclimate reconstruction from proxy data entirely. It’s a can of worms that needs a lot of background to even begin to understand. Unfortunately, a lot of the people who do it don’t appear to have the necessary background either and have failed to consult with anyone who does.

‘Sage advice’ DeWitt. 🙂

Best regards, Ray.

I am with benpal on this – just starting to brush up my stats from a long time ago so I have a better chance of understanding some of the statistically based arguments. I am quite looking forward to the next in the series!

Good show SOD this is of value to us all. SPC rules or did rule the auto industry because of Deming so if quality is brought forth that is a good thing.

I decided to go back and play with adding noise together that has a power spectral density (PSD) of the form 1/f^nu. This is a follow-up to my prior comment that you don’t always reduce to the central limit theorem in large N, and one of the places where this happens is in fact climate science (they are likely to have 1/f^nu type noise sources and heteroscedastic noise to boot).

Like SOD, I use MATLAB/Octave for most of my work. I don’t have the slightest idea how you’d implement this in R, though I’m sure it’s very doable.

First here’s a function that produces an interval of 1/f^nu noise.

Here’s the usage:

function noise = fnoise(npoints, nu)

Just give it how many points you want to generate, and the exponent for the noise, and it does the rest. One note, it is set to give zero DC offset. For S(f) = 1/f^nu noise, this means S(0) = 0.

Secondly, here’s a function that computes the cumulative sum of navg consecutive points for a 1/f^nu distribution.

Again here’s the usage:

function anoise = fnoiseavg(ntrials, navg, nu)

Note that this function has fnoise embedded in it (trying to make it simpler for people who don’t have great matlab familiarity).

I’ve also written a driver that demonstrates the effect of varying nu.

This should have all the functions needed to make it run.

Here’s what the waveforms look like.

And the spectra…

Finally the histograms.

What you find (briefly) is that the only case where you get true gaussian noise in the limit of large n is nu = 0. However, this is the case for “white noise”, which is uncorrelated. Values of nu > 0 (“red noise”) give either fattened (platykurtic) or “double peaked” distributions.

I can explain why that happens, but it’s a bit technical.

The values of nu used were -2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, and 2.0. (The color selections are the same in each case.)

The matlab script I’ve linked to generates PDF plots, but they will look visually different than the case I’ve posted here.

Sorry, I muffed the URL for the histograms

I also flubbed at least the link to fnoise.m. Here it is as well as a tarball of the project, which includes the text files for the spectra, histograms, and matlab pdfs for one particular run.

Kudos, for a very readable explanation of the Central Limit Theorem. I find myself constantly looking up terms in an old stats book when reading certain papers and a beginning summary that is accessible from all backgrounds is woefully needed. If you do not use the stats often, you forget it quickly.

As usual, I am somewhat late to the party, but I would like to add my thanks for this series of articles. So much of climate science relies on stats and your articles are a great way of helping me figure out how much I don’t know!

I like this start, it’s good for those who wish to understand the principles first before diving into contentious methods such as PCA and its alleged misuse by Mann et al in the infamous Hockey Stick. Where did that get us? I see much misuse of statistics, it’s OK using fancy models etc but models basically tell you what you put in, if that’s garbage then that’s what you get out. Bit like the incorrect use of any complex statistical procedure, which have underlying assumptions. The availability of high powered programs often hides the inability of the user to understand when things go wrong, as alleged in the case of Mann. One should not hide behind an all empowering computer program which one cannot fully comprehend.

There are basic principles of statistics and inference that the AGW proponents fail on. No matter what fancy methods you use they will not fix fundamental problems with the data captured. The whole AGW inference mechanism is fatally flawed and will serve in future as a perfect example of the misuse of statistics. For now post-modern science rules, propagated by those who wish to garner fame or fortune and fuelled by a parasitic industry that feeds on our fears.

This post may contain elementary principles but if one thinks behind the issues at what can really go wrong then they are probably more than enough to categorise what is going wrong in this fiasco of our times. Question: how was the 90% sure figure that the IPCC quote (as the likelihood of the A in AGW being right) determined? It’s rather simpler I suspect than using the Normal Distribution, application of the Central Limit Theorem, Sampling Theory, Hypothesis Testing or even flipping coins. It’s what most people call, in technical terms, an off the top of one’s head estimate.

Another question: When gathering information about something and forming opinion will one get a balanced view by review of the papers? You’re in effect summarising data, will the average of the paper views have a nice distribution (CLT) that we can infer from? Sure if you take enough info then the distribution will tend to be normal (woohoo!) but it’s hiding a fundamental problem with the data in terms of bias (unless the papers are unbiased). So you get a nice normal distribution for your mean but because the data were biased in the first place it’s located around the wrong mean and very likely has the wrong spread. In technical terms it’s rubbish.

Just to clarify Jeremy’s comment, PCA searches for maximum EXPLAINED variance…