Recap

This post is a follow-on from my original article: American Thinker – the Difference between a Smoking Gun and a Science Paper.

Gary Thompson, who wrote the article in American Thinker that prompted my article, was kind enough to make some comments and clarifications. Instead of responding in the comments to the first article, I thought it was worth a new post, especially to get the extra formatting that is allowed in posts rather than comments.

I appreciate him commenting and I’m sure he has a thick skin, but just in case, any criticisms are aimed at getting to the heart of the matter, rather than at him – or anyone else who has different views.

For people who have landed at this post and haven’t read the original: the heart of Gary’s article was three papers, of which I examined one (the first). The paper compared two 3-month periods, 27 years apart, in the East and West Pacific. Gary commented that the actual OLR (outgoing longwave radiation) was higher in the later period in the important CO2 band (or what we could see of it).

His claim – the theory says that more CO2 should lead to less emission at those wavelengths and therefore the theory has been disproved.

My point – Gary doesn’t understand the theory. The temperature was higher in the later period in this region, and therefore the radiation leaving the earth’s surface would be higher. We’ll see this explained again, but did I mention that you should read Gary’s article and my article before moving forward? Also, at the end of the Science of Doom post you can see Gary’s comments. It’s always worth reading what people actually wrote, rather than the parts of their words highlighted by someone (me) with the opposite point of view..

The Unqualified Statements in Papers

Gary started by saying:

I know why the authors of the papers were using climate models to simulate the removal of effect from surface temperatures and humidity and that the ‘theory’ says you must do that. But my problem lies in two peer reviewed papers that casts doubt on that theory and that method.

And cites two papers. The first, a 1998 paper: The Trace Gas Greenhouse Effect and Global Warming by the great V. Ramanathan (I will continue to call him ‘great’ even though he didn’t reply to my email about his 1978 paper.. possibly busy, but still..).

I recommend this 1998 paper to everyone reading this article. Even though it is 12 years old, it is all relevant and a very readable summary.

The Great Ramanathan

Gary pointed out page 3 where statements appeared to back up his (Gary’s) interpretation of the later OLR study. Here’s what Ramanathan said:

Why does the presence of gases reduce OLR? These gases absorb the longwave radiation emitted by the surface of the earth and re-emit to space at the colder atmospheric temperatures. Since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere. The fundamental cause for this trapping is that the surface is warmer than the atmosphere; by the same reasoning decrease of temperature with altitude also contributes to the trapping since radiation emitted by the warmer lower layers are trapped in the regions above.

By deduction.. an increase in a greenhouse gas such as CO2 will lead to a further reduction in OLR. If the solar absorption remains the same, there will be a net heating of the planet.

Gary commented on the last part of this:

Notice there is no clarifying statement about having to use model simulated graphs to ‘correct’ for surface temperatures and water vapor before seeing that OLR reduction.

And on the first part:

“since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere.” – here the author stated clearly that even taking into account higher emissions from warmer surfaces, the net will still be a reduction.

Half the readers here are shaking their heads.. But for Gary and the other half of the readership, let’s press on.

First of all, if we took any 1000 papers on the “greenhouse” effect (observations, models, theoretical adjustments, impacts on GCMs), I bet you could find at least 700 – 900 of them that at some point make a statement which could be pulled out with no “clarifying statement”, a statement that “casts doubt” on the theory. Perhaps 1000 out of 1000.

Context, context, context as they say in real estate.

Where to begin? Let’s look at “the theory” first. And then come back and examine Ramanathan’s statements.

The Theory

There are a few basics. For newcomers, you can take an extended look at the theory in CO2 – An Insignificant Trace Gas? It’s in seven parts! Actually it’s a compressed treatment.

This in itself is a clue.

In the books I have seen on Atmospheric Physics, many tens of pages are devoted to radiation, including absorption and re-radiation – the “greenhouse” effect – and many tens of pages are devoted to convection. Understanding the basics is critical.

For Gary and his followers, the theory is on a precipice and these papers are giving us that clue. For people who’ve studied the subject, the theory of the “greenhouse” effect is as solid as the theory of angular momentum, or the 2nd law of thermodynamics (the real one, not the imaginary one).

As Ramanathan says in the same paper Gary cites:

It is convenient to separate the greenhouse effect from global warming. The former is based on observations and physical laws such as Planck’s law for black-body emission. The concept of warming that results from the greenhouse effect, is based on deductions from sound physical principles. Numerous feedback processes, determine the magnitude of the warming; these feedbacks are treated with varying degrees of sophistication in GCMs and other climate models. As a result, predictions of the magnitude of the warming are not only model dependent but are subject to large uncertainties..

If I can paraphrase:

Greenhouse effect – dead solid. Global warming – lots of factors, need GCMs, pretty complicated.

Perhaps he has not realized that his words in the same paper combined with experiments demonstrate a flaw in the theory of the dead-solid “greenhouse” effect..

Back to the theory, but first..

A Quick, possibly Annoying, Diversion to the Theory of Gravity

But before we start, I thought it was worth an analogy. Analogies are illustrations, not proof of anything. They can often inflame an argument, but that’s not the intention here. Many people who are still undecided about the amazing theory of the inappropriately-named “greenhouse” effect might welcome a break from thinking about it.

The theory of planetary movements is my analogy. I picture a world where gravity – and its effects on the planets orbiting the sun – is strangely controversial. A concerned citizen, leafing through some fairly recent scientific papers notices that planets don’t really go around the sun in ellipses as the theory claims. In fact, there are some quite odd movements. And so, surprised that the scientists can’t see what’s in plain sight, this citizen draws attention to them.

When some detail-orientated commenters point out that the theory is actually (in part):

$$F = \frac{GMm}{r^2}$$

where F is the force between the 2 bodies, G is the gravitational constant, M and m are the masses of the 2 bodies, and r is the distance between them

And the ellipse idea is just a handy generalization of the results of the laws of gravitational attraction.

And the reason why some recently measured planetary movements don’t follow an ellipse is that a few planets are a bit closer together and there’s a large asteroid flying between them – and when we do the maths it all works out pretty well.

The original concerned citizen then pulls out a few papers where, in the introduction, gravity is explained as the force that produces elliptical movements in the planets, with no disclaimers about $F = GMm/r^2$, and claims that this theory is therefore under question.

Why this annoying analogy? Most “theories” in physics are developed because of some observations which get analyzed to death – and finally someone produces a “comprehensive theory” that satisfies most of the relevant scientific community. The paper with the comprehensive theory usually contains some equations, some observations, some matching of the two – but often in common everyday usage the shorthand version of the theory is used.

“The theory of gravity tells us that planets orbit the sun in an elliptical manner, and ..”

So much more tedious to keep saying $F = GMm/r^2$, and the other formulae..

(I know, I haven’t proved anything, but maybe a few readers can take a moment and see a parallel..)

Back to the Radiative-Convective Theory

First, bodies radiate energy according to Planck’s formula, which looks complicated when written down. The total energy radiated is given more simply by the Stefan-Boltzmann law, which says that total energy is proportional to the 4th power of temperature.

Temperature goes up, energy radiated goes up (proportionally quite a bit more, because of the 4th power) – and the peak wavelength is a little lower. Here’s a graphical look at the Planck formula, which takes away the mathematical pain:

Two curves – 288K and 289K. Now zoomed in a little where most of the energy is:

Total energy in the top curve (289K) is 396 W/m², and in the bottom curve (288K) is 390 W/m².

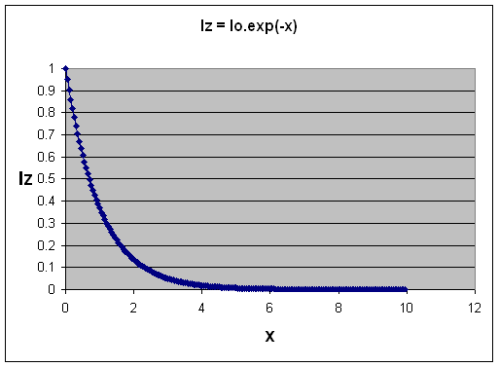
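The totals quoted for the two curves can be checked with the Stefan-Boltzmann law, and the small shift of the peak with Wien’s displacement law. This is a minimal sketch that treats the surface as a perfect blackbody (an assumption; real surfaces have an emissivity slightly below 1). The function names are just illustrative:

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
WIEN_B = 2.897771955e-3  # Wien displacement constant, m K


def blackbody_flux(t_kelvin):
    """Total power emitted per m^2 by a blackbody (Stefan-Boltzmann law), W/m^2."""
    return SIGMA * t_kelvin ** 4


def peak_wavelength_um(t_kelvin):
    """Wavelength of peak emission in micrometres (Wien's displacement law)."""
    return WIEN_B / t_kelvin * 1e6


for t in (288.0, 289.0):
    print(f"{t:.0f} K: {blackbody_flux(t):.1f} W/m^2, peak near {peak_wavelength_um(t):.2f} um")
```

This gives about 390 and 396 W/m²: a 1 K rise (about 0.35% in temperature) adds roughly 1.4% to the emitted energy, and the peak moves from about 10.06 µm to about 10.03 µm.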

Second, trace gases absorb energy according to a formula which, when simplified, is the Beer-Lambert law.

Without going into a lot of maths: as the concentration of a “greenhouse” gas increases, the graph of transmitted radiation falls off more steeply – more energy is absorbed.
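Here is a minimal sketch of the Beer-Lambert law for a single wavelength. It ignores re-emission and scattering, and the absorption coefficient and path lengths are arbitrary, hypothetical units chosen only to show the shape of the curve:

```python
import math


def transmitted_fraction(absorption_coeff, concentration, path_length):
    """Beer-Lambert law: fraction of the beam left after the path, I/I0 = exp(-k*c*L)."""
    return math.exp(-absorption_coeff * concentration * path_length)


K_HYPOTHETICAL = 0.5  # absorption coefficient in arbitrary units (assumption)

for path in (0.5, 1.0, 2.0, 4.0):
    low = transmitted_fraction(K_HYPOTHETICAL, 1.0, path)
    high = transmitted_fraction(K_HYPOTHETICAL, 2.0, path)
    print(f"path {path}: I/I0 = {low:.3f} (c=1) vs {high:.3f} (c=2)")
```

Doubling the concentration squares the transmitted fraction at every path length, which is the “falls off more steeply” in the text.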

Third, re-radiation of this energy takes place according to an energy balance equation that you can see in CO2 – Part Three. The energy balance or radiative transfer equations rely on knowledge of the temperature profile in the lower atmosphere (the troposphere), because the actual temperature there is dominated by convection, not radiation. (Radiation still takes place, and the temperature profile is a major factor in the radiation – but radiation is not the primary determinant of the temperature profile.)

When Gary says:

I know why the authors of the papers were using climate models to simulate the removal of effect from surface temperatures and humidity and that the ‘theory’ says you must do that. But my problem lies in two peer reviewed papers that casts doubt on that theory and that method.

It sounds like Gary believes this “model” is some suspicious extra that tries to deal with problems between the theory and the real world. But it’s the foundation. Anyone who had read an introduction to atmospheric physics would understand that. Someone who had tried hard to understand a few papers without a proper foundation would easily miss it.

- Higher temperatures increase OLR

- More trace gases reduce OLR in certain wavelengths

Which effect dominates in a particular situation?
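The two bullet points pull in opposite directions, and a toy calculation shows why we need numbers to say which wins. This is a minimal sketch, not the real radiative transfer calculation. It assumes a single “gray” absorber (the same absorption at every wavelength), a hypothetical temperature profile (6.5 K/km lapse rate up to 11 km, isothermal above), an absorber amount that falls off with an assumed 8 km scale height, and it applies the layer-by-layer Schwarzschild update directly to flux. The name `toy_olr` and all parameter values are illustrative choices:

```python
import math

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def temperature_at(z_km, surface_temp, lapse_rate=6.5, tropopause_km=11.0):
    """Hypothetical profile: constant lapse rate (K/km) up to the tropopause, isothermal above."""
    return surface_temp - lapse_rate * min(z_km, tropopause_km)


def toy_olr(surface_temp, total_optical_depth, top_km=30.0, n_layers=300, scale_height_km=8.0):
    """Outgoing flux (W/m^2) from a toy gray, non-scattering atmosphere."""
    dz = top_km / n_layers
    flux = SIGMA * surface_temp ** 4  # surface emits as a blackbody
    for k in range(n_layers):
        z_bottom, z_top = k * dz, (k + 1) * dz
        # absorber amount falls off exponentially with height
        dtau = total_optical_depth * (math.exp(-z_bottom / scale_height_km)
                                      - math.exp(-z_top / scale_height_km))
        layer_emission = SIGMA * temperature_at(z_bottom + dz / 2, surface_temp) ** 4
        transmission = math.exp(-dtau)
        # discretized Schwarzschild equation for an isothermal layer:
        # part of the upward beam gets through, and the layer adds its own emission
        flux = flux * transmission + layer_emission * (1.0 - transmission)
    return flux


baseline = toy_olr(288.0, 1.0)
more_gas = toy_olr(288.0, 1.1)         # more absorber, same temperatures: OLR falls
more_gas_warmer = toy_olr(289.0, 1.1)  # more absorber and 1 K warmer: depends on the numbers
for label, value in (("baseline", baseline), ("more gas", more_gas),
                     ("more gas, 1 K warmer", more_gas_warmer)):
    print(f"{label:>22}: {value:.1f} W/m^2")
```

With the temperatures held fixed, extra absorber always lowers the OLR here; with the absorber held fixed, warming always raises it. Combine a change in both and the sign of the result depends on the sizes of the two changes, which is why the answer has to come out of the equations.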

It’s simple conceptually. But if we want exact results – such as which effect dominates in a particular situation – we need a “model”: an equation or set of equations. To quantify the effects we have to solve some tricky equations, which you can see in a paper by.. the Great Ramanathan. Well, Ramanathan & Coakley 1978, a seminal paper on Climate Modeling through Radiative-Convective Models. Here’s an extract from p7:

Lots of maths. The rest of the paper is similar. Let’s move on to Ramanathan’s much later paper and what he said and meant.

Has Ramanathan given up on The Theory?

Let’s review the words Gary pulled out of the paper:

Why does the presence of gases reduce OLR? These gases absorb the longwave radiation emitted by the surface of the earth and re-emit to space at the colder atmospheric temperatures. Since the emission increases with temperature, the absorbed energy is much larger than the emitted energy, leading to a net trapping of longwave photons in the atmosphere.

Gary reads into this “here the author stated clearly that even taking into account higher emissions from warmer surfaces, the net will still be a reduction“. By which Gary thinks Ramanathan is saying something like:

..measurements from a specific location at a later date when CO2 has increased will always lead to a reduction in OLR..

-my paraphrase.

But no, Gary has totally misunderstood what the author is saying. Ramanathan is doing a quick drive-through of the basics and explaining how the greenhouse effect works.

Lower temperatures in the atmosphere mean that the radiation to space from this colder atmosphere is lower than the radiation from the surface. (This is the “net trapping”.) Therefore – the “greenhouse” effect. There is no conclusion here that at all times, in all situations, increases in “greenhouse” gases will lead to a reduction in OLR in these bands. The conclusion is just that “greenhouse” gases mean the surface is warmer than it would be without these gases.

As for the first claim, Ramanathan said:

By deduction.. an increase in a greenhouse gas such as CO2 will lead to a further reduction in OLR. If the solar absorption remains the same, there will be a net heating of the planet.

Gary said:

Notice there is no clarifying statement about having to use model simulated graphs to ‘correct’ for surface temperatures and water vapor before seeing that OLR reduction.

That’s because the basics of the theory are the solid foundation and don’t need to be restated as qualifiers to every statement. Ramanathan helped write the theory! For people who think that this stuff is just some added extra, read his 1978 paper and see all the maths and the explanations. This is the theory.

In the earlier part of his earlier statement he said “Since the emission increases with temperature” but didn’t qualify it with “emission increases in proportion to the 4th power of temperature“.

Is Ramanathan losing confidence in the Stefan-Boltzmann formula? Or Planck? Are these rocks crumbling?

It’s only because Gary has decided that these points are somehow rocky that he would reach these conclusions. (And I could pick tens of other statements in the paper which, without qualifiers, could be taken to be the beginning of the end of a specific theory.)

As a general point – when we look at the hypothetical global annual average after “new equilibrium” from increased CO2 is reached – if this new equilibrium exists – the theory (1st law of thermodynamics) predicts that the “new” OLR will match the “old” OLR (global annual average). And in that case the OLR in “greenhouse” gas bands will be reduced a little, while the OLR outside of those bands will be increased a little.

But when we look at one local situation we need the theory, also known as “the model”, also known as equations, to tell us what exactly the result will be.

Ramanathan hasn’t cast any doubt on it. He believes it. His papers from the 1970s to today work it all out. He just doesn’t write qualifiers to each statement as if every line will be read by people who don’t understand the theory..

Gary’s Maths

In Gary’s comment he reproduced his back-of-envelope calculations of how much surface temperature should have changed over this 27-year period.

He takes the charitable approach of considering a net reduction in OLR from one of the three papers he originally reviewed – if I understood this step correctly. The figure he takes to work with is a 1K reduction. He then tries to work out how much effect this 1K reduction in OLR in the CO2 band would have on surface temperatures.

Like all good science, this starts on napkins and the backs of envelopes, because everyone studying a problem first has to attempt to quantify it using available data and available formulae. Then, when the first results are worked out and it seems like something new has been discovered – or something old overturned – the scientist, patent clerk or writer has to turn to more serious methods.

In Gary’s preliminary results he shows that a reduction in OLR due to CO2 might have contributed something like 0.3°C to surface temperature change over 30 years or so, where the GISS temperature increase for the period is something like 0.7°C. The essence of the calculation was comparing the Planck function (see the first and second graphs in this post) at two temperatures 1K apart, then considering how much is in the CO2 band and so calculating the approximate change in W/m². Then, by applying a “climate sensitivity” number from realclimate.org, he converted that into a temperature change.
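To make the structure of this back-of-envelope calculation concrete, here is a minimal sketch of it. The band edges (13-17 µm) and the sensitivity value are placeholders picked for illustration, not Gary’s actual numbers or the realclimate.org figure. As the following paragraphs explain, the problem is the last step, turning a change in OLR into a temperature change:

```python
import math

H = 6.62607015e-34    # Planck constant, J s
C = 2.99792458e8      # speed of light, m/s
K_B = 1.380649e-23    # Boltzmann constant, J/K


def planck_radiance(wavelength_m, t_kelvin):
    """Planck spectral radiance B_lambda(T) in W m^-2 sr^-1 per metre of wavelength."""
    prefactor = 2.0 * H * C ** 2 / wavelength_m ** 5
    return prefactor / math.expm1(H * C / (wavelength_m * K_B * t_kelvin))


def band_flux(t_kelvin, lo_um, hi_um, steps=2000):
    """Blackbody flux (pi x radiance) between lo_um and hi_um micrometres, W/m^2 (midpoint rule)."""
    lo, hi = lo_um * 1e-6, hi_um * 1e-6
    step = (hi - lo) / steps
    total = sum(planck_radiance(lo + (i + 0.5) * step, t_kelvin) for i in range(steps))
    return math.pi * total * step


# Placeholder inputs, for illustration only
BAND_UM = (13.0, 17.0)          # rough extent of the CO2 15 um band (assumption)
SENSITIVITY_K_PER_WM2 = 0.75    # placeholder sensitivity, not the realclimate.org value

delta_flux = band_flux(289.0, *BAND_UM) - band_flux(288.0, *BAND_UM)
delta_t = SENSITIVITY_K_PER_WM2 * delta_flux  # the step the post argues is misapplied
print(f"band flux change: {delta_flux:.2f} W/m^2 -> 'temperature change' {delta_t:.2f} K")
```

Integrating the same function over all wavelengths recovers the Stefan-Boltzmann total, which is a handy check that the band integral is set up correctly.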

Radiative physics has the potential to confuse everyone. Perhaps if we consider the “equilibrium case”, one problem with Gary’s calculation above will become clearer.

What’s the equilibrium case? In fact, it’s one generalized result of the real theory. And because it’s easier to understand than $dI = -I\,n\sigma\,dz + B\,n\sigma\,dz = (I - B)\,d\chi$ (with $d\chi = -n\sigma\,dz$), people start to think the generalized result under equilibrium is the theory..

Under equilibrium, energy out = energy in. This is for the whole climate system. So we calculate the incoming solar radiation that is absorbed, averaged across the complete surface area of the earth: 239 W/m².
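The 239 W/m² figure comes from spreading the absorbed sunlight over the whole sphere. This sketch assumes a solar constant of 1366 W/m² and a planetary albedo of 0.30, both commonly quoted round values rather than numbers taken from this post:

```python
SOLAR_CONSTANT = 1366.0  # W/m^2 at the top of the atmosphere (assumed round value)
ALBEDO = 0.30            # fraction of sunlight reflected (assumed round value)


def absorbed_solar(s0=SOLAR_CONSTANT, albedo=ALBEDO):
    """Absorbed sunlight averaged over the whole sphere, W/m^2.

    The Earth intercepts sunlight over a disc (pi r^2) but has a surface
    area of 4 pi r^2, hence the factor of 4.
    """
    return s0 * (1.0 - albedo) / 4.0


print(f"absorbed solar: {absorbed_solar():.1f} W/m^2")
```

This comes out at about 239 W/m².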

So if the planet is not heating up or cooling down, energy out (OLR) must also = 239 W/m².

So we consider the golden age of equilibrium in 1800 or thereabouts. We’ll assume the above numbers are true for that case. Then lots of CO2 (and other stuff) was added. Let’s suppose CO2 has reached the current level of 380 ppm – and stays constant from now on. The downward radiative forcing, as calculated at “top of atmosphere”, from the increase in CO2 is 1.7 W/m². And nothing else happens in the climate (“all other things being equal”, as we say).
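For a number like 1.7 W/m², a widely used shortcut is the simplified logarithmic fit for CO2 forcing, ΔF = 5.35 ln(C/C0) W/m² (Myhre et al., 1998). This is a swapped-in approximation, not the detailed radiative transfer calculation behind the figure quoted here, and the pre-industrial baseline concentration is an assumption:

```python
import math


def co2_forcing(c_ppm, c0_ppm=280.0):
    """Simplified CO2 forcing fit, Delta F = 5.35 * ln(C / C0) W/m^2 (Myhre et al., 1998)."""
    return 5.35 * math.log(c_ppm / c0_ppm)


for baseline in (280.0, 278.0):  # assumed pre-industrial concentrations, ppm
    print(f"380 ppm vs {baseline:.0f} ppm: {co2_forcing(380.0, baseline):.2f} W/m^2")
```

With a baseline of 280 ppm this gives about 1.63 W/m², and with 278 ppm about 1.67 W/m², close to the 1.7 W/m² used in this example.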

Eventually we will reach the new equilibrium. It doesn’t matter how long it takes, but let’s pretend it’s 2020. Before equilibrium the planet will be heating up, which means OLR < 239 W/m² (because more energy must come into the planet than leaves it for it to warm up). At equilibrium, in 2020, OLR = 239 W/m² – once again. (Of course, at wavelengths around 15μm the energy will be lower than in 1800, and at other wavelengths the energy will be higher.)
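The heating-then-equilibrium story can be sketched with a one-box energy balance model. Everything here is an assumption for illustration: a single heat reservoir with a heat capacity of 8 W·yr/m² per K (very roughly a 60 m ocean mixed layer), a placeholder feedback parameter of 1.25 W/m² per K, and the CO2 forcing switched on all at once. OLR drops by the forcing at the start and climbs back to 239 W/m² as the surface warms:

```python
def simulate_olr(forcing=1.7, feedback=1.25, heat_capacity=8.0,
                 absorbed=239.0, years=200.0, dt=0.01):
    """Hypothetical one-box energy balance model, stepped forward with Euler's method.

    warming (K) obeys  heat_capacity * d(warming)/dt = absorbed - OLR,
    with OLR = absorbed - forcing + feedback * warming (W/m^2).
    heat_capacity is in W yr m^-2 K^-1 and feedback in W m^-2 K^-1; both are placeholders.
    """
    warming = 0.0
    olr_series = []
    for _ in range(int(round(years / dt))):
        olr = absorbed - forcing + feedback * warming  # forcing cuts OLR, warming restores it
        olr_series.append(olr)
        warming += dt * (absorbed - olr) / heat_capacity
    return warming, olr_series


final_warming, olr = simulate_olr()
print(f"OLR at the start: {olr[0]:.2f} W/m^2")
print(f"OLR after 200 years: {olr[-1]:.2f} W/m^2, warming {final_warming:.2f} K")
```

At the end of the run OLR is back at 239 W/m², yet the warming is forcing/feedback = 1.7/1.25 ≈ 1.36 K, not zero.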

At this new equilibrium point we still have a “radiative forcing” of 1.7 W/m², which is why the surface temperature is higher, but there is no change in OLR between the measurements in 1800 and 2020.

We’ll assume, like Gary, the realclimate.org climate sensitivity – how do we calculate the new equilibrium temperature?

The change in OLR is 0.0 W/m². Therefore, change in temperature = 0°C. Ha, we’ve proved realclimate wrong. Climate sensitivity cannot exist.

Or, it was wrongly applied.

As trace gases like CO2 increase in concentration they absorb energy. The atmosphere warms up and re-radiates the energy in all directions. Simplifying, we say it re-radiates both up and down. The extra downward radiation is what is used to work out changes in surface temperature, not the immediate or eventual change in OLR.

The extra downward part is usually called “radiative forcing” and comes with a number of definitions you can see in Part Seven of the CO2 series (along with my mistaken attempt to do a “back of envelope” calculation of surface temperature changes without feedback).

How do we work out the change in surface temperature?

In Gary’s case, what he will need to know is the change in downward longwave radiation. Not upwards.

Conclusion

The theory of radiative transfer in the atmosphere, at its heart, is a relatively simple one – but in application is a very challenging one computationally speaking. Therefore, it’s hard to grasp it in its details intuitively.

The theory isn’t the generalized idea of what will happen when moving from one equilibrium state to another equilibrium state with more “greenhouse” gases. That is a consequence of the theory under specific (and idealized) circumstances.

But because everyone likes shorthand, to many people this has become “the theory”. So when someone applies the maths to a specific situation it is “suspicious”. Maths becomes equated with “models”, which to many people means “GCMs”, which means “make-believe”. I think that if Gary had read Climate Modeling through Radiative-Convective Models by the Great Ramanathan, he might have a different opinion about the later paper and whether Ramanathan is “bailing out” on the extremely solid theory of “greenhouse” gases.

Anyone who has read a book on atmospheric physics would know that Gary’s claim is – no nice way to say it – “unsupported”. Yes – cruel, harsh, wounding words – but it had to be said.

However, most of the readers here and most on American Thinker haven’t read an undergraduate book on the topic. So it makes it worth trying to explain in some detail.

It’s also great to see people trying to validate or falsify theories with a little maths. Working out some numbers is an essential step in proving or disproving our own theories and those of others. In the example Gary provided he didn’t apply the correct calculation. (The subsequent pages of Ramanathan’s 1998 paper also run through these basics).

For those convinced that the idea that more CO2 will warm the surface of the planet is some crazy theory – these words are in vain.

But for the many more people who want to understand climate science, hopefully this article provides some perspective. The claims in American Thinker might at first sight seem to be a major problem to an important theory. But they aren’t.

Note – I haven’t yet opened the Philipona paper, but will do so in coming weeks and probably add another article about it. I didn’t want to leave Gary’s comments unanswered, and this post is already long enough..

Note on a Few Technical Matters

A few clarifications are in order, for people who like to get their teeth into the technical details.

Strictly speaking, at the very top of atmosphere there is no downward longwave forcing at all. That’s because there’s no atmosphere to radiate.

The IPCC definition of “radiative forcing” at “top of atmosphere” is a handy comparison tool of extra downward radiation before feedbacks and before equilibrium of the surface or troposphere is reached. In reality the increase in downward radiation doesn’t occur in just one location, extra downward longwave radiation occurs all throughout the troposphere and stratosphere.

In the example above, from 2010 to 2020, as surface and troposphere temperatures increased, radiative forcing would also increase slightly, so it wouldn’t necessarily be constant at 1.7 W/m².

Climate sensitivity is calculated by GCMs to work out the resulting long term (“equilibrium”) temperature change, with climate feedbacks, from increased radiative forcing. No warranty express or implied as to their accuracy or usefulness, just explaining how to apply the climate sensitivity value correctly.

It seems to me that much Climate Science is weakened by zealotry, by the low intellectual standards of some of the participants, and by — moderators snip —. A useful shortcut to assessing the probity of the alleged physics is therefore to ask “Does anyone else use all this stuff – e.g. about CO2 and H2O absorbing and emitting radiation; anyone without the perverse incentives of the Climate Scientologists?”

Yes they do – for instance it’s routine stuff in the calculations on furnace performance that combustion engineers undertake.

“Climate sensitivity is calculated by GCMs to work out the resulting long term (“equilibrium”) temperature change, with climate feedbacks, from increased radiative forcing. No warranty express or implied as to their accuracy or usefulness, just explaining how to apply the climate sensitivity value correctly.”

It’s the models where my ‘faith’ is less than certain. Just too many assumptions.

Looked at a paper the other day which analyzed the Grump gridded/urban data set.

(PDF: schneiderERL2009.pdf)

One of the most used data sets to determine Urban/Rural is 73% accurate. A coin toss would be 50% accurate.

Grump estimates global urban land usage as 2.74% of the total land; MODIS estimates it at 0.5%.

dearieme:

Sorry, had to snip a little. Check out the Etiquette

We are talking about a field which has been studied by many thousands of people, and much of the foundational work was done in the ’60s and ’70s. In the field of absorption and re-emission we are talking about a century of work.

And the approach on this blog is to try and weigh up the evidence. Just because something is in a paper doesn’t mean it’s true. Just because someone’s theory isn’t in a paper doesn’t mean it’s not true. We’ll try and stay away from motives and “stuff people might have done”..

I didn’t follow this.

If you know the imbalance in OLR over time (instantaneous – equilibrium) you should be able to use this to compute the total delta E (thermal energy) for the Earth, which is trivially related to delta T.

What have I missed?

Also for my own edification: after you’ve given the Earth a shot of greenhouse gases in 1800 (destroying the golden age of the French Republic, I suppose), and after it has warmed in response and reached a new thermal equilibrium, is it not the case that you will see a broadening of the absorption lines for the greenhouse gases (hence a net drop in OLR for these bands), which in turn implies an increase (“brightening”) in the pass-bands? This is probably contained in what you’ve written, just checking my comprehension.

Thanks for the nice post.

Carrick:

The last question first:

You are more or less correct in your summing up.

If the “atmospheric window” of 8-14 µm was truly transparent, for example, then with a higher surface temperature, and therefore higher radiation, clearly we would see a higher radiated energy in 8-14 µm at TOA.

And with more absorption in other bands, they will be radiating less. But it’s not really a “broadening in absorption lines”. It could be that more absorption will take place in the “wings” of a heavily saturated band, like the 15 µm band of CO2 – if that’s what you mean by broadening. It could also be more absorption in the same saturated area of the band, meaning that radiation now comes from higher up in the atmosphere (and therefore from colder air) – see Earth’s Energy Budget – Part Three.

Line broadening takes place mainly because of pressure. So lower in the atmosphere the lines are broader, and higher up they are narrower.
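The pressure dependence can be sketched with the usual Lorentz half-width scaling (the form used in line databases such as HITRAN): width proportional to pressure, with a weaker temperature factor. The reference half-width of 0.07 cm⁻¹ at 1 atm, the temperature exponent of 0.75, the 8 km scale height and the temperature profile below are typical-looking assumptions for illustration, not values for any particular CO2 line:

```python
import math


def lorentz_half_width(pressure_hpa, temperature_k, gamma_ref=0.07, n_exp=0.75,
                       p_ref_hpa=1013.25, t_ref_k=296.0):
    """Pressure-broadened (Lorentz) half-width in cm^-1.

    gamma = gamma_ref * (p / p_ref) * (t_ref / T) ** n_exp
    gamma_ref and n_exp are assumed, typical-looking values.
    """
    return gamma_ref * (pressure_hpa / p_ref_hpa) * (t_ref_k / temperature_k) ** n_exp


def pressure_at(z_km, surface_hpa=1013.25, scale_height_km=8.0):
    """Approximate pressure from an exponential profile with an assumed scale height."""
    return surface_hpa * math.exp(-z_km / scale_height_km)


def temperature_at(z_km, surface_k=288.0, lapse_rate=6.5, tropopause_km=11.0):
    """Hypothetical profile: constant lapse rate (K/km), isothermal above the tropopause."""
    return surface_k - lapse_rate * min(z_km, tropopause_km)


for z in (0, 5, 10, 20):
    width = lorentz_half_width(pressure_at(z), temperature_at(z))
    print(f"{z:>2} km: half-width ~ {width:.4f} cm^-1")
```

The falling temperature widens the lines slightly, but the falling pressure wins by a large margin, so the lines get steadily narrower with height.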

And your first question:

A very interesting point and one to think about.

There’s nothing wrong in principle with this approach. I think about it as an approach that is much more indirect than the usual way of radiative forcing.

Why indirect? Well, you have no knowledge of where the energy is, and what direct effect it is having on the surface temperature or the atmosphere. You also don’t know how long it will take to get to equilibrium – because you have less understanding where the energy is going so you don’t know how much will be radiated out.

These issues also affect GCMs, of course. (But they attempt to calculate surface temperature changes, ocean temperatures and so on, so that the radiative ins and outs can be quantified).

So if you know the “downward radiative forcing” at the top of atmosphere, at the surface – and, in fact, at all levels through the atmosphere – you have more opportunity to investigate the immediate results.

Your articles always are a bit of a reach for my experience, but never so far off to breed despair. By the end of it, I’m always glad I took the trip.

Dearieme-

Particularly apt, I think, are refrigeration system designers.

Chemical engineers do the same but with heavier math and more variables.

“There’s nothing wrong in principle with this approach. I think about it as an approach that is much more indirect than the usual way of radiative forcing.”

If it’s the entire radiation spectrum (blackbody) that is meant by OLR, then if measuring the energy budget to determine the balance in a simple, direct manner subject to known and few kinds of errors (comparatively), how could it possibly be done any other way?

If any other way is used, doesn’t it still require the aforementioned empirical data to verify it?

Apart from energy budget, isn’t any further modelling all meteorology?

Dave McK:

The problem is that using a total energy balance model doesn’t solve the question of outgoing radiation vs time.

Let’s say we have a nice simple model and CO2 suddenly increases, so less radiation leaves the planet.

Before equilibrium, how does the outgoing longwave radiation (OLR) change with time?

And how long before equilibrium?

We don’t know, we can’t tell. So the solution eludes us. If it takes 30 years instead of 3 years (for example) then we get 10x as much energy and the planet warms up much much more..
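The timing problem, and the energy-integration approach Carrick suggested, can both be seen in a short sketch. Assume, purely for illustration, that the imbalance starts at 1.7 W/m² and decays exponentially with some timescale τ; integrating it over time and over the Earth’s surface (taken as 5.1 × 10¹⁴ m²) gives the energy gained. The exponential shape is itself an assumption, and it is exactly the thing we don’t know:

```python
import math

SECONDS_PER_YEAR = 3.156e7
EARTH_AREA_M2 = 5.1e14  # surface area of the Earth


def accumulated_energy_joules(initial_imbalance, timescale_years, years=500.0, dt=0.01):
    """Integrate an assumed imbalance N(t) = N0 * exp(-t / tau) (W/m^2) over time and area."""
    steps = int(round(years / dt))
    w_yr_per_m2 = sum(initial_imbalance * math.exp(-(i + 0.5) * dt / timescale_years) * dt
                      for i in range(steps))
    return w_yr_per_m2 * SECONDS_PER_YEAR * EARTH_AREA_M2


fast = accumulated_energy_joules(1.7, 3.0)
slow = accumulated_energy_joules(1.7, 30.0)
print(f"tau = 3 yr: {fast:.2e} J; tau = 30 yr: {slow:.2e} J; ratio {slow / fast:.1f}")
```

Ten times the timescale gives ten times the energy, because the integral of N0·exp(−t/τ) is N0·τ. Without knowing how the imbalance evolves, the total can’t be pinned down.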

Calculating instead the radiative forcing through the atmosphere we can at least use a GCM (with all their flaws) to work out temperature changes and therefore – the immediate, and subsequent changes, in OLR.

Even though, as we have seen in Models, On – and Off – the Catwalk, GCMs have their flaws, we can at least attempt to model the surface temperature change and latent energy changes (via evaporation, cloud formation and rainfall) to calculate the changing energy balance over time.

I particularly like those two black body curve snapshots. I’ve been trying to explain that kind of thing without math, and that was an elegant alternative.

That is what I mean, that the effective absorption band widens, not broadening in the sense of e.g., thermal broadening.

Regarding the other, I thought about it a bit further, and I decided the biggest issue is you need to also monitor the amount of radiation reaching the surface, since changing climate will influence net albedo for example, as well as of course mundane things like changes in solar forcing from solar activity and orbital mechanics. It is a messy way to get to the answer, though one that doesn’t depend on temperature.

If you go to:

http://www.ssmi.com/msu/msu_data_description.html#msu_amsu_time_series

and look at TLT and TLS for the period 1994 to the present, you will note two things. First, TLT, which is the lower troposphere, had the largest temperature variation and temperature rise in that period. In fact, the level at 1994 is known from other data (radiosondes and ground and sea surface temperatures) to be only a small amount larger than the 1940 level, so most of the large temperature rise used to support the unusual amount of global warming is in the period after 1994 (at least to 2003).

Second, the average level of TLS was nearly constant from 1994 to the present. TLS is the upper troposphere. Since the temperature was increasing in TLT, it should have been decreasing in TLS (see the decreasing fairing line shown on TLS). It is obvious that two volcanoes caused the jump up followed by a level shift down about 1 to 2 years later for TLS up to 1994, and this level shift is not related to the TLT temperature variation.

Your analysis may be valid with the simplifying assumptions of no negative feedback to CO2, but it appears to me there has to be a strong negative feedback, or errors in the maths due to leaving out other critical terms (the errors due to trying to get an average of a global value when it doesn’t mean what is assumed?), to get this result. The end result seems to be that the upper troposphere has not cooled during the times while the lower troposphere was heating. It is a long enough time period that short-term imbalance can’t be used as a reason.

I want to state that I understand why there is a so called greenhouse effect, I am not disagreeing with your analysis. I agree that water vapor, CO2 and some other gases cause it as you state. The issue is the sensitivity to increasing CO2 in the presence of possible negative feedback from water vapor (probably from increasing lower clouds). While increasing CO2 IN THE ABSENCE OF OTHER EFFECTS will do as you state, it is questionable that there are no other effects, and it appears to me that there is likely a negative feedback. There has been a warming trend for the last 150 years, but that seems to be coming out of a particularly low temperature period, so I see no need to look for a scapegoat.

Leonard Weinstein:

Of course, there are other effects.

The handy thing about the radiative transfer equations is we can do a calculation of the conditions now or at some specific time, and have a lot of confidence in the results. That is, the measured OLR matches the calculated OLR.

And the measured downward longwave radiation at the surface matches the calculated values, as in CO2 – Part Six, Visualization

What these calculations can’t tell us is the consequent change in the rest of the climate.

But the RTE are an important first step, and because they provide good results we can have confidence in using them. It’s important of course to understand how and where they can be used. Or what they do and don’t do for us.

For your other points – all good ones which need a full discussion.

“Before equilibrium, how does the outgoing longwave radiation (OLR) change with time?”

Yes, I understand that this is directly observable.

I actually think I can make the equipment myself on the kitchen table, it is so simple in concept.

Ultimately, how else can you validate the hypotheses without doing this?

Dave McK:

It’s directly observable (see footnote). But that wasn’t the point.

The question was about the usefulness of “radiative forcing” = the extra downward longwave radiation – vs the usefulness of the OLR mismatch.

And usefulness as in “how to calculate the effect on the surface temperature, energy absorbed by oceans, etc”.

Footnote – measurements of incoming and outgoing radiation are not highly accurate to an absolute standard. Especially when thinking about reflected solar for all angles, and OLR for all wavelengths.

Possibly more on this in Earth’s Energy Budget – Part Four, as I promised would be in Part Three.

All righty. Understood.


What a great site. Congratulations. I really like your style, your reasoning and your presentational skills.

On this particular issue, while I agree with your intellectual demolition of Gary’s reasoning, (sorry, Gary), and also agree in general with your conclusions that, like share prices, OLR can go up or down, I actually believe that, if the IPCC attribution argument were valid, we should have seen OLR on a decreasing trend from the late 70s and not an increasing trend as suggested by the available satellite data. I don’t have a problem with the RTE in all this, but I do see a major problem with IPCC’s attribution studies.

Consider an idealised Earth model where TSI is an absolute constant and there are no awkward amplification factors on TSI. Assume that at some time t0, this system is in radiative equilibrium. Now add an injection pulse of CO2. Theory (and the models in the AMIP suite) says that the OLR perturbation should reach a maximum (negative) impact after some finite time period (as the CO2 diffuses and causes feedback effects), and thereafter should decrease in magnitude (in response to the induced increase in brightness temperature) to zero after some equilibration time, te, when once again radiative equilibrium is achieved. (I am not going to try to produce a solution for surface temperature under this model, since, as you point out, it is complicated by the need to solve for net downward radiation; however, we can assert that over the same time period, temperature should be increasing until it asymptotes to its new stable level at time te, ignoring seasonal variation.)

By the first law, the area under this single-pulse OLR curve must equal the total energy added to the system, since we have deemed TSI to be constant using our magical assumptive powers and no other energy is gained or lost by the system. Call this energy value “Ea”. The final equilibrium temperature change from the CO2 pulse can be related to Ea through the heat capacity of the planet, while the time variation of brightness temperature is dictated by Stefan-Boltzmann. If the heat capacity is roughly constant, then there should be a linear relationship between the temperature change at equilibrium and the energy, Ea, from this single pulse.

I hope that this simple model is clear so far and not yet controversial.

Now, for convenience, let us rescale the single pulse OLR perturbation curve by dividing throughout by the area subtended by the curve, Ea. The area subtended by the new curve (let’s call it “dimensionless OLR”) becomes equal to 1. This dimensionless OLR curve, since it is all on one side of the zero line (positive or negative depending on what convention one wishes to adopt) has the mathematical properties of a probability density function on [0,te), where te is the equilibration time. So let’s call this curve f(t) and let’s call the integral form F(t). F(t) is the integral of f from 0 to t – analogous to the cumulative distribution function of f.

We now use superposition to model the total OLR response of the planet (to any given CO2 profile) as an aggregate of stacked annual pulses. (For mathematical purists, there is an issue about the validity of using superposition when, as in this case, we cannot confirm that the underlying homogeneous equations are linear, but in this instance we can show that the linear combination of solutions completely satisfies the initial conditions and asymptotic boundary conditions. But I digress.)

Let us first consider the case where the atmospheric CO2 profile increases geometrically year-on-year, because it offers extraordinary insight. Because of the log relationship between CO2 and temperature rise, the OLR response curve initiated each year is the same for each year. When we stack the solutions year-on-year, we find that the total dimensionless OLR response of the planet for this case, rather remarkably, is equal to:-

F(t) when t is less than or equal to te; and

1.0 when t is greater than te.
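The stacking result can be checked numerically. The sketch below is an illustration only, not Paul_K’s own calculation: it assumes a hypothetical single-pulse response shape t·exp(−t/10) truncated at te = 80 years, annual time steps, and OLR magnitudes taken as positive. Each pulse is rescaled to unit area (the dimensionless f(t)), one identical pulse is launched every year (the geometric-growth case), and the superposed total is compared with the running integral F(t).

```python
import numpy as np

te = 80          # equilibration time in years (the value used in the comment)
n_years = 120    # length of the simulated record

# Hypothetical single-pulse OLR perturbation magnitude; any non-negative shape would do.
t = np.arange(te)
raw = t * np.exp(-t / 10.0)

# Rescale by the area Ea (dt = 1 year) so the curve integrates to 1: this is f(t).
f = raw / raw.sum()
F = np.cumsum(f)  # discrete analogue of the cumulative F(t)

# Geometric CO2 growth plus a log law: every annual pulse has the same response f.
total = np.zeros(n_years)
for k in range(n_years):          # pulse launched in year k
    m = min(te, n_years - k)      # portion of its response that fits in the record
    total[k:k + m] += f[:m]       # it contributes f(t - k) at year t
```

Swapping `raw` for any other non-negative shape leaves the pattern intact: `total` tracks F(t) up to te and stays at 1.0 afterwards, which is the shape-independence the comment emphasises.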

In order to obtain the actual OLR response from the dimensionless form, recall that it is necessary to rescale the above result by Ea, where Ea corresponds to the total energy related to the first year’s CO2 pulse. Note also one very important conclusion: since F(t) has the attributes of a cumulative distribution function, the OLR response to this cumulative perturbation is monotonically increasing in magnitude (actually in a negative direction) for t less than te, irrespective of the shape and modality of the response function f(t) chosen or calculated!!!

It says that, starting from a position of radiative balance, if CO2 increases by a fixed % per year, then we expect measured OLR to decrease monotonically until time te, and then to become constant. After the equilibration period, we are adding the same energy commitment (or equilibrium temperature commitment) year-on-year, which makes intuitive sense given the assumption in this case of geometric growth in atmospheric CO2 and the logarithmic relationship between CO2 concentration and temperature. Only when the growth in CO2 stops or slows does measured OLR start to show an increase.

One can apply this solution methodology quite readily to a model of linear growth in CO2, or by inputting actual Mauna Loa data, by the simple expedient of applying separate rescalings to each successive year of CO2 pulse to account for the reduced energy commitment relative to the first year.
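A sketch of that per-year rescaling, again using the hypothetical pulse shape t·exp(−t/10) with te = 80 years, and made-up CO2 paths (330 ppm growing either 0.5 % per year or 1.5 ppm per year; illustrative numbers, not Mauna Loa data). Assuming the logarithmic law, the pulse launched in year k carries weight ln(C_{k+1}/C_k) / ln(C_1/C_0) relative to the first year, and the total response is the weighted superposition, i.e. a discrete convolution of those weights with f.

```python
import numpy as np

te, n_years = 80, 120
t = np.arange(te)
raw = t * np.exp(-t / 10.0)          # hypothetical single-pulse response shape
f = raw / raw.sum()                  # dimensionless OLR, unit area (dt = 1 year)

def pulse_weights(co2):
    """Energy of each annual pulse relative to the first, assuming a log CO2 law."""
    steps = np.log(co2[1:] / co2[:-1])   # ln(C_{k+1} / C_k) for each year k
    return steps / steps[0]

def total_response(weights, f, n_years):
    """Superpose weighted annual pulses: sum over k of w_k * f(t - k)."""
    return np.convolve(weights, f)[:n_years]

years = np.arange(n_years + 1)
co2_geo = 330.0 * 1.005 ** years     # hypothetical 0.5 %/yr geometric growth
co2_lin = 330.0 + 1.5 * years        # hypothetical 1.5 ppm/yr linear growth
# Actual annual-mean concentrations could be substituted for either array.

w_geo = pulse_weights(co2_geo)       # all 1.0: reproduces the geometric-growth case
w_lin = pulse_weights(co2_lin)       # shrinking weights for later years
resp_geo = total_response(w_geo, f, n_years)
resp_lin = total_response(w_lin, f, n_years)
```

Over a 25-year window the linear-growth response sits slightly below the geometric one but, with this pulse shape, still grows in magnitude every year.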

Let us now consider the critical period from the mid-70s to the turn of the century, when the IPCC attributes planetary heating largely to CO2.

IPCC AR4 reports the results of “constant composition commitment” numerical experiments run on GCMs that suggest an equilibration time for radiative balance of more than 80 years.

Using a value of te = 80 years, it makes no difference to the conclusions whether one fits a geometric growth model to CO2, or a linear model or one uses the actual data. The results are all very similar since the time period of interest (25 years or so) is much less than te. For ANY single year OLR response function f(t), all three assumptions lead to a very similar OLR perturbation suggesting conclusively that the CO2 should lead to a strong MONOTONIC DECREASE in OLR over this period.

Ah, but, but, but, but (you may say) this solution methodology only considers the cumulative PERTURBATION in OLR from a position of radiative equilibrium. There may be a previous “commitment” which would cause OLR to increase over this period and which more than offsets the effect of the more recent CO2 addition. And this would explain why OLR has, according to data from ERBE, IRIS IMG and TES, apparently been increasing over this critical period instead of doing the decent thing in support of the IPCC view on attribution.

In the real world, I would have to agree that this is a distinct possibility. But in terms of the validity of the IPCC position, it looks very dubious. Most of the GCMs (by blog-repute at least since I have seen actual data for only a few) manage to bring the planet back into radiative equilibrium by the 70s (accidentally in all probability) by the expedient of using aerosols as an almost mirror-like equal and opposite forcing to CO2. This allows a history match of the decreasing surface temperature from the 40s through to the 70s. It also means, however, that the effect of pre-70s CO2 in the GCMs has already been neutralised. In which case, the above analysis from a position of radiative equilibrium is a valid approximation and, if CO2 were the primary cause of heating in the late 20th century, we should have seen a decrease in OLR. On the other hand, a withdrawal of atmospheric aerosols (in the models or in reality) would cause an increase in SW at the surface and an observed increase in OLR.

In conclusion, and with apologies for the length of this post, I agree with your criticism of Gary’s analysis, but I still believe that the observed increase in OLR is, indeed, a smoking gun for the IPCC’s attribution studies.

I’m wondering whether this actual experiment (as opposed to thought experiment) could have some bearing on the situation:

1. Fill two quart jars with equally hot tap water, as hot as possible. These two jars can be called “Jar A” and “Jar B”.

2. Place both jars on a table, three feet apart.

3. Around Jar A, place five jars filled with hot water, just below the temperature of Jar A. These jars should be placed a slight distance from Jar A, so that they almost, but not quite, touch it, arranged like the petals of a flower.

4. Around Jar B, place five jars filled with ice (or five empty jars), arranged in the same manner as step 3.

5. After 30 minutes have elapsed, compare the temperatures of Jar A and Jar B.

I welcome suggestions for improving the design of the experiment.
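A rough radiative estimate of what the jar experiment probes, under hypothetical assumptions: each jar is treated as a small grey body (emissivity 0.95) fully surrounded by neighbours at one uniform temperature, and conduction, convection and view factors are ignored (the real petal arrangement covers only part of each jar’s view). Both centre jars emit the same amount; what differs is how much they absorb from their surroundings, so Jar A’s net loss is much smaller. In this standard treatment each jar also absorbs radiation from surroundings cooler than itself.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def radiative_exchange(t_jar, t_surround, emissivity=0.95):
    """Emitted, absorbed and net radiative flux (W/m^2) for a small grey body
    inside a large enclosure at a uniform temperature (idealised assumption)."""
    emitted = emissivity * SIGMA * t_jar**4
    absorbed = emissivity * SIGMA * t_surround**4  # radiation from the surroundings
    return emitted, absorbed, emitted - absorbed

# Hypothetical temperatures in kelvin: hot tap water ~330 K, warm neighbours
# ~325 K around Jar A, ice ~273 K around Jar B.
_, absorbed_a, net_a = radiative_exchange(330.0, 325.0)
_, absorbed_b, net_b = radiative_exchange(330.0, 273.0)
```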

Your assumption about the emissivity of a star is the mistake you have made. Look at the Sun (careful!). Does it look like a black body?

Temperature is, as you know, a measure of the average kinetic energy of the molecules of the object, be it solid, liquid, gaseous, plasma, degenerate, or neutron star matter. Photons emitted by molecules at a temperature cannot be absorbed by molecules at a higher temperature. They just can’t. They are not absorbed. The heat is not transferred.

“You can’t win, you can’t break even, and you can’t drop out of the game.” Get used to it. If there is one law we have tried to break, it is this one, no perpetual motion…

Micheal Moon wrote:

“Photons emitted by molecules at a temperature cannot be absorbed by molecules at a higher temperature.” This is demonstrably false, but let’s assume you are correct.

If a photon leaves the earth and arrives at the sun, what happens to it if it isn’t absorbed? Does it go right through? Is it reflected? Is there a fourth option? It has to go somewhere, so where are you proposing that it goes?

Just curious.

As a hard-core skeptic, I find this type of argument very frustrating. There are a number of demonstrably false statements in your argument (such as the implication that a perfect black body radiator must look black, which shows a lack of understanding of the definition of a black body radiator). This in turn allows acolytes to point to you and say “see, these guys don’t understand the basic premises of the science.” And real scientists who don’t study climate look at your statements, have to agree, and write off skeptics in general. This type of thing happens on both sides of the climate change argument, with Al Gore perhaps coming to mind.

Everything I’ve read on this site to date is based on known, accepted science (all of which relates to many fields, in addition to climate). Some of the work is (in my opinion) open to debate. I continue to research my hypothesis, now with a better understanding thanks to this site. Most of the science of radiant heat transfer is well established and well accepted. Engineers build things that work using this science every day. The real debate about radiant heat transfer effects in the atmosphere revolves around very small changes. The degree of A in AGW has not been fully established, but there is (or should be) no debate about the how. Only the how much.

JE

Science of Doom:

I’ve seen this statement from Ramanathan before:

by the same reasoning decrease of temperature with altitude also contributes to the trapping since radiation emitted by the warmer lower layers are trapped in the regions above.

This lapse rate factor is the essence of the “blanket” effect, versus the “marker” effect. I’ve talked about Leckner on another thread, and in particular about the engineering method for evaluating radiant heat transfer. In other areas, we do not look at this transmission from layer to layer. We look at the total thickness and partial pressure of the intervening gas to come up with the path length. It is here that climate scientists diverge from other fields. (Well, he also talks about convection, but I’ll take your word for the assertion that he doesn’t consider it to affect radiation.) Note that the temperature gradient in the atmosphere is pretty small relative to something like a blast furnace. Indeed, I would call ±50 K a constant temperature.

Paul_K:

Thanks for the kind words.

Some interesting ideas in there. But the question about measurable OLR decreases is a difficult one. The amounts are small.

In the end, on any given day at any given location, theoretical OLR will be a function of surface temperature and absorbers in the atmosphere (CO2, H2O etc) through that profile.

So, for example, we pick a hot year and OLR will be higher – because surface temperature has increased the emission of radiation more than increases in trace gases can reduce it.

GCM models don’t show a monotonic increase in surface temperature, they show year on year ups and downs.

What’s more important I think is to understand why the temperature changes year on year. As Trenberth comments, saying “natural variability” isn’t answering the question.

As for attribution, I believe it is a difficult question while “natural variability” is not well understood. Stratospheric cooling is one interesting attribution concept.

John Eggert:

Without the concept the answers cannot be accurately known. Perhaps “other areas” are simpler.

Each “layer” of the atmosphere is in local thermodynamic equilibrium (below about 60km for rotational and vibrational modes of gas molecules and below about 500km for translational modes of gas molecules). Concentrations of different gases change with altitude (e.g. water vapor has a much lower concentration higher up in the atmosphere while CO2 is well mixed). Absorption properties also change with temperature (and therefore with altitude).

So the atmosphere cannot be treated as one layer and we just calculate the absorption – because we get the wrong answer.

When you say climate science does it differently from other areas you appear to imply that the methodology is flawed in climate science. If there is something wrong with the theory (radiative transfer equations) as applied you should point it out.

My understanding is that when the “solution” is calculated by just using absorption through the atmosphere for each trace gas a different answer is obtained compared with when the absorption and emission are calculated at each “layer” in the atmosphere.

Which to trust – the approximation, or the more thorough treatment?
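To illustrate why the two approaches can disagree, here is a deliberately crude grey sketch (not a real radiative transfer code, and not the method of any paper mentioned here): one wavelength-independent absorber, fluxes treated as σT⁴ with no angular integration, and a hypothetical ten-layer column. The absorption-only version attenuates surface radiation and ignores what each layer emits; the layer-by-layer version includes that emission, which depends on each layer’s temperature.

```python
import numpy as np

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def olr_absorption_only(t_surf, tau_layers):
    """Surface emission attenuated by the whole column; atmospheric emission ignored."""
    return SIGMA * t_surf**4 * np.exp(-np.sum(tau_layers))

def olr_layered(t_surf, t_layers, tau_layers):
    """Grey layer-by-layer calculation, layers ordered from the bottom up.
    Each layer transmits exp(-tau) of the flux from below and, by Kirchhoff's
    law, adds its own emission (1 - exp(-tau)) * sigma * T^4."""
    flux = SIGMA * t_surf**4
    for temp, tau in zip(t_layers, tau_layers):
        trans = np.exp(-tau)
        flux = flux * trans + (1.0 - trans) * SIGMA * temp**4
    return flux

# Hypothetical column: 10 layers, total optical depth 2, cooling from 283 K to 218 K
# above a 288 K surface.
tau = np.full(10, 0.2)
temps = np.linspace(283.0, 218.0, 10)
```

If every layer is at the surface temperature, the layered result collapses back to σTs⁴ however opaque the column is, which shows that the temperature profile, not just the amount of absorber, sets the outgoing flux.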

Your statement that:

So the atmosphere cannot be treated as one layer and we just calculate the absorption – because we get the wrong answer.

Is where I disagree. For concentrations from 0 to about 200 ppm, I get the same answer. Specifically, the plot is collinear with a plot of ΔF = 5.35 ln(CO2/CO2i). It is only at higher concentrations of CO2 that there is a divergence. I don’t exactly treat the atmosphere as one layer; I calculate using slices as well.
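For reference, the comparison curve in that comment is the simplified logarithmic forcing expression ΔF = 5.35 ln(C/Ci), usually quoted in W/m². A minimal evaluation follows; the 280 and 560 ppm inputs are illustrative only. Because ΔF is linear in ln C, equal concentration ratios give equal increments, so a curve “collinear” with it on a log-concentration axis shares that property.

```python
import math

def co2_forcing(c, c_initial):
    """Simplified CO2 forcing, Delta F = 5.35 * ln(C / C_i), in W/m^2."""
    return 5.35 * math.log(c / c_initial)

# Equal ratios give equal forcing, so Delta F against ln(C) is a straight line.
per_doubling = co2_forcing(560.0, 280.0)
```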

I’m not sure if there is a problem with the climate method of performing radiant heat transfer calcs. I still cling to the hope that I am wrong.

If the climate models are correct, they should duplicate other models that perform the same function. Do the methods used by climate scientists reproduce the results of other methods? That is, if we apply Ramanathan’s methods of applying the solutions to the RTEs, can we generate Leckner’s curves?

John Eggert:

I’m having to guess a little bit as to exactly what you are proposing as the method, so I can’t be sure.

I had the impression you didn’t agree with treating the atmosphere as one layer (from your statement, “In other areas, we do not look at this transmission from layer to layer. We look at the total thickness and partial pressure of the intervening gas to come up with the path length. It is here that climate scientists diverge from other fields”).

Can you give me a reference for Leckner’s curves?

Why not explain your model/Leckner’s model in detail?

Otherwise I can’t answer the questions.

Science of Doom:-

Thanks for the response. My issue is not with MONOTONICITY per se. I was merely emphasising that the theoretical solution for the simple idealised system shows that OLR should be decreasing continuously under a CO2 forcing for as long as CO2 is growing geometrically and the time period of interest is less than the equilibration time.

Hence, IF CO2 was the primary driver of temperature gain from the 70s through to the end of the century, AND IF TSI variation was small enough to be negligible over that period (as argued by the IPCC) AND IF the equilibration time for CO2 is in excess of 80 years (as argued by the IPCC) AND IF there were no other dominant drivers at work (as argued by the IPCC), then we would expect to see a decreasing trend in OLR and not an increasing trend.

There are a number of possible explanations for why we have observed an increasing trend even if CO2 is a player, but I do not believe that any of them can encompass all of the IPCC assumptions simultaneously, nor do I believe that it is coincidental that all of the AMIP models underestimate the growth in OLR over this period in the critical tropical region. At the very least, all of this suggests to me a need to revise the climate sensitivity to CO2 downwards. This gun is still smoking.

(Your article on stratospheric cooling is fascinating. I am still working on it. Thanks.)

I have a detailed paper (still a work in progress) in MS Word with the calculations in Excel. If you provide a contact, I’ll e-mail them to you. I assume you can access my e-mail address associated with this post.

In short, the emissivity of an intervening gas depends on the product of the path length and the partial pressure over that length. I use layers to produce length × pressure values that are then summed to obtain the atmospheric path length. This is then used to determine the emissivity. It works horizontally, so it should also work vertically.
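A sketch of the layered pressure-path-length sum described above, under hypothetical assumptions: an isothermal exponential atmosphere with an 8 km scale height, 1.01325 bar surface pressure, a well-mixed CO2 mole fraction of 390 ppm, and 100 m layers up to 50 km. It only computes the sum of partial pressure × length in bar·cm and checks it against the closed form for this idealised profile; converting that to an emissivity would need the Hottel or Leckner charts, which are not reproduced here, and the pressure and temperature effects on line shape are ignored.

```python
import numpy as np

P0_BAR = 1.01325   # surface pressure, bar
H_CM = 8.0e5       # hypothetical isothermal scale height, 8 km expressed in cm

def pressure_path_length(x_co2, z_top_cm=5.0e6, dz_cm=1.0e4):
    """Sum CO2 partial pressure x layer thickness over thin layers, in bar*cm."""
    z_mid = np.arange(0.0, z_top_cm, dz_cm) + 0.5 * dz_cm   # layer midpoints
    p_total = P0_BAR * np.exp(-z_mid / H_CM)                # total pressure per layer
    p_co2 = x_co2 * p_total                                 # well-mixed partial pressure
    return np.sum(p_co2 * dz_cm)

pL = pressure_path_length(390e-6)
# Closed form for the same exponential profile, used as a check on the layer sum.
exact = 390e-6 * P0_BAR * H_CM * (1.0 - np.exp(-5.0e6 / H_CM))
```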

If you use google books and search:

Heat Transfer Handbook, Adrian Bejan

you will be directed to one of my references. Leckner’s curves are on page 618. There is a typo on the CO2 curve.

When I studied this in university, we used a text printed in 1952. Hottel’s curves and method were considered standard then for radiant heat effects. I believe it is out of print, but I’ve seen a number of heat transfer texts that reference the curves. Indeed, I haven’t found a heat transfer text yet that doesn’t reference either Hottel or Leckner when considering radiant energy transfer and intervening media.

I studied this in 1987. My professor talked about atmospheric effects and the fact (his word) that CO2 was saturated in the atmosphere.

At this time, I’m working on accounting for the effect of water vapor on CO2 absorption, as the values I obtain for total radiant absorption are higher than accepted values (I get 43 W/m²). I believe that by accounting for water vapor, temperature and pressure, my numbers will be very close to accepted values. The calculations for water vapor are substantially more complicated than for CO2, and this is a spare-time project for me.

Best.

JE

John Eggert:

The term saturation has a different meaning to different people.

In the current debates about the role of CO2 outside of atmospheric physics papers (in the blog world) it means “CO2 can do no more, or almost no more”.

In the world of atmospheric physics, “saturated” means the atmosphere is optically thick at that wavelength. When the atmosphere is optically thin, the transmittance decreases as exp(-u), and when it is optically thick, the transmittance decreases as exp(-u^(1/2)), where u is the amount of absorber in the path.
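The two limits can be compared directly. This sketch sets all band-model constants to 1 (a simplifying assumption, so only the shapes matter) and uses u in arbitrary units. In the thick limit each doubling of absorber still lowers the transmittance, just more slowly, which is the sense in which “saturated” does not mean “can do no more”.

```python
import numpy as np

u = np.array([1.0, 2.0, 4.0, 8.0, 16.0])   # absorber amount, arbitrary units

trans_thin = np.exp(-u)             # optically thin (weak-line) limit
trans_thick = np.exp(-np.sqrt(u))   # optically thick (strong-line) limit

# Optical path -ln(T): each doubling of u doubles it in the thin limit,
# but multiplies it only by sqrt(2) in the thick limit.
growth_thin = np.log(trans_thin[1:]) / np.log(trans_thin[:-1])
growth_thick = np.log(trans_thick[1:]) / np.log(trans_thick[:-1])
```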

See CO2 – An Insignificant Trace Gas? Part Four

And look out for CO2 Part Eight – Saturation, which I am working on at the moment.

Everyone in atmospheric work references Goody. Seeing as Leckner also references Goody for this field I’m not sure what you are getting at.

That said, I am still interested to track down your reference.

Last question (honest!).

Let’s have a thought experiment (actually, an experiment that could easily, though perhaps not cheaply, be performed).

A long chamber of some arbitrary diameter, lying on its side.

In the chamber is dry air with no CO2 (we will assume there is such a thing).

At one end of the chamber is a heat source, and at the other a black surface that is continuously cooled.

We then begin injecting CO2 into the chamber. There is a relief valve, such that the pressure in the chamber does not increase. After each injection, the system is allowed to stabilize.

As the CO2 increases, we measure the light spectrum at the black surface and the temperature of the gas.

At very low levels of CO2, each increase in CO2 will have a significant effect on the energy absorbed by the gas.

As the level of CO2 increases, the effect on energy absorbed by the gas will decrease (that is for each unit increase in CO2, the unit increase in absorbed energy will be less, but the CO2 will continue to absorb energy). This is best modelled by a logarithmic relation.

Up to this point, I believe we agree.

Here is where it seems we disagree.

I believe:

At some point, further increases in CO2 will no longer impact on the amount of heat absorbed by the gas. This would be true if the chamber were lying on its side (and hence P across the chamber is constant) or standing upright (and hence P across the chamber decreases with height). This point would be when the product of length times partial pressure of CO2 is about 200 bar cm. This assertion has been the entire basis of my exchange.

You believe:

Increasing CO2 will result in increasing heat absorption, following a logarithmic relation, even when the rest of the gas in the chamber has been displaced and after that, even if the pressure of the chamber is allowed to increase up to the point where there is a phase change from gas to (probably) solid.

If on the other hand, you agree with me, what is the level of atmospheric CO2 at which there is no longer an impact on heat absorption from increasing CO2?

Finally:

I must again thank you for your patience in this. You have provided me with (by far) the best explanation of the underlying science. Were there a chapter in the IPCC reports as clearly written and well documented as yours, there would likely be fewer skeptics such as myself, that is, those who question whether further increases will have the magnitude of impact needed for the rest of the stuff to happen. I have no opinion on the veracity of temperature measurements, impact projections etc.

Best

JE

John Eggert:

Thanks for the kind words. And there’s no problem with asking continual questions, as they are good ones and worth answering.

The results of the experiment will be quite different from the real atmosphere because of the effects of temperature and pressure.

Each spectral line in CO2’s absorption spectrum has a line shape and line width. The line width and line shape depend on pressure and temperature, and these effects need to be modeled. It’s a very specialist subject, but you get results quite different from reality if these changes are not taken into account.

On to the experiment which is a very good experiment to think about. I believe you will see continual absorption of radiation by CO2 even beyond the point you mention but there is an important point to note:

– Allowing the atmosphere to heat (rather than keeping it at a constant temp and measuring the transmittance) will change the absorptivity. I think the line width changes proportional to T^(-1/2) under these conditions, but I might have got it the wrong way round with it being proportional to T^(1/2). This might be important!

I will try and figure it out properly later.
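For the line-width question, the standard textbook forms may help frame it: collision (Lorentz) broadening at fixed pressure narrows as T^(-n), with n = 1/2 from simple kinetic theory (line databases tabulate fitted exponents per line), while Doppler broadening widens as T^(1/2). The 0.07 cm⁻¹/atm reference half-width, the 296 K reference temperature and the 667 cm⁻¹ line position below are illustrative assumptions. Under these assumptions the collisional width dominates near 1 atm, so heating the gas at constant pressure narrows the lines.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
AMU = 1.66053906660e-27   # atomic mass unit, kg
C_LIGHT = 2.99792458e8    # speed of light, m/s

def lorentz_halfwidth(gamma_ref, p_atm, t_k, t_ref=296.0, n=0.5):
    """Collision-broadened half-width (cm^-1): gamma_ref * p * (T_ref / T)^n.
    n = 0.5 is the simple kinetic-theory value; real lines use fitted exponents."""
    return gamma_ref * p_atm * (t_ref / t_k) ** n

def doppler_halfwidth(nu0_cm, t_k, mass_amu=44.0):
    """Doppler half-width at half maximum (cm^-1) for a line centred at nu0_cm."""
    m = mass_amu * AMU
    return nu0_cm * math.sqrt(2.0 * K_B * t_k * math.log(2.0) / (m * C_LIGHT**2))

# Hypothetical CO2 line near 667 cm^-1 at 1 atm, gas heated from 250 K to 500 K.
lor_cold = lorentz_halfwidth(0.07, 1.0, 250.0)
lor_hot = lorentz_halfwidth(0.07, 1.0, 500.0)
dop_cold = doppler_halfwidth(667.0, 250.0)
dop_hot = doppler_halfwidth(667.0, 500.0)
```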

There is a good qualitative explanation along with some numbers at http://www.realclimate.org/index.php/archives/2007/06/a-saturated-gassy-argument-part-ii/

And just an early look at one of the graphs in the upcoming Part Eight – Saturation: