In Part One we raced through some basics, including the central limit theorem, which is very handy.

This theorem tells us that even if we don’t know the type of distribution of a population, we can say something very specific about the mean of a sample from that population (subject to some caveats).

Even though this theorem is very specific and useful, it is not the easiest idea to grasp conceptually, so it is worth taking the time to think about it before considering the caveats.

What do we know about Samples taken from Populations?

Usually we can’t measure the entire “population”, so we take a sample from it. If we take one sample and measure its mean (= “the average”), then repeat this again and again and plot the “distribution” of all those sample means, we get the graph on the right:

Figure 1

The graph on the right follows a normal distribution, even though the population on the left does not.

We know the probabilities associated with normal distributions, so even if we have just ONE sample (the usual case), we can assess how likely it is that it comes from a specific population.
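A rough sketch of the central limit theorem behaviour described above, written in Python rather than the Matlab used for the figures. The population is assumed to be uniform between 9 and 11 (a hypothetical choice, since the article does not give the limits of its population). We draw many samples, record each sample mean, and compare the spread of those means with σ/√n, which is what the theorem predicts for independent items:

```python
import math
import random
import statistics

# Hypothetical population: uniform between LOW and HIGH, so its mean is 10.
LOW, HIGH = 9.0, 11.0
POP_MEAN = (LOW + HIGH) / 2
POP_SD = (HIGH - LOW) / math.sqrt(12)  # standard deviation of a uniform distribution


def sample_means(n, trials, rng):
    """Draw `trials` samples of size n and return the mean of each one."""
    return [statistics.fmean(rng.uniform(LOW, HIGH) for _ in range(n))
            for _ in range(trials)]


if __name__ == "__main__":
    rng = random.Random(1)
    means = sample_means(n=100, trials=10_000, rng=rng)
    print("population mean:     ", POP_MEAN)
    print("mean of sample means:", round(statistics.fmean(means), 3))
    print("sd of sample means:  ", round(statistics.stdev(means), 4))
    print("sigma / sqrt(n):     ", round(POP_SD / math.sqrt(100), 4))
```

Plotting a histogram of `means` would give the bell shape of the right-hand graph, even though every individual value comes from a flat distribution.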

Here is a demonstration:

Using Matlab, I created a population: the uniform distribution on the left of figure 1. Then I took a random sample from the population. Note that in real life you don’t know the details of the actual population; that is what you are trying to ascertain via statistical methods.

Figure 2

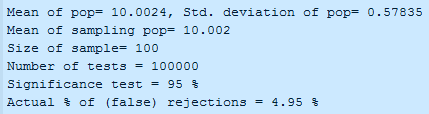

Each sample was 100 items. The test used the known probabilities of the normal distribution to ask: “is this sample from a population of mean = 10?” A statistical test can’t give a definite yes or no, only a probability, so a % threshold has to be set. As figure 2 shows, it was set at 95%.

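Basically we are asking, “is there a 95% likelihood that this sample was drawn from a population with a mean of 10?” More precisely: if the population mean really is 10, does this sample’s mean fall inside the range that contains 95% of all possible sample means?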
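The more precise version of the question can be sketched in a few lines. This assumes, as in the demonstration, that the population standard deviation is known; the value used below (that of a hypothetical uniform population from 9 to 11) is a stand-in, not the article’s figure. The test accepts “mean = 10” whenever the sample mean lands inside the central band holding the chosen percentage of all sample means:

```python
import math
from statistics import NormalDist


def acceptance_interval(mu0, sigma, n, confidence):
    """Range of sample means that a two-sided z-test at `confidence` accepts for H0: mean = mu0."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    half_width = z * sigma / math.sqrt(n)           # z times the standard error of the mean
    return mu0 - half_width, mu0 + half_width


def accepts(sample_mean, mu0, sigma, n, confidence):
    """True if the sample mean falls inside the acceptance interval."""
    lo, hi = acceptance_interval(mu0, sigma, n, confidence)
    return lo <= sample_mean <= hi


if __name__ == "__main__":
    sigma = 2 / math.sqrt(12)  # hypothetical population sd (uniform from 9 to 11)
    for conf in (0.95, 0.99):
        lo, hi = acceptance_interval(10.0, sigma, 100, conf)
        print(f"{conf:.0%}: accept mean = 10 if the sample mean is in [{lo:.3f}, {hi:.3f}]")
```

Raising the confidence widens the band, which is why a higher threshold produces fewer false rejections.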

The exercise of (a) extracting a random sample of 100 items and (b) carrying out the test was repeated 100,000 times.

Even though the sample was drawn from the actual population every single time, 5% of the time (4.95% to be precise) the test rejected the idea that the sample came from this population. This is to be expected: statistical tests can only give answers in terms of a probability.
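A rough re-creation of this experiment in Python, again assuming a hypothetical uniform population from 9 to 11 (the author’s Matlab parameters are not given), repeats the sample-and-test cycle and counts how often a true hypothesis is rejected. The test is the normal-distribution (z) test with a known population standard deviation:

```python
import math
import random
import statistics
from statistics import NormalDist

LOW, HIGH = 9.0, 11.0                  # hypothetical population: uniform, mean 10
POP_SD = (HIGH - LOW) / math.sqrt(12)


def false_rejection_rate(confidence, n=100, trials=10_000, seed=0):
    """Fraction of samples from the true population that a z-test wrongly rejects."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    se = POP_SD / math.sqrt(n)
    rejections = 0
    for _ in range(trials):
        mean = statistics.fmean(rng.uniform(LOW, HIGH) for _ in range(n))
        if abs(mean - 10.0) / se > z_crit:  # sample mean outside the acceptance band
            rejections += 1
    return rejections / trials


if __name__ == "__main__":
    for conf in (0.95, 0.99):
        print(f"{conf:.0%} threshold: {false_rejection_rate(conf):.2%} false rejections")
```

With the 95% threshold the rate should come out near 5%, and with 99% near 1%. Because the same seed gives the same samples, the stricter threshold can only reject fewer of them.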

All we have done is confirm that a test at the 95% threshold gives 95% correct answers and 5% incorrect answers when the sample really does come from the population. We do get incorrect answers. So why not increase the level of confidence in the test by raising the threshold?

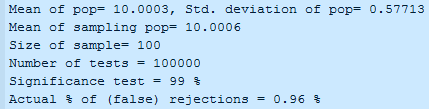

OK, let’s try it. Let’s increase the threshold to 99%:

Figure 3

Nice. Now we get just under 1% false rejections. We have improved our ability to tell whether or not a sample is drawn from a specific population!

Or have we?

Unfortunately there is no free lunch, especially in statistics.

Reducing the Risk of One Error Increases the Risk of a Different Error

In every case so far we happened to know that the sample was drawn from the population. Suppose we don’t know this, which is the usual situation. The wider we cast the net, the more likely we are to conclude that a sample was drawn from a population when in fact it was not.

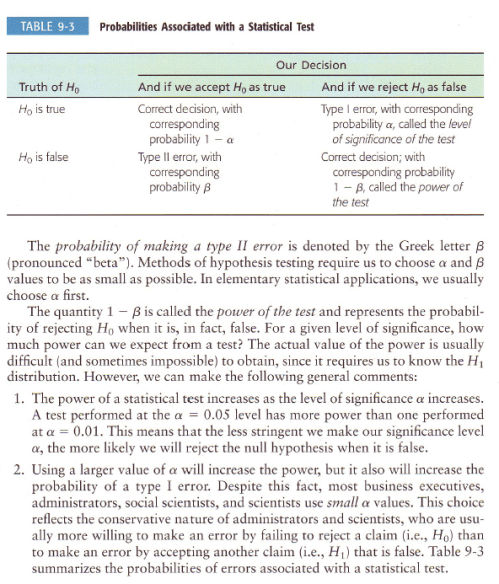

I’ll show some examples shortly, but here is a good summary of the problem, along with the terminology of Type I and Type II errors. Note that H0 is the hypothesis that the sample was drawn from the population in question:

Figure 4

By moving from 95% to 99% certainty, we have been reducing the chance of making a Type I error: thinking that the sample does not come from the population in question when it actually does. But in doing so we have been increasing the chance of making a Type II error: thinking that the sample does come from the population when it does not.
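In symbols (standard textbook notation, not taken from figure 4), the two error rates and the power of a test are:

```latex
\alpha = P(\text{reject } H_0 \mid H_0 \text{ true}), \qquad
\beta  = P(\text{accept } H_0 \mid H_0 \text{ false}), \qquad
\text{power} = 1 - \beta
```

A test at the 95% level fixes α at 0.05. β is not set by the threshold; it depends on how far the true population lies from the one named in H0.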

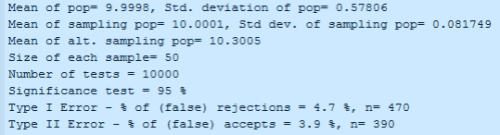

So now let’s widen the Matlab example: we add an alternative population and draw samples from it as well.

First, as before, we take samples from the main population and use the statistical test to find out how good it is at determining whether the samples do come from this population. Second, we take samples from the alternative population and use the same test to see whether it makes the mistake of thinking these samples come from the original population.
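The two-population experiment can be sketched the same way. Both populations are assumed here to be uniform with a width of 2. The original one is centred on 10 and the alternative on 10.3, and the sample size of 50 is also an assumption. These values were picked so the rates land in the same general range as the figures; they are not the author’s settings. Each batch of sample means is generated once and then judged at several thresholds, so any change comes from the threshold alone:

```python
import math
import random
import statistics
from statistics import NormalDist

HALF_WIDTH = 1.0                        # hypothetical: each population spans mean +/- 1
POP_SD = 2 * HALF_WIDTH / math.sqrt(12)
H0_MEAN = 10.0                          # the population named in the hypothesis
ALT_MEAN = 10.3                         # hypothetical alternative population
N = 50                                  # hypothetical sample size


def sample_means(pop_mean, trials, rng):
    """Means of `trials` samples of size N from a uniform population centred on pop_mean."""
    return [statistics.fmean(rng.uniform(pop_mean - HALF_WIDTH, pop_mean + HALF_WIDTH)
                             for _ in range(N))
            for _ in range(trials)]


def rejects(mean, confidence):
    """Two-sided z-test of H0: population mean = H0_MEAN."""
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    return abs(mean - H0_MEAN) / (POP_SD / math.sqrt(N)) > z_crit


def error_rates(confidence, from_h0, from_alt):
    """Type I rate on samples from H0, Type II rate on samples from the alternative."""
    type1 = sum(rejects(m, confidence) for m in from_h0) / len(from_h0)
    type2 = sum(not rejects(m, confidence) for m in from_alt) / len(from_alt)
    return type1, type2


if __name__ == "__main__":
    rng = random.Random(0)
    from_h0 = sample_means(H0_MEAN, 10_000, rng)
    from_alt = sample_means(ALT_MEAN, 10_000, rng)
    for conf in (0.95, 0.99):
        t1, t2 = error_rates(conf, from_h0, from_alt)
        print(f"{conf:.0%}: Type I {t1:.2%}, Type II {t2:.2%}")
```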

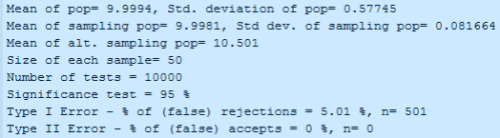

Figure 5

As before, the % of false rejections is about what we would expect for a test at the 95% level (note that the number of tests was reduced to 10,000, for no particular reason).

But now we also see the % of “false acceptances”: cases where a sample from the alternative population is judged to have come from the original population. In this case, this error is around 4%.

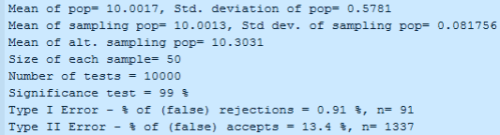

Now we increase the confidence level to 99%:

Figure 6

Of course, the % of false rejections (Type I errors) has dropped to 1%. Excellent.

But the % of false acceptances (Type II errors) has increased from 4% to 13%. Bad news.

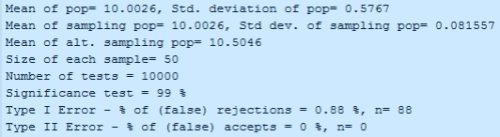

Now let’s demonstrate why we can’t know in advance how likely Type II errors are: it depends on how different the true population is from the one in our hypothesis. In the following example, the mean of the alternative population has moved to 10.5 (from 10.3):

Figure 7

So no Type II errors. And we widen the test to 99%:

Figure 8

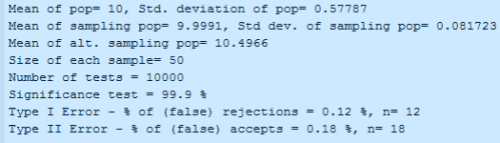

Still no Type II errors. So we widen the test further to 99.9%:

Figure 9

Finally we get some Type II errors. But because the population we are drawing the samples from is different enough from the population we are testing for (our hypothesis), the statistical test is very effective. In this case the “power of the test” (the probability of correctly rejecting a false hypothesis, i.e. 1 minus the Type II error rate) is very high.
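Because the sample mean is (approximately) normally distributed, the Type II rate can also be calculated directly instead of simulated. This sketch uses the standard formula for a two-sided z-test. `shift_in_se` is a hypothetical input: the distance between the true population mean and the hypothesised one, measured in standard errors of the mean:

```python
from statistics import NormalDist

PHI = NormalDist().cdf  # standard normal cumulative distribution function


def type2_rate(shift_in_se, confidence):
    """Chance a two-sided z-test accepts H0 when the true mean is `shift_in_se` standard errors away."""
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    return PHI(z_crit - shift_in_se) - PHI(-z_crit - shift_in_se)


if __name__ == "__main__":
    for shift in (2.0, 4.0, 6.0):
        rates = ", ".join(f"{c:.1%}: {type2_rate(shift, c):.4f}"
                          for c in (0.95, 0.99, 0.999))
        print(f"shift = {shift} SE -> Type II rate at {rates}")
```

The power is `1 - type2_rate(...)`. The pattern matches the text: a larger shift drives the Type II rate toward zero, while a stricter threshold pushes it up.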

So, in summary, when you see a test “at the 5% significance level” (= 95%) or “at the 1% significance level” (= 99%), understand that the more impressive the significance level, the more likely it is that a false hypothesis has been accepted.

Increasing the Sample Size

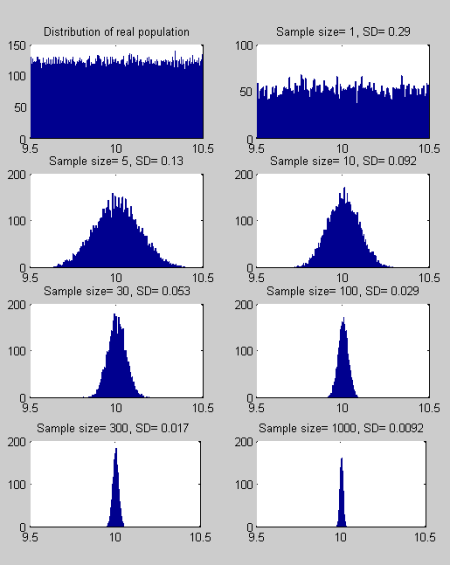

As the sample size increases, the spread of the distribution of “the mean of the sample” gets smaller. I know, stats sounds like gobbledygook.

Let’s look at a simple example of a simple idea that has been turned into incomprehensible English:

Figure 10

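As you increase the size of the sample, you reduce the spread of the sample means (for independent items, their standard deviation is the population standard deviation divided by the square root of the sample size), and this makes separating truth from fiction easier.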
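The effect of sample size can be sketched with the σ/√n formula: quadrupling the sample size halves the spread of the sample means. The values below are hypothetical: a population standard deviation of 1, a true mean 0.3 away from the hypothesised one, and a 95% two-sided z-test:

```python
import math
from statistics import NormalDist


def standard_error(sigma, n):
    """Standard deviation of the mean of n independent items."""
    return sigma / math.sqrt(n)


def type2_rate(true_minus_h0, sigma, n, confidence=0.95):
    """Chance a two-sided z-test wrongly accepts H0 for a sample of size n."""
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    d = true_minus_h0 / standard_error(sigma, n)  # shift measured in standard errors
    phi = NormalDist().cdf
    return phi(z_crit - d) - phi(-z_crit - d)


if __name__ == "__main__":
    for n in (5, 25, 100, 400):
        print(f"n = {n:3d}: SE = {standard_error(1.0, n):.3f}, "
              f"Type II rate = {type2_rate(0.3, 1.0, n):.3f}")
```

As `n` grows, the same 0.3 difference spans more standard errors, so false acceptances become rarer.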

It isn’t always possible to increase the sample size (for example, there are only so many monthly temperatures since satellites were introduced), but if it is possible, it makes it easier to determine whether or not a sample is drawn from a given distribution.

Student’s t-test vs Normal Distribution Test

What is a Student’s t-test? It sounds like something “entry level” that serious people don’t bother with.

Actually it is a test developed by William Gosset just over 100 years ago. He had to publish under a pen name, “Student”, because his employer (the Guinness brewery) treated statistics as one of its trade secrets.

In the tests shown earlier, we had to know the standard deviation of the population from which the sample was drawn. Often we don’t know this. We only know the standard deviation of the sample itself, and we want to test the probability that it is drawn from a population of a certain mean.

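The principle is the same, but the process is slightly different: the standard deviation is estimated from the sample, and the test uses the t-distribution (which has fatter tails than the normal distribution, especially for small samples) instead of the normal distribution.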
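A quick simulation shows why the process has to change. This sketch assumes a normal population (mean 10 and standard deviation 1, both hypothetical) and samples of only 5 items. If the sample standard deviation is simply plugged into the normal-distribution test, a true hypothesis is rejected noticeably more often than the intended 5%. Using the Student’s t critical value for 4 degrees of freedom (about 2.776, from standard t tables) brings the rate back to about 5%:

```python
import math
import random
import statistics

N = 5
Z_CRIT = 1.960  # two-sided 95% critical value of the normal distribution
T_CRIT = 2.776  # two-sided 95% critical value of Student's t, N - 1 = 4 degrees of freedom


def false_rejection_rates(trials=20_000, seed=0):
    """Compare z and t critical values when sigma must be estimated from the sample."""
    rng = random.Random(seed)
    z_rejects = t_rejects = 0
    for _ in range(trials):
        sample = [rng.gauss(10.0, 1.0) for _ in range(N)]  # H0 is true: the mean really is 10
        s = statistics.stdev(sample)                       # sample standard deviation (n - 1)
        t_stat = (statistics.fmean(sample) - 10.0) / (s / math.sqrt(N))
        z_rejects += abs(t_stat) > Z_CRIT
        t_rejects += abs(t_stat) > T_CRIT
    return z_rejects / trials, t_rejects / trials


if __name__ == "__main__":
    z_rate, t_rate = false_rejection_rates()
    print(f"normal critical value: {z_rate:.2%} false rejections")
    print(f"t critical value:      {t_rate:.2%} false rejections")
```

With samples this small the normal critical value rejects a true hypothesis roughly 12% of the time. The gap shrinks as the sample size grows, because the t-distribution approaches the normal distribution.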

More on this in the next article, where hopefully we will get to the concept of autocorrelation.

In everything we have covered so far, we have assumed that each element in a sample and in a population is unrelated to any other element, i.e. that they are independent events. Unfortunately, in the atmosphere and in climate, this assumption is generally not true (perhaps there are some circumstances where it holds, but generally it does not).
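A hedged sketch of why the independence assumption matters. It uses a first-order autoregressive (AR(1)) series as a stand-in for correlated data. The model and its lag-1 coefficient of 0.6 are assumptions for illustration, not something from this article. Every value in the series has mean 10 and standard deviation 1, so H0 is true. Even so, a 95% test that treats the 100 values as independent rejects it far more often than 5%:

```python
import math
import random
import statistics
from statistics import NormalDist

MU, SIGMA = 10.0, 1.0  # hypothetical mean and overall standard deviation of the series
PHI_AR = 0.6           # hypothetical lag-1 autocorrelation
N = 100


def ar1_sample(rng):
    """One AR(1) series of length N whose values each have mean MU and sd SIGMA."""
    noise_sd = SIGMA * math.sqrt(1 - PHI_AR ** 2)  # keeps the overall sd equal to SIGMA
    x = rng.gauss(MU, SIGMA)                       # start from the stationary distribution
    values = [x]
    for _ in range(N - 1):
        x = MU + PHI_AR * (x - MU) + rng.gauss(0.0, noise_sd)
        values.append(x)
    return values


def naive_false_rejection_rate(trials=5_000, seed=0, confidence=0.95):
    """z-test that wrongly assumes the N values are independent."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    se_if_independent = SIGMA / math.sqrt(N)
    rejections = sum(
        abs(statistics.fmean(ar1_sample(rng)) - MU) / se_if_independent > z_crit
        for _ in range(trials)
    )
    return rejections / trials


if __name__ == "__main__":
    print(f"false rejections with correlated data: {naive_false_rejection_rate():.1%}")
```

Positive autocorrelation means neighbouring values carry overlapping information, so the real spread of the sample mean is wider than σ/√n and the test is overconfident.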

Hi SOD,

Your title was “Statistics and Climate”. I hope the climate bit will follow soon.

Cheers,

Martin

Thanks again. The slow pace is suiting me fine – I had to read this Part several times to get it.


SOD: Your discussion of the inverse relationship between Type I and Type II errors could have mentioned that the two types of error are not equally “bad”.

In a science journal, investigators are normally required to reject the null hypothesis with p<0.05 before including a conclusion in the abstract of a paper. The IPCC calls this level of confidence "extremely likely" and p<0.10 "very likely". The majority of the IPCC's conclusions are merely "likely", p<0.33. How does the IPCC get away with publishing conclusions that a science journal wouldn't normally consider to be "statistically significant"?

If a science journal adopted the IPCC's standards, perhaps up to 1/4 of the conclusions reported in such a journal would be due to (random) chance arrangement of the data. If one considers that systematic errors in experiments and investigator bias also affect the reliability of results, one can see that the normal standard for "statistical significance" (p<0.05) is sensible for scientific journals. This policy means that more Type II errors are made (causing fewer publications). Science advances more slowly, but the foundations of scientific knowledge are more secure.

Policymakers have a different agenda from scientists. If there is a 50% chance that an expensive disaster can be avoided by spending a small amount of money, a policymaker will probably want to take action to prevent the possible disaster. In this case the policymaker would be far more interested in Type II errors than in Type I errors. Demanding a standard of p<0.05 under these circumstances would be absurd. This is why the IPCC publishes conclusions that wouldn't be found in the abstract of scientific articles. Unfortunately, restrictions on GHG emissions aren't going to cost a "small amount" of money, and the really expensive disasters appear to be decades away.

The conflict between the need for science to avoid Type I errors and the need of policymakers to avoid both types of errors lies at the heart of many of the controversies with the IPCC and climate science. The desire to influence policy appears to be corrupting the scientific standards of some scientists and an excessive focus on scientific uncertainty appears to be used by opponents to prevent any action.

SoD,

Thanks for this – as someone who is very much a beginner in this area I think I will find this series a big help in following some of the statistical arguments around climate. However, being a beginner I have a very basic (and possibly dumb) question.

You did two initial tests, one with a 95% significance level and then one with a 99% significance level, and got about 5% and 1% false rejections respectively. This initially seemed completely counter-intuitive to me, because if you are asking the question “is there an n% likelihood that this sample was drawn from a population with a mean of 10?”, it seemed logical to expect more rejections with a higher significance level, since it’s easier to make a claim with 95% probability than with 99% probability.

Having given it more thought, would I be right in saying that the question could be re-phrased as “given a population with mean of 10 and a normal distribution, would this sample fall within the range of values covering n% of the population”? This would explain the number of false rejections which resulted but it does seem to be a slightly different question.

Or am I on the wrong track entirely?

andy:

I’m not sure I understand the question, but I do understand the problem of getting confused by the statistics ideas presented.

To attempt to clarify your first question, take a look at figure 10 (the last figure) and the 3rd graph (2nd row, left side). You can see the range of values you get if you keep taking samples of 5 items and take the mean (=”the average”) in each case.

With me so far?

Now suppose you personally take a sample of 5 items and take the mean of those 5 items. Let’s say the mean = 9.5. Is it “likely” that it came from the original distribution?

It’s “unlikely”. The reason is that the averages of 5 items mostly fall between about 9.7 and 10.3.

In fact, the shape of the curve gives an idea of the probabilities involved. If we said, is there a 50% chance it (the value of 9.5) did not come from the original distribution? – the answer would be “Yes”.

If we said, is there a 10% chance it did not come from the original distribution? – the answer would be “Yes”. This is the converse of saying, is there a 90% likelihood that it did come from the original distribution? And getting the answer “No”.

If we said, is there a 0.000001% chance it did not come from the original distribution? – the answer would be “No”. This is the converse of saying, is there a 99.999999% likelihood that it did come from the original distribution? And getting the answer “Yes” [update – this last just corrected].

Perhaps I haven’t answered the question or helped…?

It depends on what the claim is. And what the truth is.

If it is true that the sample was drawn from the original population then the higher we make the probability level the more likely we are to find the (true) claim true. The lower we make the probability level the more likely we are to think the true claim is false. This is because the mean of each sample will be different – and some will “land” a long way from the original population mean.

If in fact the sample was drawn from a different population, then the higher we make the probability level the more likely we are to believe a false claim to be true. Imagine spreading the net very wide: instead of from 9.5 to 10.5, imagine spreading it from 1.5 to 19.5. Now just about every sample will be happily accepted as being from the original population of mean = 10.

SoD,

Many thanks – it’s much straighter in my mind now and I managed to follow the rest of the article. I’ll see how I do when it gets really complex 😉


Typically the population is very large, making a census or a complete enumeration of all the values in the population impractical or impossible. Samples are collected and statistics are calculated from the samples so that one can make inferences or extrapolations from the sample to the population.

OK, this is about hypothesis testing, and is the major issue with man-made climate change. In a typical experiment one wishes to determine (beyond some acceptable small doubt) if something exists. It can be (i) a drug effect in clinical trials, or (ii) a man-made effect in climate change. The framework is to assume it does not (the null [effect] or H0) and work out the chance that you observed your data under this assumption. So we assume (i) the drug doesn’t work or (ii) man did not have an effect on climate. Take (i): suppose a potential new headache pill doesn’t work. Then we would expect that if we gave a smartie instead, it would work the same. Collect some data on the pill and the smartie and, cutting out all the gruesome details, when we do the voodoo (technical term for stats) we get P(getting data given H0 is true) = 0.08.

If the drug is just a different coloured smartie and everyone who took it is colour blind then we would get the data we did in 8% of such studies. Then all that remains is to wonder if that’s a big enough chance to conclude one way or the other – long story.

Question: have you ever seen the null quoted by the AGW protagonists? It should be that ‘man did not do it’, of course. Then we should see a whole series of studies that demonstrate some evidence towards man actually doing something to the climate. Observations showing a direct link from CO2 to temperature would be evidence, but I haven’t seen much of it. I’m afraid stuff like “polar bears are becoming cannibalistic” is pretty much worthless. Also, the fact that it’s getting hotter is immaterial to the hypothesis and as such is also pretty worthless.

Of course those pushing this thing did not set up their experiment in any suitable fashion at all. It would be simple if they did (wouldn’t it?): they would just work out the probability that they got their data given that man didn’t do anything (similar to my smarties having no effect). Not that simple, I suppose, because there are so many climate factors that are confounded (meaning completely inseparable). The problem is actually impossible, as the climate is just far too complex for any one man to comprehend even a small part of it; it’s guesswork.

Instead what they have cleverly done is to hide behind complex theories and circumstantial evidence (such as a few variables are correlated without assessment of cause). They also now claim (because ‘most scientists agree’ – who did they ask – bias perhaps?) that anyone questioning the man-made claim should prove that man didn’t do it.

Boy oh boy they’ve turned the normal scientific approach (statistical in nature) completely ass about t1t. They want that man did it as the H0 and the Ha (alternative to H0) as man didn’t do it. That’s not how it works apart from in an equivalence testing scenario and that’s not where we are.

Notice the ever more desperate scare stories and recourse to the demise of fluffy creatures, feeding man’s tendency and need for self-flagellation? When asked about the evidence that man is responsible, they now say “prove that he isn’t”. If it wasn’t so tragic you would have to laugh.

This is how they now perpetuate the scam, no doubt man has effects on the climate (mostly local UHI etc) but via CO2, and to the extent they claim will happen unless you pay them lots of money – COME ON, SMELL THE COFFEE.

David Shaw:

You seem new to this blog. Please read the Etiquette and About This Blog.

Long essays unrelated to the specifics of the article, especially about “stuff people have done”, are liable to get snipped or removed.

If you have a specific point related to the article in question you are welcome, and encouraged, to make it.

If you want to sound off about “stuff people have done” and “stuff you think people might have done” there are many better blogs with higher readership and more people who will thank you for your contribution.

This blog welcomes specific testable ideas related to the article.

So, for example, I haven’t made any claim about polar bears and neither has anyone else commenting on this article. Now if you have a specific example of a polar bear hypothesis that someone has made that you believe will help to highlight a specific point in this article, please go ahead.

On the other hand, if you want to sound off about random people who have written stuff about polar bears but never written anything on this blog and never been endorsed on this blog you are in the wrong place.

This is a science blog.

Your next comment – will be snipped or deleted if it does not follow blog guidelines.

Then I shall leave you to be. I was only attempting to clarify your statistics in terms of climate science; forgive me, I’m a statistician, and frankly I don’t get your point. All I do is give examples; if you wish to discuss statistics in the context of climate, I suggest (as someone else did) you do likewise. I have never heard such a tirade at such an inoffensive post.

Good luck with it all. Please delete as you wish, but maybe people want to understand how statistics is being misused in terms of climate science. But hey, what do I know? I’m just a scientist. I apologise to all for any offence that this post causes; I do not know of any personal message system that I can post the blog ‘scientist’ upon.

David