In Eleven – End of the Last Ice age we saw the sequence of events that led to the termination of the most recent ice age – Termination I.

The date of this event, the time when the termination began, was about 17.5-18.0 kyrs ago (note 1). We also saw that “rising solar insolation” couldn’t explain this. By way of illustration I produced some plots in Pop Quiz: End of An Ice Age – all from the last 100 kyrs – and asked readers to identify which one coincided with Termination I.

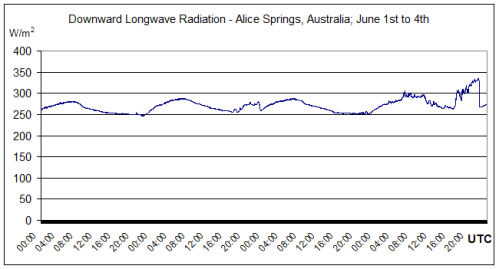

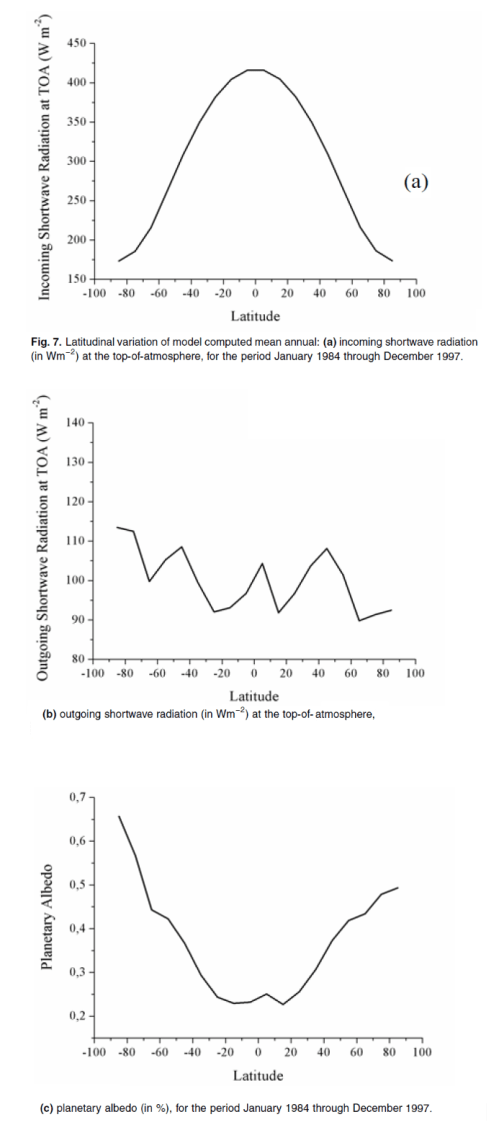

But this simple graph of insolation at 65ºN on July 1st summarizes the problem for the “classic version” (see Part Six – “Hypotheses Abound”) of the “Milankovitch theory” – in simple terms, if solar insolation at 18 kyrs ago caused the ice age to end, why didn’t the same or higher insolation at 100 kyrs, 80 kyrs, or from 60-30 kyrs cause the last ice age to end earlier?

Figure 1

And for a more visual demonstration of solar insolation changes in time, take a look at the Hövmoller plots in the comments of Part Eleven.
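For readers who want to see how a curve like this depends on the orbital parameters, here is a minimal sketch of the standard daily-mean top-of-atmosphere insolation formula. It is not the code behind the figure. The function name, the solar constant of 1361 W/m² and the present-day orbital values in the usage lines are my assumptions for illustration; values for past times would have to come from a published orbital solution, which is not included here.

```python
import math

def daily_mean_insolation(lat_deg, true_longitude_deg, eccentricity,
                          obliquity_deg, perihelion_longitude_deg,
                          solar_constant=1361.0):
    """Daily-mean top-of-atmosphere insolation in W/m^2.

    true_longitude_deg: position in the orbit (0 = March equinox,
    90 = June solstice). perihelion_longitude_deg is the true longitude
    at which perihelion occurs (assumed about 283 degrees today).
    """
    lat = math.radians(lat_deg)
    lam = math.radians(true_longitude_deg)
    eps = math.radians(obliquity_deg)
    peri = math.radians(perihelion_longitude_deg)

    # Solar declination from obliquity and position in the orbit.
    decl = math.asin(math.sin(eps) * math.sin(lam))

    # Hour angle at sunset; the clamp handles polar day and polar night.
    cos_h0 = -math.tan(lat) * math.tan(decl)
    h0 = math.acos(max(-1.0, min(1.0, cos_h0)))

    # Inverse-square distance factor (a/r)^2 for an elliptical orbit.
    distance = ((1.0 + eccentricity * math.cos(lam - peri))
                / (1.0 - eccentricity ** 2)) ** 2

    geometry = (h0 * math.sin(lat) * math.sin(decl)
                + math.cos(lat) * math.cos(decl) * math.sin(h0))
    return solar_constant / math.pi * distance * geometry

# Assumed approximate present-day values: e = 0.0167, tilt = 23.44 degrees.
present = daily_mean_insolation(65.0, 90.0, 0.0167, 23.44, 282.9)
# Same orbit but with a larger tilt, to show the sensitivity to obliquity.
high_tilt = daily_mean_insolation(65.0, 90.0, 0.0167, 24.5, 282.9)
```

With these assumed inputs the June solstice value at 65ºN comes out in the high 400s of W/m², and raising the tilt raises it further.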

The other problem for the Milankovitch theory of ice age termination is the fact that southern hemisphere temperatures rose in advance of global temperatures. So the South led the globe out of the ice age. This is hard to explain if the cause of the last termination was melting northern hemisphere ice sheets. Take a look at Eleven – End of the Last Ice age.

Now we’ve quickly reviewed Termination I, let’s take a look at Termination II. This is the end of the penultimate ice age.

The traditional Milankovitch theory says that peak high latitude solar insolation around 127 kyrs BP was the trigger for the massive deglaciation that ended that earlier ice age.

The well-known SPECMAP dating of sea-level/ice-volume vs time has Termination II at 128 ± 3 kyrs BP. All is good.

Or is it?

What is the basis for the SPECMAP dating?

The ice age records that have been most used and best known come from ocean sediments. These were the first long-dated climate records that went back hundreds of thousands of years.

How do they work and what do they measure?

Oxygen exists in the form of a few different stable isotopes. The most common is ¹⁶O, with ¹⁸O the next most common but present in a much smaller proportion. Water, aka H₂O, also exists with both these isotopes and has the handy behavior of evaporating and precipitating H₂¹⁸O at different rates to H₂¹⁶O. The measurement is expressed as δ18O: the deviation (the delta, or δ) of the sample’s ¹⁸O/¹⁶O ratio from that of a reference standard, in parts per thousand.
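As a small illustration of the delta notation, here is a sketch of the calculation. The function name is mine, and the reference ratio is an assumed value for the VSMOW water standard; the appropriate standard depends on the material being measured, so treat the number as a placeholder.

```python
# Assumed 18O/16O ratio of the VSMOW reference standard (illustrative).
VSMOW_RATIO = 2005.2e-6

def delta_18o(sample_ratio, standard_ratio=VSMOW_RATIO):
    """Deviation of the sample's 18O/16O ratio from the standard, in per mil."""
    return (sample_ratio / standard_ratio - 1.0) * 1000.0

# A sample with 3% less 18O relative to 16O than the standard
# has a delta of about -30 per mil.
depleted = delta_18o(VSMOW_RATIO * 0.97)
```

Negative values mean the sample is depleted in the heavy isotope relative to the standard, positive values mean it is enriched.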

The journey of water vapor evaporating from the ocean, followed by precipitation, produces a measure of the starting ratio of the isotopes as well as the local precipitation temperature.

The complex end result of these different process rates is that deep sea benthic foraminifera take up 18O out of the deep ocean, and the δ18O ratio is mostly in proportion to the global ice volume. The resulting deep sea sediments are therefore a time-series record of ice volume. However, sedimentation rates are not an exact science and are not necessarily constant in time.

As a result of lots of careful work by innumerable people over many decades, out popped a paper by Hays, Imbrie & Shackleton in 1976. This demonstrated that a lot of the recorded changes in ice volume happened at orbital frequencies of precession and obliquity (approximately every 20 kyrs and every 40 kyrs). But there was an even stronger signal – the start and end of ice ages – at approximately every 100 kyrs. This coincides roughly with changes in eccentricity of the earth’s orbit, not that anyone has a (viable) theory that links this change to the start and end of ice ages.
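To give a feel for what “a signal at orbital frequencies” means, here is a toy sketch that projects a time series onto a sinusoid of a chosen period and reports the amplitude found there. The series is entirely synthetic (three sine waves with amplitudes I picked), not a real sediment record, and the function name is hypothetical. This is not the spectral method used in the 1976 paper.

```python
import math

def amplitude_at_period(series, dt_kyr, period_kyr):
    """Amplitude of the sinusoid of the given period contained in the series."""
    omega = 2.0 * math.pi / period_kyr
    a = sum(x * math.cos(omega * i * dt_kyr) for i, x in enumerate(series))
    b = sum(x * math.sin(omega * i * dt_kyr) for i, x in enumerate(series))
    return 2.0 * math.hypot(a, b) / len(series)

# Synthetic 800 kyr "record" sampled every 1 kyr (made-up amplitudes).
synthetic = [
    1.0 * math.sin(2.0 * math.pi * t / 100.0)
    + 0.6 * math.sin(2.0 * math.pi * t / 41.0)
    + 0.4 * math.sin(2.0 * math.pi * t / 23.0)
    for t in range(800)
]

for period in (100.0, 41.0, 23.0, 60.0):
    print(period, round(amplitude_at_period(synthetic, 1.0, period), 2))
```

The periods that were put into the series stand out, while a period that was not put in (60 kyrs here) shows only a small leakage amplitude. Note that this only works if the time axis is right, which is exactly the problem with undated sediments.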

Now the clear signal of obliquity and precession in the record gives us the option of “tuning up” the record so that peaks in the record match orbital frequencies of precession and obliquity. We discussed the method of tuning in some past comments on a similar, but much later, dataset – the LR04 stack (thanks to Clive Best for highlighting it).

The method isn’t wrong, but we can’t confirm the timing of key events with a dataset where dates have been tuned to a specific theory.

Luckily, some new methods have come along.

Ice Core Dating

It’s been exciting times for the last twenty plus years in climate science for people who want to wear thick warm clothing and “get away from it all”.

Greenland and Antarctica have yielded a number of ice cores. Greenland now has a record that goes back 123,000 years (NGRIP). Antarctica now has a record that goes back 800,000 years (EDC, aka, “Dome C”). Antarctica also has the Vostok ice core that goes back about 400,000 years, Dome Fuji that goes back 340,000 years and Dronning Maud Land (aka DML or EDML) which is higher resolution but only goes back 150,000 years.

What do these ice cores measure and how is the dating done?

The ice cores measure temperature at time of snow deposition via the δ18O measurement discussed above (note 2), which in this case is not a measure of global ice volume but of air temperature. The gas trapped in bubbles in the ice cores gives us CO2 and CH4 concentrations. We also can measure dust deposition and all kinds of other goodies.

The first problem is that the gas is “younger” than the ice because it moves around until the snow compacts enough. So we need a model to calculate this, and there is some uncertainty about the difference in age between the ice and the gas.

The second problem is how to work out the age of the ice. At the start we can count annual layers. After sufficient time (a few tens of thousands of years) these layers can’t be distinguished any more, so instead we can use models of ice flow physics. Then a few handy constraints arrive, like 10Be events that occurred about 50 kyrs BP. After ice flow physics and external events are exhausted, the data is constrained by “orbital tuning”, as with deep ocean cores.

Caves, Corals and Accurate Radiometric Dating

Neither deep sea cores, nor ice cores, give us much possibility of radiometric dating. But caves and corals do.

For newcomers to dating methods, if you have substance A that decays into substance B with a “half-life” that is accurately known, and you know exactly how much of substance A and B was there at the start (e.g. no possibility of additional amounts of A or B getting into the thing we want to measure) then you can very accurately calculate the age that the substance was formed.
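Here is that idea as a minimal sketch, for the simplest textbook case: a parent that decays directly into a stable daughter, no daughter present at the start, and nothing added or lost since. The function name and the numbers in the usage lines are made up for illustration. Real uranium-series dating is more involved than this, because it tracks the ingrowth of an intermediate daughter that itself decays.

```python
import math

def decay_age(parent_atoms, daughter_atoms, half_life):
    """Age of a closed system, in the same time units as half_life.

    Assumes no daughter atoms at formation and no gain or loss of
    parent or daughter afterwards.
    """
    if parent_atoms <= 0 or daughter_atoms < 0:
        raise ValueError("need parent_atoms > 0 and daughter_atoms >= 0")
    decay_constant = math.log(2.0) / half_life
    return math.log(1.0 + daughter_atoms / parent_atoms) / decay_constant

# Equal amounts of parent and daughter: exactly one half-life has passed.
one_half_life = decay_age(50.0, 50.0, half_life=10.0)
# Three parts daughter to one part parent: two half-lives.
two_half_lives = decay_age(25.0, 75.0, half_life=10.0)
```

The point to take away is how much rides on the “closed system” assumption: any daughter present at the start, or leaking in or out later, shifts the calculated age.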

Basically it’s all down to how the deposition process works. Uranium-Thorium dating has been successfully used to date calcite depositions in rock.

So, take a long section that has been continuously deposited, measure the δ18O (and 13C) at lots of points along the section, and take a number of samples and calculate the age along the section with radiometric dating. The subject of what exactly is being measured in the cores is complicated, but I will greatly over-simplify and say it revolves around two points:

- The actual amount of deposition, as not much water is available to create these depositions during extreme glaciation

- The variation of δ18O (and 13C), which to a first order depends on local air temperature

For people interested in more detail, I recommend McDermott 2004 (some relevant extracts are in note 3 below).
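The “date a few samples, then assign ages along the whole section” step can be sketched as a simple age-depth model. The version below just interpolates linearly between dated tie points, which assumes a constant growth rate between each pair of dates; published chronologies use more careful methods. The function name and the tie-point numbers are hypothetical.

```python
from bisect import bisect_right

def interpolate_age(depth_mm, dated_depths_mm, dated_ages_ka):
    """Linearly interpolate an age for a depth between dated tie points.

    dated_depths_mm must be strictly increasing, with at least two
    entries, and dated_ages_ka must hold the matching radiometric ages.
    """
    if not dated_depths_mm[0] <= depth_mm <= dated_depths_mm[-1]:
        raise ValueError("depth lies outside the dated section")
    # Index of the tie point just below the sample (clamped at the end).
    i = min(bisect_right(dated_depths_mm, depth_mm), len(dated_depths_mm) - 1)
    d0, d1 = dated_depths_mm[i - 1], dated_depths_mm[i]
    a0, a1 = dated_ages_ka[i - 1], dated_ages_ka[i]
    return a0 + (a1 - a0) * (depth_mm - d0) / (d1 - d0)

# Made-up tie points: three dated samples along a 200 mm section.
tie_depths = [0.0, 100.0, 200.0]
tie_ages = [120.0, 130.0, 150.0]

# Ages assigned to two isotope samples taken between the dated points.
age_at_50 = interpolate_age(50.0, tie_depths, tie_ages)
age_at_150 = interpolate_age(150.0, tie_depths, tie_ages)
```

In this made-up example the lower half of the section grew half as fast as the upper half, so the same 50 mm of calcite covers twice as much time.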

Corals offer the possibility, via radiometric dating, of getting accurate dates of sea level. The most important variable to know is any depression and uplift of the earth’s crust.

Accurate dating of caves and coral has been a growth industry in the last twenty years with some interesting results.

Termination II

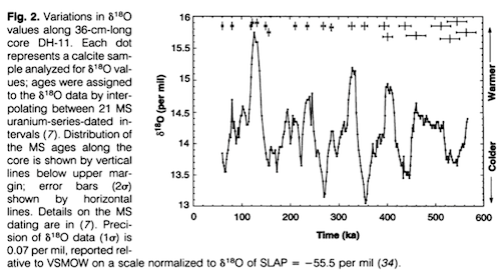

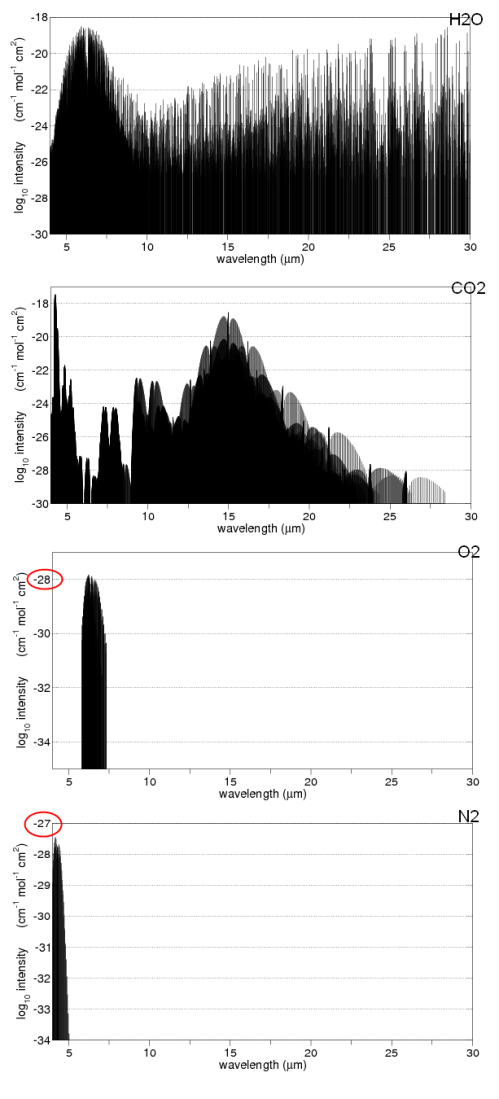

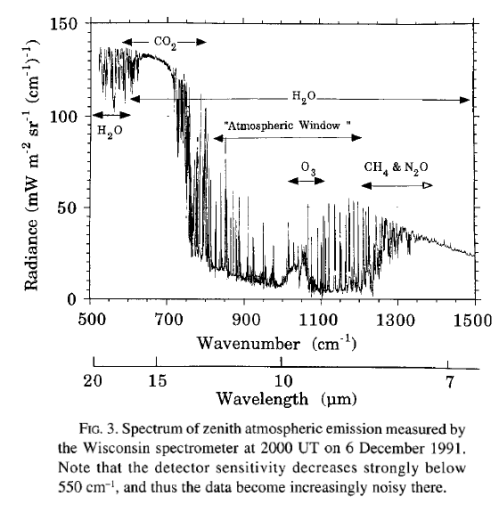

Winograd et al 1992 analyzed Devils Hole in Nevada (DH-11):

The Devils Hole δ18O time curve (fig 2) clearly displays the sawtooth pattern characteristic of marine δ18O records that have been interpreted to be the result of the waxing and waning of Northern Hemisphere ice sheets.. But what caused the δ18O variations in DH-11 shown on fig. 2? ..The δ18O variations in atmospheric precipitation are – to a first approximation – believed to reflect changes in average winter-spring surface temperature..

Figure 2

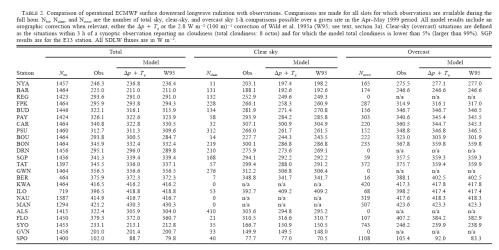

Termination II occurs at 140 ± 3 (2σ) ka in the DH-11 record, at 140 ± 15 ka in the Vostok record (14), and at 128 ± 3 ka in the SPECMAP record (13). (The uncertainty in the DH-11 record is in the 2σ uncertainties on the MS uranium-series dates; other dates and uncertainties are from the sources cited.) Termination III occurred at about 253 ± 3 (2σ) ka in the DH-11 record and at about 244 ± 3 ka in the SPECMAP record. These differences.. are minimum values..
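A quick way to read those Termination II numbers is to turn each date into a range and ask which ranges overlap. This sketch does only that. It treats every quoted ± value as a hard interval, which is a simplification on my part: the quote states 2σ only for Devils Hole, and the helper names are hypothetical.

```python
def interval(age_ka, err_ka):
    """Return the (youngest, oldest) bounds implied by age ± error."""
    return (age_ka - err_ka, age_ka + err_ka)

def overlaps(a, b):
    """True if two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]

# Termination II dates as quoted from Winograd et al 1992.
devils_hole = interval(140, 3)
vostok = interval(140, 15)
specmap = interval(128, 3)

print(overlaps(devils_hole, specmap))  # False: 137-143 vs 125-131
print(overlaps(vostok, specmap))       # True: 125-155 vs 125-131
print(overlaps(vostok, devils_hole))   # True
```

On the quoted uncertainties the Devils Hole and SPECMAP dates are incompatible, while the Vostok date at that time was too loosely constrained to rule out either.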

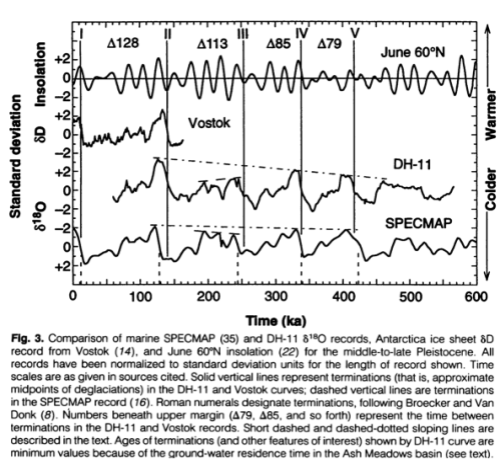

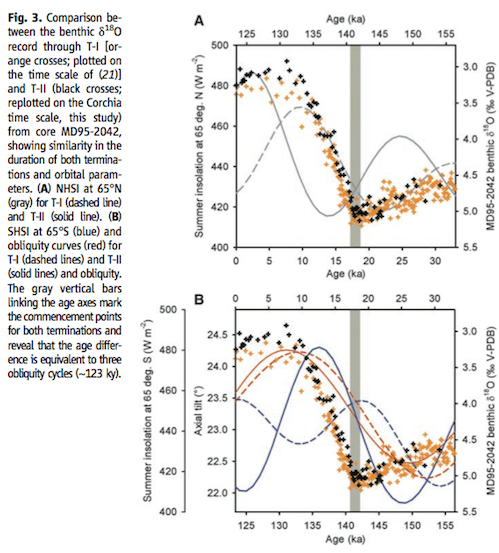

They compare summer insolation at 65ºN with SPECMAP, Devils Hole and the Vostok ice core on a handy graph:

Figure 3

Of course, not everyone was happy with this new information, and who knows what the isotope measurement really was a proxy for?

Slowey, Henderson & Curry 1996

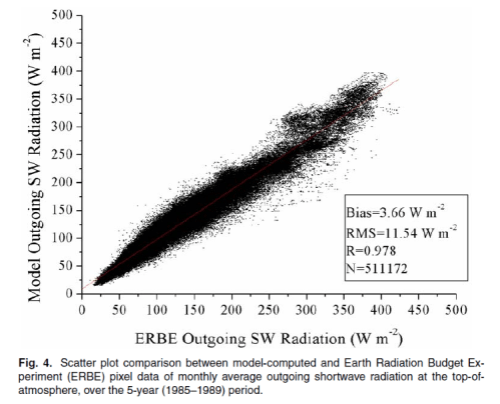

A few years later, in 1996, Slowey, Henderson & Curry (not the famous Judith) made this statement from their research:

Our dates imply a timing and duration for substage 5e in substantial agreement with the orbitally tuned marine chronology. Initial direct U-Th dating of the marine δ18O record supports the theory that orbital variations are a fundamental cause of Pleistocene climate change.

[Emphasis added, likewise with all quotes].

Henderson & Slowey 2000

Then in 2000, the same Henderson & Slowey (sans Curry):

Milankovitch proposed that summer insolation at mid-latitudes in the Northern Hemisphere directly causes the ice-age climate cycles. This would imply that times of ice-sheet collapse should correspond to peaks in Northern Hemisphere June insolation.

But the penultimate deglaciation has proved controversial because June insolation peaks 127 kyr ago whereas several records of past climate suggest that change may have occurred up to 15kyr earlier.

There is a clear signature of the penultimate deglaciation in marine oxygen-isotope records. But dating this event, which is significantly before the 14C age range, has not been possible.

Here we date the penultimate deglaciation in a record from the Bahamas using a new U-Th isochron technique. After the necessary corrections for α-recoil mobility of 234U and 230Th and a small age correction for sediment mixing, the midpoint age for the penultimate deglaciation is determined to be 135 ± 2.5 kyr ago. This age is consistent with some coral-based sea-level estimates, but it is difficult to reconcile with June Northern Hemisphere insolation as the trigger for the ice-age cycles.

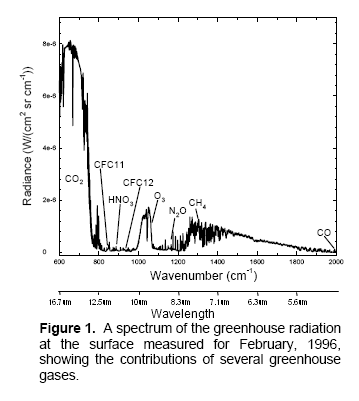

Zhao, Xia & Collerson (2001)

High-precision 230Th-238U ages for a stalagmite from Newdegate Cave in southern Tasmania, Australia.. The fastest stalagmite growth occurred between 129.2 ± 1.6 and 122.1 ± 2.0 ka (61.5 mm/ka), coinciding with a time of prolific coral growth from Western Australia (128-122 ka). This is the first high-resolution continental record in the Southern Hemisphere that can be compared and correlated with the marine record. Such correlation shows that in southern Australia the onset of full interglacial sea level and the initiation of highest precipitation on land were synchronous. The stalagmite growth rate between 129.2 and 142.2 ka (5.9 mm/ka) was lower than that between 142.2 and 154.5 ka (18.7 mm/ka), implying drier conditions during the Penultimate Deglaciation, despite rising temperature and sea level.

This asymmetrical precipitation pattern is caused by latitudinal movement of subtropical highs and an associated Westerly circulation, in response to a changing Equator-to-Pole temperature gradient.

Both marine and continental records in Australia strongly suggest that the insolation maximum between 126 and 128 ka at 65°N was directly responsible for the maintenance of full Last Interglacial conditions, although the triggers that initiated Penultimate Deglaciation (at 142 ka) remain unsolved.

Figure 4

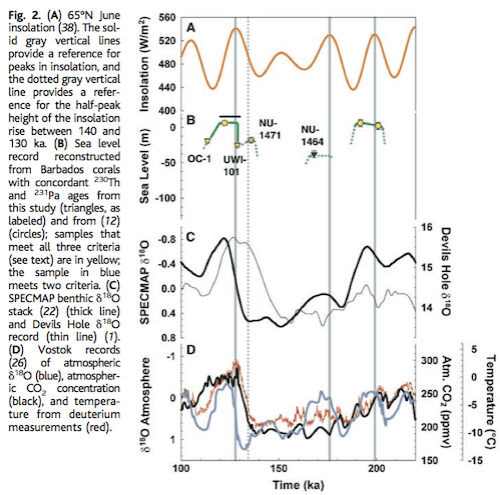

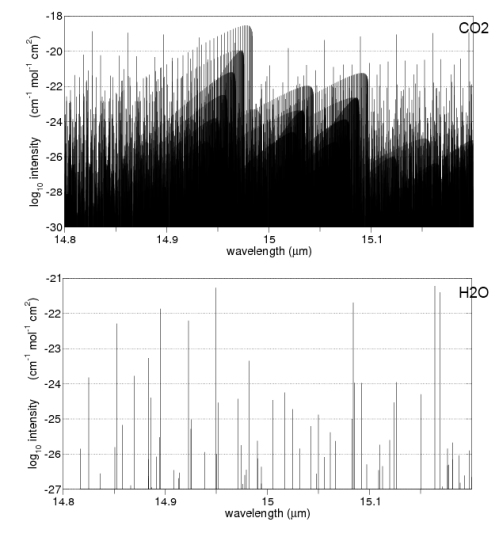

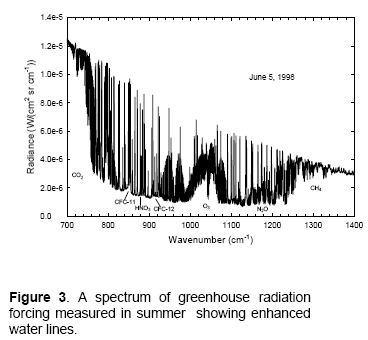

Gallup, Cheng, Taylor & Edwards (2002)

An outcrop within the last interglacial terrace on Barbados contains corals that grew during the penultimate deglaciation, or Termination II. We used combined 230Th and 231Pa dating to determine that they grew 135.8 ± 0.8 thousand years ago, indicating that sea level was 18 ± 3 meters below present sea level at the time. This suggests that sea level had risen to within 20% of its peak last-interglacial value by 136 thousand years ago, in conflict with Milankovitch theory predictions..

Figure 2B summarizes the sea level record suggested by the new data. Most significantly our record includes corals that document sea level directly during Termination II, suggesting that the majority (~80%) of the Termination II sea level rise occurred before 135 ka. This is broadly consistent with early shifts in δ18O recorded in the Bahamas and Devils Hole and with early dates (134 ka) of last interglacial corals from Hawaii, which call into question the timing of Termination II in the SPECMAP record..

Figure 5

Of course, all is not lost for the many-headed Hydra (and see note 4):

..The Milankovitch theory in its simplest form cannot explain Termination II, as it does Termination I. However, it is still plausible that insolation forcing played a role in the timing of Termination II. As deglaciations must begin while Earth is in a glacial state, it is useful to look at factors that could trigger deglaciation during a glacial maximum. These include – (i) sea ice cutting off a moisture source for the ice sheets; – (ii) isostatic depression of continental crust; and – (iii) high Southern Hemisphere summer insolation through effects on the atmospheric CO2 concentration.

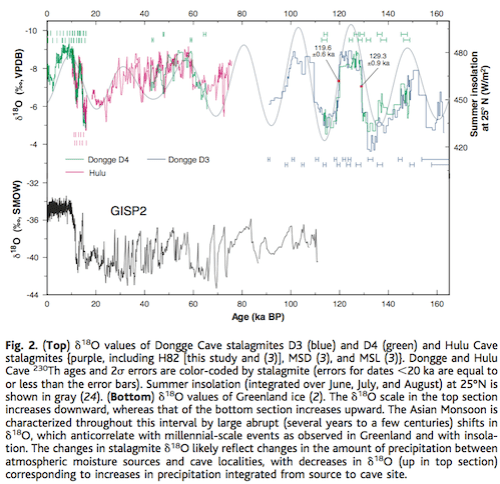

Yuan et al 2004 provide evidence in opposition:

Thorium-230 ages and oxygen isotope ratios of stalagmites from Dongge Cave, China, characterize the Asian Monsoon and low-latitude precipitation over the past 160,000 years. Numerous abrupt changes in 18O/16O values result from changes in tropical and subtropical precipitation driven by insolation and millennial-scale circulation shifts.

The Last Interglacial Monsoon lasted 9.7 +/- 1.1 thousand years, beginning with an abrupt (less than 200 years) drop in 18O/16O values 129.3 ± 0.9 thousand years ago and ending with an abrupt (less than 300 years) rise in 18O/16O values 119.6 ± 0.6 thousand years ago. The start coincides with insolation rise and measures of full interglacial conditions, indicating that insolation triggered the final rise to full interglacial conditions.
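The quoted duration and its uncertainty can be checked from the two dates. This sketch assumes the start and end dates have independent errors and adds them in quadrature; that is my assumption about how the paper’s figure was reached, not something stated in the extract.

```python
import math

# Dates and uncertainties as quoted from Yuan et al 2004 (thousand years ago).
start_ka, start_err = 129.3, 0.9
end_ka, end_err = 119.6, 0.6

duration = start_ka - end_ka
# Quadrature sum, valid only if the two dating errors are independent.
duration_err = math.hypot(start_err, end_err)

print(round(duration, 1), round(duration_err, 1))  # 9.7 1.1
```

The result reproduces the 9.7 ± 1.1 thousand years given in the extract.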

But they also comment:

Although the timing of Monsoon Termination II is consistent with Northern Hemisphere insolation forcing, not all evidence of climate change at about this time is consistent with such a mechanism (Fig. 3).

Figure 6

Sea level apparently rose to levels as high as –21 m as early as 135 ky before the present (27 & Gallup et al 2002), preceding most of the insolation rise. The half-height of marine oxygen isotope Termination II has been dated at 135 +/- 2.5 ky (Henderson & Slowey 2000).

Speleothem evidence from the Alps indicates temperatures near present values at 135 +/- 1.2 ky (31). The half-height of the δ18O rise at Devils Hole (142 +/- 3 ky) also precedes most of the insolation rise (20). Increases in Antarctic temperature and atmospheric CO2 (32) at about the time of Termination II appear to have started at times ranging from a few to several millennia before most of the insolation rise (4, 32, 33).

[Their reference numbers amended to papers where cited in this article]

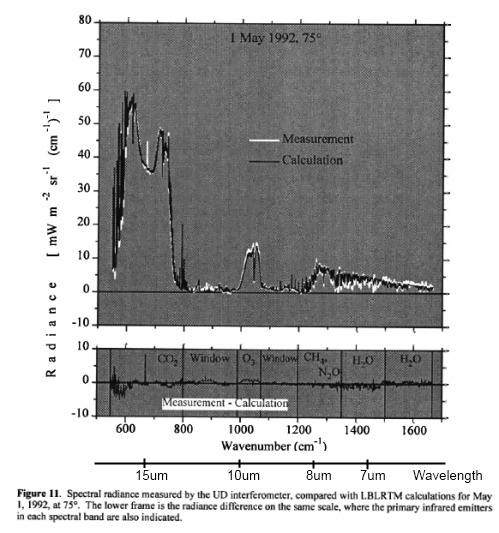

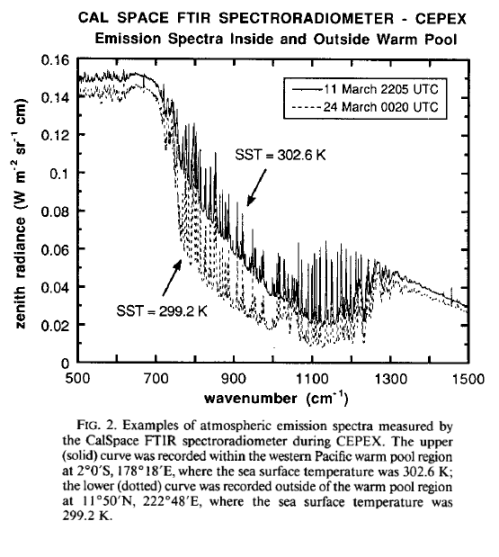

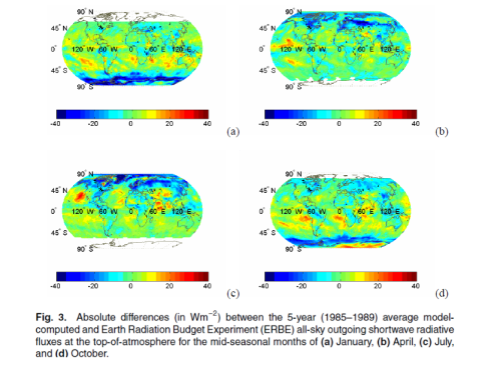

Drysdale et al 2009

Variations in the intensity of high-latitude Northern Hemisphere summer insolation, driven largely by precession of the equinoxes, are widely thought to control the timing of Late Pleistocene glacial terminations. However, recently it has been suggested that changes in Earth’s obliquity may be a more important mechanism. We present a new speleothem-based North Atlantic marine chronology that shows that the penultimate glacial termination (Termination II) commenced 141,000 ± 2,500 years before the present, too early to be explained by Northern Hemisphere summer insolation but consistent with changes in Earth’s obliquity. Our record reveals that Terminations I and II are separated by three obliquity cycles and that they started at near-identical obliquity phases.

Standard stuff by now, for readers who have made it this far.

But the Drysdale paper is interesting on two fronts – their dating method and their “one result in a row” matching a theory with evidence (I extracted more text from the paper in note 5 for interested readers). Let’s look at the dating method first.

Basically what they did was match up the deep ocean cores that record global ice volume (but have no independent dating) with accurately radiometrically-dated speleothems (cave depositions). How did they do the match up? It’s complicated but relies on the match between the δ18O in both records. The approach of providing absolute dating for existing deep ocean cores will give very interesting results if it proves itself.

Figure 7

The correspondence between Corchia δ18O and Iberian-margin sea-surface temperatures (SSTs) through T-II (Fig. 2) is remarkable. Although the mechanisms that force speleothem δ18O variations are complex, we believe that Corchia δ18O is driven largely by variations in rainfall amount in response to changes in regional SSTs. Previous studies from Corchia show that speleothem δ18O is sensitive to past changes in North Atlantic circulation at both orbital and millennial time scales, with δ18O increasing during colder (glacial or stadial) phases and the reverse occurring during warmer (interglacial or interstadial) phases.

Figure 8

Now to the hypothesis:

Figure 9

We find that NHSI [NH summer insolation] intensity is unlikely to be the driving force for T-II: Intensity values are close to minimum at the time of the start of T-II, and a lagged response to the previous insolation peak at ~148 ka is unlikely because of its low amplitude (Fig. 3A). This argues against the SPECMAP curve being a reliable age template through T-II, given the age offset of ~8 ky for the T-II midpoint (8) with respect to our record. A much stronger case can be made for obliquity as a forcing mechanism.

On the basis of our results (Fig. 3B), both T-I and T-II commence at the same phase of obliquity, and the period between them is exactly equivalent to three obliquity cycles (~123 ky).

(More of their explanation in note 5).

EPICA 2006

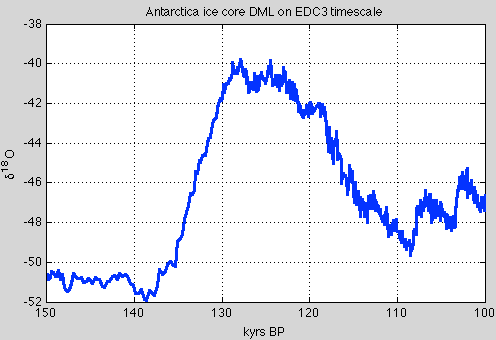

Here is my plot of the Dronning Maud Land ice core (DML) on EDC3 timescale from EPICA 2006 (data downloaded from the Nature website):

Figure 10

The many Antarctic and Greenland ice cores are still undergoing revisions of dating, and so I haven’t attempted to get the latest work. I just thought it would be good to throw in an ice core.

The value of δ18O here is a proxy for local temperature. On this timescale local temperatures began rising about 138 kyrs BP.

Conclusion

New data on Termination II from the last 20 years of radiometric dating from a number of different sites with different approaches demonstrate that TII started about 140 kyrs BP.

Here is the solar insolation curve at 65ºN over the last 180 kyrs, with the best dates of the two ice age terminations, separated by about 121 – 125 kyrs:

Figure 11

It’s proven that ice ages are terminated by low solar insolation in the high latitudes of the northern hemisphere. The basis for this is that the low solar insolation allows a much quicker build up of the northern hemisphere ice sheets, causing dynamic instability, leading to ice sheet calving, which disrupts ocean currents, outgassing CO2 in large concentrations and thereby creating a positive feedback for temperature rise. The local ice sheet collapses also create positive feedback due to higher solar insolation now absorbed. As the ice sheets continue to melt, the (finally) rising solar insolation in high northern latitudes strengthens the pre-existing conditions and helps to establish the termination. Thus the orbital theory is given strong support from the evidence of the timing of the last two terminations.

I just made that up in a few minutes for fun. It’s not true.

We saw one paper with different evidence for the start of TII – Yuan et al (and see note 6). However, it seems that most lines of evidence, including absolute dating of sea level rise, put TII starting around 140 kyrs BP.

This also means, for maths wizards, that the time between ice age terminations, from one result in a row, is about 122 kyrs.

As an aside, because Winograd et al 1992 calculated their age for TIII “at about 253 kyrs”:

This puts the time between TIII and TII at about 113 kyrs which is exactly the time of five precessional cycles!

I haven’t yet dug into any other dates for TIII so this theory is quite preliminary.
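For anyone who wants to play the same game, here is the arithmetic as a sketch. The dates are the ones used in this article (with 17.75 kyrs taken as the middle of the 17.5-18.0 range for Termination I). The cycle lengths are the approximate values implied by the Drysdale extracts: about 41 kyrs for obliquity (three cycles in ~123 ky) and about 23 kyrs for precession. The names are mine.

```python
OBLIQUITY_KYR = 41.0   # implied by "three obliquity cycles (~123 ky)"
PRECESSION_KYR = 23.0  # from the "~23-ky cycle" in note 5

# Termination dates in kyrs BP, as used in this article.
T1, T2, T3 = 17.75, 140.0, 253.0

def cycles(interval_kyr, period_kyr):
    """How many cycles of the given period fit in the interval."""
    return interval_kyr / period_kyr

for label, gap in (("TII to TI", T2 - T1), ("TIII to TII", T3 - T2)):
    print(label, gap,
          "obliquity cycles:", round(cycles(gap, OBLIQUITY_KYR), 2),
          "precession cycles:", round(cycles(gap, PRECESSION_KYR), 2))
```

One gap lands close to a whole number of obliquity cycles and the other close to a whole number of precession cycles, which is a reminder of how easy it is to find a match when there are several periods to choose from and only one or two intervals to fit.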

Articles in the Series

Part One – An introduction

Part Two – Lorenz – one point of view from the exceptional E.N. Lorenz

Part Three – Hays, Imbrie & Shackleton – how everyone got onto the Milankovitch theory

Part Four – Understanding Orbits, Seasons and Stuff – how the wobbles and movements of the earth’s orbit affect incoming solar radiation

Part Five – Obliquity & Precession Changes – and in a bit more detail

Part Six – “Hypotheses Abound” – lots of different theories that confusingly go by the same name

Part Seven – GCM I – early work with climate models to try and get “perennial snow cover” at high latitudes to start an ice age around 116,000 years ago

Part Seven and a Half – Mindmap – my mind map at that time, with many of the papers I have been reviewing and categorizing plus key extracts from those papers

Part Eight – GCM II – more recent work from the “noughties” – GCM results plus EMIC (earth models of intermediate complexity) again trying to produce perennial snow cover

Part Nine – GCM III – very recent work from 2012, a full GCM, with reduced spatial resolution and speeding up external forcings by a factor of 10, modeling the last 120 kyrs

Part Ten – GCM IV – very recent work from 2012, a high resolution GCM called CCSM4, producing glacial inception at 115 kyrs

Pop Quiz: End of An Ice Age – a chance for people to test their ideas about whether solar insolation is the factor that ended the last ice age

Eleven – End of the Last Ice age – latest data showing relationship between Southern Hemisphere temperatures, global temperatures and CO2

Twelve – GCM V – Ice Age Termination – very recent work from He et al 2013, using a high resolution GCM (CCSM3) to analyze the end of the last ice age and the complex link between Antarctic and Greenland

Fourteen – Concepts & HD Data – getting a conceptual feel for the impacts of obliquity and precession, and some ice age datasets in high resolution

Fifteen – Roe vs Huybers – reviewing In Defence of Milankovitch, by Gerard Roe

Sixteen – Roe vs Huybers II – remapping a deep ocean core dataset and updating the previous article

Seventeen – Proxies under Water I – explaining the isotopic proxies and what they actually measure

Eighteen – “Probably Nonlinearity” of Unknown Origin – what is believed and what is put forward as evidence for the theory that ice age terminations were caused by orbital changes

Nineteen – Ice Sheet Models I – looking at the state of ice sheet models

References

A Pliocene-Pleistocene stack of 57 globally distributed benthic δ18O records, Lorraine E. Lisiecki & Maureen E. Raymo, Paleoceanography (2005) – free paper

Continuous 500,000-Year Climate Record from Vein Calcite in Devils Hole, Nevada, Winograd, Coplen, Landwehr, Riggs, Ludwig, Szabo, Kolesar & Revesz, Science (1992) – paywall, but might be available with a free Science registration

Palaeo-climate reconstruction from stable isotope variations in speleothems: a review, Frank McDermott, Quaternary Science Reviews 23 (2004) – free paper

Direct U-Th dating of marine sediments from the two most recent interglacial periods, NC Slowey, GM Henderson & WB Curry, Nature (1996)

Evidence from U-Th dating against northern hemisphere forcing of the penultimate deglaciation, GM Henderson & NC Slowey, Nature (2000)

Timing and duration of the last interglacial inferred from high resolution U-series chronology of stalagmite growth in Southern Hemisphere, J Zhao, Q Xia & K Collerson, Earth and Planetary Science Letters (2001)

Direct determination of the timing of sea level change during Termination II, CD Gallup, H Cheng, FW Taylor & RL Edwards, Science (2002)

Timing, Duration, and Transitions of the Last Interglacial Asian Monsoon, Yuan, Cheng, Edwards, Dykoski, Kelly, Zhang, Qing, Lin, Wang, Wu, Dorale, An & Cai, Science (2004)

Evidence for Obliquity Forcing of Glacial Termination II, Drysdale, Hellstrom, Zanchetta, Fallick, Sánchez Goñi, Couchoud, McDonald, Maas, Lohmann & Isola, Science (2009)

One-to-one coupling of glacial climate variability in Greenland and Antarctica, EPICA Community Members, Nature (2006)

Millennial- and orbital-scale changes in the East Asian monsoon over the past 224,000 years, Wang, Cheng, Edwards, Kong, Shao, Chen, Wu, Jiang, Wang & An, Nature (2008)

Notes

Note 1 – In common ice age convention, the date of a termination is the midpoint of the sea level rise from the last glacial maximum to the peak interglacial condition. This can be confusing for newcomers.

Note 2 – The alternative method used on some of the ice cores is δD, which works on the same basis – water with the hydrogen isotope Deuterium evaporates and condenses at different rates to “regular” water.

Note 3 – A few interesting highlights from McDermott 2004:

2. Oxygen isotopes in precipitation

As discussed above, δ18O in cave drip-waters reflect

(i) the δ18O of precipitation (δ18Op) and

(ii) in arid/semi-arid regions, evaporative processes that modify δ18Op at the surface prior to infiltration and in the upper part of the vadose zone.

The present-day pattern of spatial and seasonal variations in δ18Op is well documented (Rozanski et al., 1982, 1993; Gat, 1996) and is a consequence of several so-called ‘‘effects’’ (e.g. latitude, altitude, distance from the sea, amount of precipitation, surface air temperature).

On centennial to millennial timescales, factors other than mean annual air temperature may cause temporal variations in δ18Op (e.g. McDermott et al., 1999 for a discussion). These include:

(i) changes in the δ18O of the ocean surface due to changes in continental ice volume that accompany glaciations and deglaciations;

(ii) changes in the temperature difference between the ocean surface temperature in the vapour source area and the air temperature at the site of interest;

(iii) long-term shifts in moisture sources or storm tracks;

(iv) changes in the proportion of precipitation which has been derived from non-oceanic sources, i.e. recycled from continental surface waters (Koster et al., 1993); and

(v) the so-called ‘‘amount’’ effect.

As a result of these ambiguities there has been a shift from the expectation that speleothem δ18Oct might provide quantitative temperature estimates to the more attainable goal of providing precise chronological control on the timing of major first-order shifts in δ18Op, that can be interpreted in terms of changes in atmospheric circulation patterns (e.g. Burns et al., 2001; McDermott et al., 2001; Wang et al., 2001), changes in the δ18O of oceanic vapour sources (e.g. Bar-Matthews et al., 1999) or first-order climate changes such as D/O events during the last glacial (e.g. Spötl and Mangini, 2002; Genty et al., 2003)..

4.1. Isotope stage 6 and the penultimate deglaciation

Speleothem records from Late Pleistocene mid- to high-latitude sites are discussed first, because these are likely to be sensitive to glacial–interglacial transitions, and they illustrate an important feature of speleothems, namely that calcite deposition slows down or ceases during glacials. Fig. 1 is a compilation of approximately 750 TIMS U-series speleothem dates that have been published during the past decade, plotted against the latitude of the relevant cave site.

The absence of speleothem deposition in the mid- to high latitudes of the Northern Hemisphere during isotope stage 2 is striking, consistent with results from previous compilations based on less precise alpha-spectrometric dates (e.g. Gordon et al., 1989; Baker et al., 1993; Hercmann, 2000). By contrast, speleothem deposition appears to have been essentially continuous through the glacial periods at lower latitudes in the Northern Hemisphere (Fig. 1)..

..A comparison of the DH-11 [Devils Hole] record with the Vostok (Antarctica) ice-core deuterium record and the SPECMAP record that largely reflects Northern Hemisphere ice volume (Fig. 2) indicates that both clearly record the first-order glacial–interglacial transitions.

Note 4 – Note the reference to Milankovitch theory “explaining” Termination I. This appears to be the point that insolation was at least rising as Termination began, rather than falling. It’s not demonstrated or proven in any way in the paper that Termination I was caused by high latitude northern insolation; it is an illustration of the way the “widely-accepted point of view” usually gets a thumbs up. You can see the same point in the quotation from the Zhao paper. It’s the case with almost every paper.

If it’s impossible to disprove a theory with any counter evidence then it fails the test of being a theory.

Note 5 – More from Drysdale et al 2009:

During the Late Pleistocene, the period of glacial-to-interglacial transitions (or terminations) has increased relative to the Early Pleistocene [~100 thousand years (ky) versus 40 ky]. A coherent explanation for this shift still eludes paleoclimatologists. Although many different models have been proposed, the most widely accepted one invokes changes in the intensity of high-latitude Northern Hemisphere summer insolation (NHSI). These changes are driven largely by the precession of the equinoxes, which produces relatively large seasonal and hemispheric insolation intensity anomalies as the month of perihelion shifts through its ~23-ky cycle.

Recently, a convincing case has been made for obliquity control of Late Pleistocene terminations, which is a feasible hypothesis because of the relatively large and persistent increases in total summer energy reaching the high latitudes of both hemispheres during times of maximum Earth tilt. Indeed, the obliquity period has been found to be an important spectral component in methane (CH4) and paleotemperature records from Antarctic ice cores.

Testing the obliquity and other orbital-forcing models requires precise chronologies through terminations, which are best recorded by oxygen isotope ratios of benthic foraminifera (δ18Ob) in deep-sea sediments (1, 8).

Although affected by deep-water temperature (Tdw) and composition (δ18Odw) variations triggered by changes in circulation patterns (9), δ18Ob signatures remain the most robust measure of global ice-volume changes through terminations. Unfortunately, dating of marine sediment records beyond the limits of radiocarbon methods has long proved difficult, and only Termination I [T-I, ~18 to 9 thousand years ago (ka)] has a reliable independent chronology.

Most marine chronologies for earlier terminations rely on the SPECMAP orbital template (8) with its a priori assumptions of insolation forcing and built-in phase lags between orbital trigger and ice-sheet response. Although SPECMAP and other orbital-based age models serve many important purposes in paleoceanography, their ability to test climate-forcing hypotheses is limited because they are not independent of the hypotheses being tested. Consequently, the inability to accurately date the benthic record of earlier terminations constitutes perhaps the single greatest obstacle to unraveling the problem of Late Pleistocene glaciations..

..

Obliquity is clearly very important during the Early Pleistocene, and recently a compelling argument was advanced that Late Pleistocene terminations are also forced by obliquity but that they bridge multiple obliquity cycles. Under this model, predominantly obliquity-driven total summer energy is considered more important in forcing terminations than the classical precession-based peak summer insolation model, primarily because the length of summer decreases as the Earth moves closer to the Sun. Hence, increased insolation intensity resulting from precession is offset by the shorter summer duration, with virtually no net effect on total summer energy in the high latitudes. By contrast, larger angles of Earth tilt lead to more positive degree days in both hemispheres at high latitudes, which can have a more profound effect on the total summer energy received and can act essentially independently from a given precession phase. The effect of obliquity on total summer energy is more persistent at large tilt angles, lasting up to 10 ky, because of the relatively long period of obliquity. Lastly, in a given year the influence of maximum obliquity persists for the whole summer, whereas at maximum precession early summer positive insolation anomalies are cancelled out by late summer negative anomalies, limiting the effect of precession over the whole summer.

Although the precise three-cycle offset between T-I and T-II in our radiometric chronology and the phase relationships shown in Fig. 3 together argue strongly for obliquity forcing, the question remains whether obliquity changes alone are responsible.

Recent work invoked an “insolation-canon,” whereby terminations are Southern Hemisphere–led but only triggered at times when insolation in both hemispheres is increasing simultaneously, with SHSI approaching maximum and NHSI just beyond a minimum. However, it is not clear that relatively low values of NHSI (at times of high SHSI) should play a role in deglaciation. An alternative is an insolation canon involving SHSI and obliquity.

Note 6 – There are a number of papers based on Dongge and Hulu caves in China that have similar data and conclusions but I am still trying to understand them. They attempt to tease out the relationship between δ18O and the monsoonal conditions and it’s involved. These papers include: Kelly et al 2006, High resolution characterization of the Asian Monsoon between 146,000 and 99,000 years B.P. from Dongge Cave, China and global correlation of events surrounding Termination II; Wang et al 2008, Millennial- and orbital-scale changes in the East Asian monsoon over the past 224,000 years.